TLDR

• Core Points: Neuromorphic engineering integrates memory and computation in hardware to mimic brain function, enabling faster, energy-efficient data processing for robotic perception.

• Main Content: The field aims to narrow the performance gap between human and machine vision by building processors that handle sensing and memory in tandem, improving real-time interpretation of visual data.

• Key Insights: Brain-like chips can reduce latency and power use, potentially transforming autonomous systems, robotics, and edge AI.

• Considerations: Adoption hinges on scalable fabrication, software ecosystems, and evaluating reliability under varied real-world conditions.

• Recommended Actions: Stakeholders should invest in cross-disciplinary research, standardize benchmarks, and pilot neuromorphic systems in constrained, real-time tasks.

Content Overview

Neuromorphic engineering is a frontier in computing that seeks to replicate the architecture and operational principles of the human brain. Traditional computing systems separate memory (where data is stored) from processing (where data is computed). This separation creates bottlenecks, particularly in tasks involving perception, pattern recognition, and real-time decision making, where data must be repeatedly moved between memory and the processor. Neuromorphic chips aim to close this gap by integrating memory and computation into a single, brain-inspired architecture. The implications for robotics are significant: sensors generate streams of data that require rapid interpretation, and the ability to process this information quickly and efficiently is crucial for tasks such as obstacle avoidance, motion tracking, and autonomous navigation.

The drive behind brain-inspired hardware is not merely incremental efficiency. By emulating neural mechanisms such as spiking neurons, synaptic plasticity, and event-driven computation, these chips can operate at substantially lower power, which is vital for mobile and edge robotics where energy is limited. Moreover, the real-time nature of neuromorphic processing matches the demands of robotic perception, where delays can compromise safety and performance. The source article reports a breakthrough in which a neuromorphic approach lets robots see faster and in real time, reinforcing the broader trend toward hardware that mirrors cognitive processing.

Neuromorphic designs depart from von Neumann architectures by leveraging asynchronous, event-driven computation. Rather than executing a fixed stream of instructions, neuromorphic systems respond to input as it arrives, much like biological senses. This paradigm supports continuous learning and adaptation, potentially allowing robots to improve their perception without relying on expensive cloud-based computation. The result is a class of processors and accelerators that can handle complex sensory tasks with reduced latency and energy consumption, offering new possibilities for autonomous systems operating in dynamic environments.
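To make the contrast with frame-by-frame processing concrete, here is a minimal sketch of event generation. It is not taken from the article: the function name `events_from_change`, the `threshold` value, and the tiny 3x3 frames are all illustrative assumptions. The idea is that only pixels whose brightness changed produce work for downstream stages.

```python
from typing import List, Tuple

# Hypothetical event format: (row, col, polarity), polarity is +1 or -1.
Event = Tuple[int, int, int]


def events_from_change(prev: List[List[float]],
                       curr: List[List[float]],
                       threshold: float = 0.2) -> List[Event]:
    """Emit one event per pixel whose brightness changed by at least `threshold`."""
    events: List[Event] = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (p, q) in enumerate(zip(prev_row, curr_row)):
            delta = q - p
            if abs(delta) >= threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events


# A bright spot moves one pixel to the right between two samples.
prev_frame = [[0.0, 0.0, 0.0],
              [0.0, 0.5, 0.0],
              [0.0, 0.0, 0.0]]
curr_frame = [[0.0, 0.0, 0.0],
              [0.0, 0.0, 0.5],
              [0.0, 0.0, 0.0]]

events = events_from_change(prev_frame, curr_frame)
print(events)  # two events for nine pixels; the static background is silent
```

In this toy case only two of nine pixels generate events, so a downstream stage would have two items to process instead of a full frame. Real event-driven sensors and chips do this asynchronously in hardware rather than by comparing stored frames.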

In evaluating these advancements, it is important to place them within the broader landscape of artificial intelligence, computer vision, and robotics. Traditional deep learning approaches have achieved remarkable accuracy in perception tasks but often require substantial computational resources and power, making real-time, on-device inference challenging for mobile robots. Neuromorphic hardware promises to complement or even transform these capabilities by enabling low-power, real-time processing at the edge. The discussion around these systems includes not only hardware design but also software ecosystems, neuron models, and learning rules that drive performance in perception tasks.

In-Depth Analysis

The core premise of neuromorphic engineering is to build hardware that mirrors key aspects of the brain’s information processing, particularly for perception and action. In conventional computing, data must be shuttled between memory and a central processing unit (CPU), which costs both time and energy. Neuromorphic chips instead co-locate memory and computation: networks of artificial neurons and synapses hold information in their own internal state, and synaptic strengths are adjusted through learning rules inspired by neuroscience.

One of the defining benefits of this approach is energy efficiency. The brain is commonly estimated to run on about 20 watts while handling a wide range of tasks. Neuromorphic systems aim to approach that efficiency for specific perceptual workloads, such as recognizing objects in a scene or tracking motion, by using event-driven computation. In these systems, a neuron does work (and draws dynamic power) only when its input changes, which avoids much of the idle computation common in conventional clocked processors.
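A rough way to see why event-driven computation can save work is to count synaptic operations. The sketch below is a back-of-the-envelope model, not a measurement: the layer sizes and the spike counts are made-up numbers, and operation count is only a crude proxy for energy (it ignores static power, memory access, and communication costs).

```python
from typing import Sequence


def dense_ops(n_inputs: int, n_outputs: int, n_steps: int) -> int:
    """Clocked layer: every input-output pair is evaluated at every time step."""
    return n_inputs * n_outputs * n_steps


def event_driven_ops(spikes_per_step: Sequence[int], n_outputs: int) -> int:
    """Event-driven layer: each input spike triggers one update per output neuron."""
    return sum(spikes_per_step) * n_outputs


# Hypothetical workload: 1000 inputs, 100 outputs, 10 time steps,
# with only a handful of inputs active at each step.
spikes_per_step = [20, 15, 0, 0, 5, 30, 10, 0, 0, 20]
dense = dense_ops(1000, 100, len(spikes_per_step))
sparse = event_driven_ops(spikes_per_step, 100)
print(dense, sparse, dense / sparse)
```

Under these assumed numbers the event-driven layer performs 10,000 updates against 1,000,000 for the dense one. The advantage shrinks as input activity rises, which is why the benefit depends on how sparse the sensory stream actually is.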

Another critical advantage is latency reduction. Real-time perception requires rapid processing of sensory streams. Neuromorphic chips can react to new data almost as soon as it arrives, because processing occurs locally where sensing occurs. This reduces the need for costly round trips to distant hardware or cloud servers, enabling faster decision-making in robotics. For example, a robot navigating a cluttered environment benefits from immediate interpretation of visual cues, allowing it to adjust trajectory on the fly and avoid collisions more effectively.

The architecture of neuromorphic systems often features spiking neural networks (SNNs), where information is conveyed through discrete spikes akin to action potentials in biological neurons. SNNs can model time-dependent processes more naturally than traditional feedforward networks, making them well-suited for temporal tasks such as motion prediction and dynamic scene understanding. Synaptic plasticity—the ability of connections to strengthen or weaken with experience—enables on-device learning and adaptation. When a robot encounters a new environment, these learning mechanisms can help it fine-tune perception to improve accuracy over time without external supervision.
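As an illustration of how a spiking neuron carries information in spike timing, the following is a minimal leaky integrate-and-fire (LIF) simulation. LIF is a standard textbook neuron model, chosen here as an assumption; the article does not say which model any particular chip uses, and the parameter values (`tau`, `v_thresh`, and so on) are arbitrary.

```python
from typing import List, Sequence, Tuple


def simulate_lif(input_current: Sequence[float],
                 dt: float = 1.0,
                 tau: float = 10.0,
                 v_rest: float = 0.0,
                 v_thresh: float = 1.0,
                 v_reset: float = 0.0) -> Tuple[List[int], List[float]]:
    """Simulate one LIF neuron; return (spike step indices, membrane trace)."""
    v = v_rest
    spikes: List[int] = []
    trace: List[float] = []
    for t, i_in in enumerate(input_current):
        # Forward-Euler step of dv/dt = (-(v - v_rest) + i_in) / tau
        v += dt * (-(v - v_rest) + i_in) / tau
        if v >= v_thresh:
            spikes.append(t)   # emit a spike at this step
            v = v_reset        # and reset the membrane potential
        trace.append(v)
    return spikes, trace


strong_spikes, _ = simulate_lif([2.0] * 30)   # drive above threshold
weak_spikes, _ = simulate_lif([0.5] * 30)     # drive below threshold
print(strong_spikes, weak_spikes)
```

With the strong input the neuron fires at regular intervals, while the weak input never reaches threshold and produces no spikes at all. Stronger input shortens the interval between spikes, which is one way an SNN encodes signal intensity in time.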

Hardware implementations vary widely, from custom analog circuits to digital architectures that approximate spiking behavior. Analog neuromorphic hardware can achieve high energy efficiency by exploiting the continuous nature of physical processes to emulate neuronal dynamics. Digital implementations, while potentially more flexible and easier to program, may incur higher power consumption but benefit from mature tooling and software ecosystems. Hybrid approaches seek to balance these trade-offs by combining soft real-time digital control with energy-efficient analog processing where advantageous.

In evaluating progress, researchers consider several metrics: latency, power consumption, recognition accuracy, robustness to noise, and the ability to operate under real-world conditions. Real-time robotic perception demands not only high accuracy but also stability across varied lighting, weather, and scene compositions. Ensuring reliability in the field is as important as achieving strong benchmarks in the lab. As neuromorphic hardware matures, cross-disciplinary collaboration becomes essential: neuroscience informs the models of neural computation, electrical engineering advances the hardware, and computer vision supplies the practical perception tasks the designs must serve.
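The metrics listed above can be reported together from per-trial records. The sketch below shows one possible summary; the `Trial` fields, the units, and the sample numbers are hypothetical and do not come from any benchmark in the article.

```python
import math
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Trial:
    latency_ms: float   # time from input to decision
    energy_mj: float    # energy spent on this inference
    correct: bool       # whether the prediction matched the label


def summarize(trials: List[Trial]) -> Dict[str, float]:
    """Aggregate accuracy, latency (mean and nearest-rank p95), and energy."""
    latencies = sorted(t.latency_ms for t in trials)
    rank = max(1, math.ceil(0.95 * len(latencies)))  # nearest-rank percentile
    return {
        "accuracy": sum(t.correct for t in trials) / len(trials),
        "mean_latency_ms": mean(latencies),
        "p95_latency_ms": latencies[rank - 1],
        "mean_energy_mj": mean(t.energy_mj for t in trials),
    }


# Made-up measurements for illustration only.
trials = [
    Trial(latency_ms=2.0, energy_mj=1.0, correct=True),
    Trial(latency_ms=3.0, energy_mj=1.0, correct=True),
    Trial(latency_ms=4.0, energy_mj=2.0, correct=False),
    Trial(latency_ms=11.0, energy_mj=4.0, correct=True),
]
summary = summarize(trials)
print(summary)
```

Reporting a tail latency alongside the mean matters for robotics, since a single slow response can be the one that causes a collision. Robustness would be assessed by repeating the same summary under different lighting or noise conditions.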

Developers of neuromorphic systems also face challenges. Software development and programming models for neuromorphic hardware lag behind those for traditional processors. Creating tools that allow researchers and engineers to design, train, and deploy neuromorphic networks is critical for broader adoption. Moreover, benchmarking standards are needed to compare different hardware implementations fairly. Without common benchmarks, it is difficult to assess progress or determine which approaches best meet the demands of real-time robotic perception.

Another consideration is the integration of neuromorphic chips with existing robotic architectures. Perception is just one component of a robot’s pipeline, which includes planning, control, actuation, and higher-level cognition. Interoperability with conventional sensors, data formats, and software stacks must be addressed to realize the full potential of brain-inspired hardware. In some cases, neuromorphic accelerators may complement primary processing units, handling perception tasks while traditional processors handle planning and decision making. In other scenarios, end-to-end neuromorphic systems could emerge, though this requires substantial gains in accuracy and robustness.

The potential impact of neuromorphic vision extends beyond robotics. Edge AI applications—such as drones, autonomous vehicles, and smart sensors—stand to gain from on-device processing that reduces latency and conserves bandwidth. In healthcare, neuromorphic devices could enable real-time analysis of dynamic physiological signals with low power budgets. In industry and manufacturing, rapid perception can lead to more responsive automation and safety systems. The overarching theme is a shift toward hardware that mirrors the brain’s efficiency and adaptability, enabling more capable autonomous systems across a range of domains.

Despite the promise, practical deployment raises questions about long-term reliability, manufacturability, and cost. Analog neuromorphic components may be sensitive to manufacturing variations and environmental conditions, affecting consistency across devices. Digital approaches, while more robust, might not achieve the same energy efficiency as their analog counterparts. Balancing performance, reliability, and cost will be central to determining how quickly neuromorphic vision reaches widespread adoption in robotics.

Researchers are actively pursuing hybrid systems in which neuromorphic processors accelerate perception tasks within a broader robotic platform. Such designs may couple neuromorphic accelerators with conventional processors, GPUs, or specialized accelerators to handle diverse workloads. The goal is to construct heterogeneous systems that leverage the strengths of each technology: neuromorphic hardware for low-latency perception and energy efficiency, classical hardware for high-throughput numerical tasks, and AI software stacks for learning and adaptation.

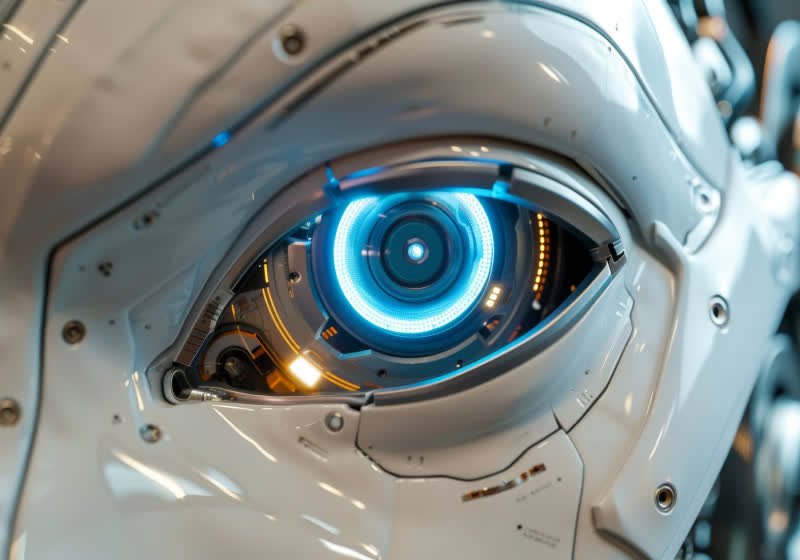

*Image source: Unsplash*

As these technologies evolve, collaboration between academia, industry, and standards bodies will be vital. Shared benchmarks, open datasets, and interoperable software frameworks can accelerate progress and reduce the risk of fragmentation. Open-source initiatives and joint research programs can help disseminate best practices, validate performance claims, and foster a more rapid cycle of iteration, testing, and deployment. In parallel, regulatory and safety considerations must keep pace with capability gains, particularly for autonomous systems operating in public or sensitive environments.

In summary, the development of brain-inspired chips for real-time robotic vision represents a strategic shift in how perception is implemented in autonomous systems. By integrating sensing, memory, and computation, neuromorphic hardware offers the prospect of faster, more energy-efficient perception that can operate at the edge without reliance on cloud-based processing. While challenges remain—ranging from software ecosystems to reliability and cost—the potential benefits for robotics, edge AI, and beyond make neuromorphic engineering a compelling area of ongoing research and practical exploration.

Perspectives and Impact

The trajectory of brain-inspired hardware sits at the intersection of neuroscience-inspired computation and pragmatic engineering. In robotics, perception is a bottleneck that constrains autonomy, safety, and efficiency. A neuromorphic approach addresses this bottleneck by delivering fast, low-power processing that aligns with the way information is sensed and transformed in real time. The capacity to process visual streams on-device reduces latency, which is critical in dynamic scenarios such as obstacle avoidance, multi-target tracking, and simultaneous localization and mapping (SLAM) under tight power budgets.

Beyond the technical advantages, neuromorphic hardware catalyzes new design paradigms for robots. Instead of siloed components that communicate over centralized buses, perception modules can be embedded within distributed, event-driven networks that respond directly to sensory input. This can lead to more modular and scalable robotic architectures, where perception, decision, and action occur in a more tightly coupled loop. In practice, this may yield robots that better cope with partial observability, varying illumination, or sensor failures, as local processing can maintain a degree of functionality even when connectivity to higher-level systems is compromised.

From an economic and industrial perspective, neuromorphic chips open avenues for deploying capable autonomous systems in environments where power and space are constrained. Drones, ground robots, and robotic assistants that rely on compact, energy-efficient vision systems can operate longer between charges, expanding mission durations and reducing operational costs. In addition, edge computing capabilities reduce dependence on remote data centers, improving resilience and responsiveness in critical applications such as industrial automation, public safety, and environmental monitoring.

The research ecosystem is likely to experience a shift toward more biology-inspired experimentation. Insights from neuroscience—such as synaptic plasticity, spike-timing-dependent plasticity, and neuromodulation—will inform learning rules that can be implemented in hardware. These rules must be translated into reliable, scalable circuit designs and software abstractions that practitioners can use to build and deploy perception models. The interplay between hardware constraints and algorithmic innovations will shape which neuromorphic approaches gain traction for specific robotics tasks.
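For readers unfamiliar with spike-timing-dependent plasticity (STDP), here is a minimal sketch of the common pair-based form with exponential windows. This is a textbook formulation, assumed for illustration; the amplitudes, time constants, and weight bounds are arbitrary, and hardware implementations typically use simplified or quantized variants.

```python
import math


def stdp_delta(dt_ms: float,
               a_plus: float = 0.01,
               a_minus: float = 0.012,
               tau_plus: float = 20.0,
               tau_minus: float = 20.0) -> float:
    """Weight change for one spike pair, where dt_ms = t_post - t_pre."""
    if dt_ms > 0:    # pre fired before post: strengthen the synapse
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:    # post fired before pre: weaken the synapse
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


def apply_stdp(weight: float, t_pre: float, t_post: float,
               w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Update a weight for one spike pair and clip it to [w_min, w_max]."""
    return min(w_max, max(w_min, weight + stdp_delta(t_post - t_pre)))


w = 0.5
w_causal = apply_stdp(w, t_pre=10.0, t_post=15.0)   # pre then post
w_acausal = apply_stdp(w, t_pre=15.0, t_post=10.0)  # post then pre
print(w_causal, w_acausal)
```

The rule is local: each update needs only the two spike times and the current weight, which is part of why it is attractive for circuits that store weights next to the neurons that use them.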

However, the road to widespread adoption is not without obstacles. Key challenges include device variability, long-term stability, and manufacturability at scale. Analog circuits, while offering energy advantages, are sensitive to process variations and environmental factors such as temperature. Ensuring consistent performance across production lots and operating conditions will require robust calibration methods, fault tolerance, and perhaps standardized module designs. Additionally, the software stack—from neuron models to training frameworks—must mature to reduce the cost and time required to bring neuromorphic solutions from research prototypes to commercial products.

Interdisciplinary collaboration will be essential. Engineers, neuroscientists, computer vision researchers, and industry partners must work together to define practical benchmarks, validate real-world performance, and establish safety and reliability standards. Public-private partnerships and consortia can help accelerate translational efforts, support pilot deployments, and foster the creation of open ecosystems that lower barriers to entry for startups and researchers alike.

In terms of future implications, the integration of neuromorphic vision with other cognitive modules—such as tactile sensing (haptics), proprioception, and higher-level reasoning—could yield more autonomous and resilient robotic systems. A holistic brain-inspired platform could enable end-to-end perception-to-action loops that are both energy-efficient and adaptable. As sensing modalities diversify, neuromorphic hardware may extend beyond vision to process auditory, tactile, and proprioceptive data in a cohesive, low-power framework, enhancing multimodal perception and coordination.

Ethical and societal considerations also accompany advances in autonomous perception. As robots become more capable in real time, questions about safety, accountability, and transparency gain prominence. Understanding how neuromorphic systems make perceptual decisions, and ensuring that their behavior can be audited and constrained within ethical guidelines, will be important for public trust and regulatory compliance. Developers should consider robust validation, fail-safe mechanisms, and clear communication about the capabilities and limitations of these systems.

Overall, brain-inspired chips stand to redefine how robots perceive the world. While the technology is not a universal substitute for all AI workloads, it provides a complementary pathway toward more capable, efficient, and robust edge intelligence. The ongoing evolution of neuromorphic hardware—driven by advances in materials, circuit design, neuron modeling, and learning algorithms—will shape the next generation of autonomous systems that can operate in real time, with limited energy, and in a wide range of real-world environments.

Key Takeaways

Main Points:

– Neuromorphic engineering integrates memory and computation to emulate brain-like processing for perception tasks.

– Brain-inspired chips can reduce latency and energy consumption, enabling real-time on-device robotics.

– Practical deployment requires mature software ecosystems, benchmarking standards, and reliability under diverse conditions.

Areas of Concern:

– Device variability and environmental sensitivity of analog components.

– Immature software toolchains and lack of universal benchmarks.

– Integration challenges with existing robotics architectures and safety considerations.

Summary and Recommendations

The development of brain-inspired neuromorphic chips represents a strategic approach to enhancing robotic perception by marrying sensing, memory, and computation in a single, brain-like hardware layer. This architecture promises substantial gains in latency reduction and energy efficiency, enabling real-time interpretation of visual data on the edge. Such capabilities are critical for autonomous robots operating without constant cloud connectivity or high-power processors, including delivery drones, service robots, and industrial automation systems.

To realize the broad potential of neuromorphic vision, stakeholders should prioritize several actions. First, invest in cross-disciplinary research that combines neuroscience insights with hardware engineering and computer vision to refine neuron models, learning rules, and circuit designs. Second, develop and adopt standardized benchmarks and open datasets to enable fair, apples-to-apples evaluation across different neuromorphic implementations. Third, advance software ecosystems that make it easier for engineers to design, train, and deploy neuromorphic networks on real hardware, including lightweight compilers and simulation tools. Fourth, pursue pilot deployments in constrained, real-world tasks to validate reliability, performance, and resilience in diverse environmental conditions. Finally, address safety, regulatory, and ethical considerations early, establishing transparency around capabilities and limitations while ensuring robust, fail-safe operation in autonomous systems.

If these steps are undertaken, neuromorphic vision could become a cornerstone technology for next-generation robotics and edge AI, enabling faster, more efficient perception that scales across devices and applications. The journey from lab breakthroughs to widespread impact will require sustained collaboration, investment, and careful attention to reliability, interoperability, and safety. With continued progress, brain-inspired chips could redefine how machines see and respond to the world in real time.

References

- Original: https://www.techspot.com/news/111316-brain-inspired-chip-helping-robots-see-faster-real.html

- Additional context: Benchmarking neuromorphic hardware performance; neuromorphic engineering reviews; real-time edge AI applications in robotics.
