TLDR

• Core Points: Metacritic removed an AI-generated Resident Evil Requiem review from VideoGamer after questions about authenticity.

• Main Content: The review appeared to be authored by a fake AI journalist, prompting scrutiny of content integrity on review platforms.

• Key Insights: AI-written content can mislead readers and undermine trust in gaming journalism; platforms may need stronger verification measures.

• Considerations: Verification processes, transparency about AI involvement, and editorial safeguards are essential for credible reviews.

• Recommended Actions: Platforms and outlets should implement AI-authorship disclosures and anti-fraud checks; readers should approach dubious reviews with caution.

Content Overview

The incident centers on Metacritic’s decision to remove a review of Resident Evil Requiem after determining that it was AI-generated. The piece had been published by VideoGamer, a UK-based gaming site, but scrutiny indicated that it did not originate from a real journalist. Although definitive proof of AI authorship is hard to establish, the language, structure, and overall execution of the review raised substantial red flags. The episode has drawn attention to broader concerns about the reliability of AI-generated content in game journalism and the potential for such material to mislead audiences.

Metacritic, a prominent review aggregator, has previously enforced policies to preserve the authenticity of reviews and to prevent manipulated or automated content from skewing scores and opinions. The removal in this case underscores ongoing tensions in the media ecosystem as AI tools become more capable of producing publishable text. For readers, the incident is a cautionary example of the need to verify sources and weigh the credibility of online reviews, particularly when they originate from outlets that publish a mix of traditional reporting and AI-generated material.

In-Depth Analysis

The controversy arose when a Resident Evil Requiem review surfaced on Metacritic, attributed to VideoGamer but apparently generated by AI rather than written by a human. The ethical and practical implications of AI-generated journalism are multifaceted. On one hand, AI can dramatically boost productivity, enabling faster turnaround times and broader coverage. On the other hand, it raises concerns about originality, accountability, and the risk of disseminating inaccuracies or biased assessments without human editorial oversight.

Several indicators pointed toward AI authorship in the VideoGamer review. While not all telltale signs are conclusive, patterns such as repetitive phraseology, unusual sentence constructions, abrupt shifts in tone, and a lack of nuanced critical insights are common features of many AI-generated texts. In gaming journalism, where subjective impressions, contextual knowledge, and hands-on experience shape reviews, the absence of genuine experiential detail becomes especially conspicuous. The AI-generated piece reportedly lacked authentic hands-on impressions and contained phrasing that felt more mechanical than reflective of a writer’s voice.

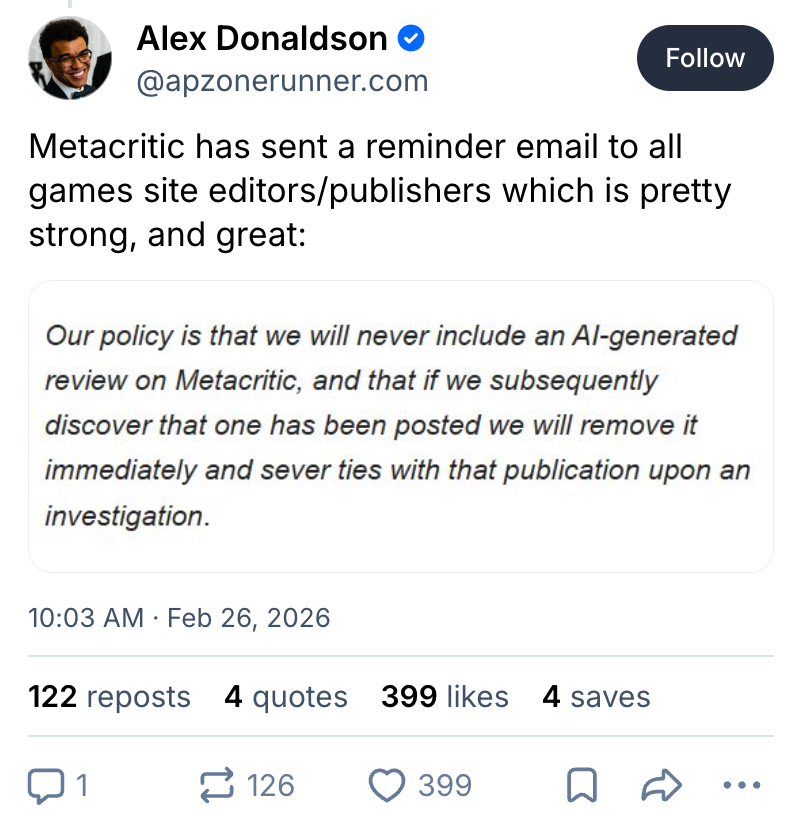

This incident triggered Metacritic’s moderation workflows. Review aggregators generally rely on a combination of user-submitted content, editorial oversight, and automated detection to ensure authenticity and filter out spam or inauthentic submissions. When a review is suspected to be AI-produced, platforms must balance transparency with the necessity of upholding community trust. In this case, Metacritic chose to remove the AI-generated review, signaling a firm stance against content that cannot be clearly attributed to a verified human author or verifiable editorial process.

The broader implications extend beyond a single review. As AI-generated content becomes more prevalent across media sectors, the integrity of reviews, critiques, and commentary hinges on transparent disclosure. Critics and readers increasingly expect that published opinions come with clear indications of authorship and the editorial standards governing the piece. For outlets, this means establishing robust editorial guidelines for AI-involved content, including disclosure of AI assistance, human oversight requirements, and quality-control measures.

The Resident Evil Requiem case also invites a discussion about the value and risks of AI-assisted journalism in niche entertainment domains. On the positive side, AI tools can help journalists process large volumes of information, compile data points, and draft initial versions that editors can refine. On the negative side, the ease of producing publishable text with AI heightens the potential for misrepresentation, especially in spaces where subjective judgments and experiential reporting are essential. Authenticity—rooted in credible authorship and transparent practices—remains a cornerstone of journalistic integrity.

From a reader’s perspective, this incident underscores the importance of critical consumption. When evaluating game reviews, readers can look for consistency in the reviewer’s voice, depth of hands-on analysis, and the presence of unique insights derived from genuine gameplay experience. Reviews that rely heavily on generic praise or criticism, or that lack specific references to mechanics, level design, narrative choices, or technical performance, may warrant extra scrutiny. Cross-referencing opinions across multiple outlets can also help readers form a more balanced view of a game like Resident Evil Requiem.

The technical and ethical layers of this event prompt several questions for the industry: How can platforms better detect AI-generated content before it reaches readers? What disclosure standards should outlets implement when AI tools contribute to a piece? How can editorial teams preserve the unique voice and expertise of human writers while harnessing the efficiencies of automation? And crucially, how can readers differentiate between trustworthy, human-authored reviews and AI-assisted pieces?

The decision to remove the AI-generated review aligns with a growing expectation that credible journalism should be transparently sourced and responsibly edited. It also highlights the ongoing evolution of content creation technologies and the regulatory or policy responses that communities may adopt as these technologies mature. While AI has the potential to augment reporting and analysis, the current consensus among major platforms and many outlets is that clear attribution and rigorous editorial oversight are essential to maintain trust with audiences.

For Resident Evil Requiem specifically, the episode does not require drawing sweeping conclusions about the game’s quality. Rather, it illuminates the broader ecosystem—where AI can produce content that appears legitimate but lacks the depth, nuance, and accountability associated with traditional journalism. In turn, it offers a cautionary tale about the importance of credibility, editorial standards, and the ongoing need for vigilance in distinguishing genuine critique from machine-generated text.

*Image source: Unsplash*

Perspectives and Impact

The incident’s impact extends beyond Metacritic and VideoGamer to influence expectations across the gaming press and digital media at large. As AI text-generation tools become more accessible, it is inevitable that some content will be produced without direct human authorship or explicit editorial involvement. This reality places additional pressure on platforms to evolve their policies and on publishers to embed safeguards that preserve the integrity of their content.

One key implication is the potential chilling effect on smaller outlets or freelancers who rely on AI to manage workloads. If readers and platforms demand airtight authenticity and robust verification, some publishers may invest more heavily in human editorial workflows to differentiate quality content. This could also spur the development of standardized disclosure practices for AI-assisted writing, similar to existing policies around sponsored content or affiliate links.

Another consideration is the audience’s media literacy. As AI-generated submissions become harder to distinguish from human-authored work, readers must become more discerning. This includes examining the depth of analysis, the writer’s demonstrated expertise, and the presence of verifiable data or firsthand experience. In game journalism, where impressions and subjective judgments are central, the credibility of the reviewer—rooted in transparent processes and accountability—becomes a defining feature of professional integrity.

From a developer and publisher perspective, incidents like this may encourage more direct engagement with communities through official channels, previews, and post-release analyses that showcase verified hands-on experiences. Building trust with fans might involve publishing detailed post-release write-ups, developer insight pieces, and clearly labeled AI-assisted content where appropriate, ensuring readers know what they are consuming and why it matters.

Policy-wise, some platforms might consider implementing AI-authorship indicators, similar to plagiarism checks or content-origin verification. This could include tagging sections generated by AI, requiring an explicit disclosure statement, or even implementing automated confidence scoring to assist editors in deciding whether a piece warrants human review. Over time, such measures could become standard practice across major review aggregators, news outlets, and entertainment sites.
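As a rough illustration of the automated confidence scoring mentioned above, the Python sketch below computes a triage score from two of the red flags this article describes: repetitive phrasing and a lack of specific gameplay references. Everything in it is an assumption made for illustration, including the `SPECIFIC_TERMS` vocabulary, the equal weights, and the `0.6` threshold. It is not Metacritic's or VideoGamer's method, it is not a reliable AI detector, and at best it could help an editor decide which pieces to read first.

```python
import re
from collections import Counter

# Hypothetical vocabulary: terms a hands-on review would plausibly mention.
SPECIFIC_TERMS = {"mechanics", "level", "puzzle", "combat", "framerate",
                  "inventory", "boss", "checkpoint", "controls", "performance"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def repeated_trigram_ratio(tokens: list[str]) -> float:
    """Share of three-word sequences that occur more than once."""
    trigrams = list(zip(tokens, tokens[1:], tokens[2:]))
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


def specificity(tokens: list[str]) -> float:
    """Fraction of the hypothetical vocabulary that appears at least once."""
    return len(SPECIFIC_TERMS & set(tokens)) / len(SPECIFIC_TERMS)


def triage_score(text: str) -> float:
    """Return a score from 0 to 1; higher means 'route to a human editor first'."""
    tokens = tokenize(text)
    repetition = min(repeated_trigram_ratio(tokens) * 2, 1.0)
    generic = 1.0 - specificity(tokens)
    # Equal weights are an arbitrary assumption, not a calibrated model.
    return round(0.5 * repetition + 0.5 * generic, 3)


def needs_human_review(text: str, threshold: float = 0.6) -> bool:
    """Flag a piece for editorial review when its score reaches the threshold."""
    return triage_score(text) >= threshold
```

A score like this should only ever prioritize human attention. A generic but genuine review could score high, and a carefully prompted AI text could score low, so the final judgment would still rest with an editor.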

For players and fans, the core takeaway is straightforward: credibility matters. When seeking information to inform purchasing decisions or gauge a game’s reception, audiences are best served by consulting multiple independent sources, looking for detailed, experience-based analysis, and paying attention to disclosures about content creation methods. The Resident Evil Requiem case offers a concrete example of why transparency is essential and why readers should remain vigilant in a landscape increasingly influenced by automation.

Key Takeaways

Main Points:

– Metacritic removed an AI-generated Resident Evil Requiem review published by VideoGamer.

– The AI-authored piece lacked the authenticity of a real hands-on, human-written critique.

– The episode highlights ongoing questions about AI usage, disclosure, and editorial oversight in game journalism.

Areas of Concern:

– Potential erosion of trust in review platforms if AI-authored content is not properly disclosed.

– Risk of readers being misled by convincingly written but unvetted AI-generated material.

– Need for robust verification processes and clear ethical guidelines across publishing platforms.

Summary and Recommendations

This episode serves as a lesson in the maturation of AI tools within journalism. While automation can streamline certain tasks and enable broader coverage, it cannot replace the value of human expertise, accountability, and transparent disclosure. For platforms, publishers, and readers alike, the priority should be safeguarding trust by establishing explicit AI-authorship policies, ensuring proper editorial oversight, and fostering a culture of critical engagement with online reviews.

Recommended actions include:

– Implementing clear disclosures for AI-assisted content, including the extent of AI involvement and the presence of human editing.

– Enhancing detection and moderation workflows to identify AI-generated material before publication.

– Encouraging outlets to publish hands-on, experience-driven reviews with verifiable gameplay details.

– Promoting media literacy among readers by offering guidance on evaluating reviews and recognizing red flags.

By adopting these approaches, the gaming press can navigate the evolving landscape of AI-assisted content while maintaining high standards of integrity and credibility.

References

- Original: https://www.engadget.com/ai/an-ai-generated-resident-evil-requiem-review-briefly-made-it-on-metacritic-194414929.html?src=rss

