TLDR

• Core Points: Broadcom pursues 2nm stacked silicon with vertical chip bonding to boost AI workloads through higher data transfer speeds and lower energy consumption.

• Main Content: The approach uses a vertically integrated two-chip stack to tightly couple silicon layers, aiming to improve efficiency for increasingly demanding AI tasks.

• Key Insights: Stacked 2nm architecture could deliver notable gains in performance-per-watt, vital for data centers and edge AI; success depends on manufacturing maturity and ecosystem support.

• Considerations: Manufacturing complexity, yield at 2nm, software and toolchain readiness, and competition from Nvidia and other chip designers.

• Recommended Actions: Monitor Broadcom’s process development milestones, assess potential ecosystem partnerships, and evaluate total cost of ownership versus competing architectures.

Content Overview

Artificial intelligence workloads have consistently pressed hardware designers to push the boundaries of efficiency and performance. As models grow larger and inference needs intensify, the industry has seen a variety of approaches to extract more capability from silicon while curbing energy use and cooling demands. Broadcom has signaled a strategic bet on 2nm technology through a stacked silicon design, aiming to rival Nvidia in AI acceleration. The concept centers on vertically integrating two silicon layers into a single, tightly coupled stack. By bonding these chips into one package, Broadcom intends to raise data transfer speeds between layers, reduce latency, and cut energy consumption. That combination could be particularly appealing for data centers running AI training and inference workloads, as well as for edge deployments where efficiency translates to lower operating costs and thermal output.

This initiative comes amid a broader industry push toward advanced process nodes and heterogeneous integration. The 2nm process node promises better performance per watt and higher density than its predecessors, but achieving these benefits at scale requires not only lithography and materials breakthroughs but also a mature ecosystem of design tools, design libraries, and supply chain reliability. Broadcom’s approach, stacked silicon with a tightly coupled interconnect, places it in a competitive space alongside Nvidia’s accelerators and other fabless players and foundries pursuing similar goals through advanced packaging and monolithic designs. The success of such efforts depends on multiple interdependent factors, including manufacturing yield, thermal management, software support, and the ability to deliver a compelling total cost of ownership.

In this article, we examine the rationale behind Broadcom’s 2nm stacked-silicon strategy, its potential implications for AI workloads, the challenges it faces in development and deployment, and the broader market context as Nvidia remains a dominant force in AI acceleration. We also consider what the technology landscape may look like as process nodes shrink, packaging techniques advance, and silicon architectures become more tightly integrated.

In-Depth Analysis

Broadcom’s proposed 2nm stacked silicon approach represents a deliberate shift from traditional single-die designs toward a vertically integrated paradigm. In a stacked configuration, two silicon dies are bonded together within a single package, so the layers communicate over short, dense vertical connections rather than longer package-level or board-level links. This tight coupling can substantially increase data transfer bandwidth and lower inter-die latency compared with conventional multi-chip configurations. The result is a potential reduction in energy per operation for AI workloads, where data movement often dominates power consumption.

The central value proposition hinges on several interconnected advantages:

• Enhanced bandwidth and reduced latency: By sharing a high-speed interconnect between stacked layers, Broadcom can accelerate data pathways essential for neural network operations. In AI tasks such as matrix multiplications, attention mechanisms, and sparse computations, transferring data quickly between memory and compute units translates into faster training and inference cycles.

• Improved energy efficiency: Data movement is a major power sink in AI accelerators. Stacked silicon can mitigate this by shrinking the distance data must travel and enabling more efficient communication protocols. The hope is that the overall energy per compute operation decreases, which is critical for sustaining larger models and serving latency-sensitive workloads in data centers.

• Density gains: Stacking allows more functionality within a given footprint, potentially increasing the computational density of AI accelerators. This can help data centers maximize throughput per rack while managing power and cooling costs.

• Ecosystem and software alignment: Beyond hardware, success requires corresponding software tooling, compiler optimizations, and libraries that exploit the stacked architecture. The broader AI software ecosystem will influence how quickly such hardware can be adopted and how effectively it can deliver real-world model performance improvements.
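The energy argument in the list above can be made concrete with a small model. The following is a minimal sketch, not a description of Broadcom's design: the `Link` type, the function names, and every numeric value (picojoules per bit, compute energy per operation, bytes moved per operation) are placeholder assumptions chosen only to show how the arithmetic works.

```python
from dataclasses import dataclass


@dataclass
class Link:
    """Hypothetical interconnect, described only by its energy cost per bit moved."""
    name: str
    picojoules_per_bit: float  # assumed value, for illustration only


def energy_per_op_pj(compute_pj: float, bytes_moved_per_op: float, link: Link) -> float:
    """Total energy per operation = compute energy + data-movement energy."""
    movement_pj = bytes_moved_per_op * 8 * link.picojoules_per_bit
    return compute_pj + movement_pj


def movement_share(compute_pj: float, bytes_moved_per_op: float, link: Link) -> float:
    """Fraction of the per-operation energy that is spent moving data."""
    total = energy_per_op_pj(compute_pj, bytes_moved_per_op, link)
    return (total - compute_pj) / total


# Placeholder figures: NOT measured values and NOT vendor specifications.
OFF_PACKAGE = Link("off-package link", picojoules_per_bit=5.0)
STACKED = Link("stacked die-to-die bond", picojoules_per_bit=0.5)

if __name__ == "__main__":
    compute_pj = 1.0          # assumed energy of one arithmetic operation
    bytes_moved_per_op = 0.5  # assumed average traffic per operation
    for link in (OFF_PACKAGE, STACKED):
        total = energy_per_op_pj(compute_pj, bytes_moved_per_op, link)
        share = movement_share(compute_pj, bytes_moved_per_op, link)
        print(f"{link.name}: {total:.1f} pJ/op, {share:.0%} spent moving data")
```

With these made-up inputs, the cheaper link lowers total energy per operation even though the compute energy is unchanged, which is the mechanism the article describes. Real ratios depend on the workload and on interconnect figures that have not been published in the source.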

However, the path to 2nm stacked silicon is fraught with challenges. Manufacturing at 2nm is still emerging, with stringent requirements for lithography, materials stability, and defect control. The yield of 2nm devices must be high enough to support commercially viable volumes, and the packaging processes for stacking must maintain reliability over the product’s lifetime. Thermal management is another critical concern; stacking increases heat density, necessitating innovative cooling solutions and thermal design to prevent thermal throttling that could negate performance gains.
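Why yield matters more for a stack than for a single die can be illustrated with the textbook Poisson yield model, Y = exp(-A * D0). The sketch below assumes independent failures and no pre-bond testing; the function names, die area, defect density, and bonding yield are hypothetical inputs, not data about any 2nm process.

```python
import math
from typing import Sequence


def poisson_die_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Poisson yield model: probability that a die of the given area has zero killer defects."""
    return math.exp(-area_cm2 * defects_per_cm2)


def stack_yield(die_yields: Sequence[float], bond_yield: float) -> float:
    """Yield of a bonded stack, assuming independent failures and no pre-bond screening.

    The stack is good only if every die is good AND the bonding step succeeds.
    """
    return bond_yield * math.prod(die_yields)


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    single = poisson_die_yield(area_cm2=1.0, defects_per_cm2=0.2)
    stacked = stack_yield([single, single], bond_yield=0.98)
    print(f"single die yield: {single:.3f}")
    print(f"two-die stack yield: {stacked:.3f}")
```

Because the per-die yields multiply, a blind two-die stack always yields worse than either die alone. Testing dies before bonding (the "known-good die" practice) changes this picture, which is one reason packaging flow matters as much as the process node itself.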

In addition to the hardware considerations, Broadcom faces competitive dynamics. Nvidia has established a strong lead in AI acceleration, particularly with its accompanying software ecosystem, libraries, and developer support. Any new stacked-silicon offering from Broadcom would need to demonstrate clear advantages not only in raw performance but also in operational efficiency, integration with existing AI pipelines, and total cost of ownership. Competing approaches in the field include advanced packaging techniques, multi-die modules, and monolithic 2D and 3D designs from other semiconductor players. Market acceptance will depend on a combination of performance metrics, reliability, and the ability to scale production to meet demand.

From a design perspective, the vertical bond between two chips implies careful consideration of interconnect technology, timing, power delivery, and heat transfer across the stack. Engineers must address potential issues such as increased parasitics, cross-talk, and mechanical stress that can occur in a stacked die configuration. Solutions may involve sophisticated through-silicon vias (TSVs), optimized interposer designs, and advanced thermal interfaces. The system must also maintain compatibility with existing software frameworks and machine learning workloads, ensuring that models can be ported or optimized to exploit the architecture’s strengths.
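The heat-transfer concern can be sketched with a lumped, steady-state thermal resistance model. This is a deliberately crude assumption: all heat is taken to leave through a heat sink on one side of the stack, and the names and resistance values are hypothetical rather than drawn from any real package.

```python
from dataclasses import dataclass


@dataclass
class StackTemps:
    near_die_c: float  # die adjacent to the heat sink
    far_die_c: float   # die buried beneath it


def stack_temperatures(
    ambient_c: float,
    r_sink_c_per_w: float,        # near die to ambient, via the heat sink
    r_interlayer_c_per_w: float,  # far die to near die, across the bond layer
    p_near_w: float,
    p_far_w: float,
) -> StackTemps:
    """Steady-state temperatures for a two-die stack with one-sided cooling.

    Heat from BOTH dies crosses the heat-sink resistance, while only the far
    die's heat crosses the interlayer resistance.
    """
    near = ambient_c + (p_near_w + p_far_w) * r_sink_c_per_w
    far = near + p_far_w * r_interlayer_c_per_w
    return StackTemps(near_die_c=near, far_die_c=far)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    temps = stack_temperatures(40.0, 0.1, 0.05, p_near_w=100.0, p_far_w=100.0)
    print(f"near die: {temps.near_die_c:.1f} C, far die: {temps.far_die_c:.1f} C")
```

Even in this simplified model the buried die always runs hotter than the die next to the heat sink, so which functions go on which layer becomes a thermal decision as well as a timing one.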

Strategically, Broadcom’s move can be viewed as part of a broader industry trend toward heterogeneous integration, where different functional blocks (compute, memory, and specialized accelerators) are integrated at the package or chiplet level. Such designs aim to balance the performance benefits of proximity with the flexibility of modularity, enabling more rapid iteration and customization for specific AI workloads. If successful, a 2nm stacked design could influence data-center infrastructure by delivering higher throughput with lower energy costs, potentially reducing operational expenses for AI workloads that run continuously at scale.

Yet, optimistic projections must be tempered by the realities of manufacturing maturity and market readiness. Achieving reliable, high-yield production at 2nm requires sustained investment across process development, supply chain resilience, and ecosystem partnerships. The transition from laboratory prototypes to production-grade devices with robust lifetimes and predictable performance is non-trivial. In parallel, Broadcom would need to align with customers’ procurement cycles, budget cycles, and performance targets, which can vary widely across hyperscale data centers, cloud providers, and enterprise AI deployments.

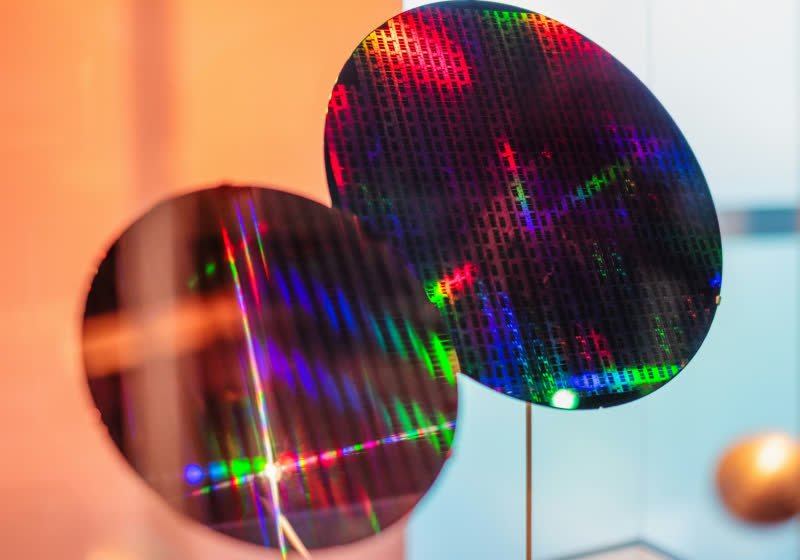

*Image source: Unsplash*

The broader AI hardware landscape is also evolving. Nvidia’s dominance in accelerator sales is complemented by a strong software and ecosystem offering, including libraries, frameworks, and developer tools widely adopted in AI research and production. Competing efforts at 2nm or alternative packaging approaches will require credible demonstrations of real-world performance improvements, not just theoretical gains. The pace at which customers adopt stacked-silicon solutions will depend on demonstrated reliability, a clear path to scalable manufacturing, and compelling total-cost-of-ownership advantages over existing architectures.
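A total-cost-of-ownership comparison of the kind mentioned above reduces to simple arithmetic once the inputs are known. The sketch below is an assumption-laden illustration: the function names are hypothetical, the cost model covers only purchase price and electricity (scaled by the data center's power usage effectiveness, PUE), and all figures are invented.

```python
HOURS_PER_YEAR = 365 * 24


def total_cost_of_ownership(
    purchase_usd: float,
    avg_power_kw: float,
    years: float,
    usd_per_kwh: float,
    pue: float = 1.0,
) -> float:
    """Purchase price plus electricity over the service life.

    PUE scales IT power up to facility power (cooling, power delivery losses).
    Maintenance, networking, and software costs are deliberately left out.
    """
    energy_kwh = avg_power_kw * pue * years * HOURS_PER_YEAR
    return purchase_usd + energy_kwh * usd_per_kwh


def cost_per_unit_throughput(tco_usd: float, relative_throughput: float) -> float:
    """Normalize TCO by delivered work so different accelerators can be compared."""
    return tco_usd / relative_throughput


if __name__ == "__main__":
    # Invented figures for two hypothetical accelerators.
    baseline = total_cost_of_ownership(30_000, 1.0, 4, 0.10, pue=1.3)
    candidate = total_cost_of_ownership(35_000, 0.7, 4, 0.10, pue=1.3)
    print(f"baseline:  {cost_per_unit_throughput(baseline, 1.0):,.0f} USD per unit of throughput")
    print(f"candidate: {cost_per_unit_throughput(candidate, 1.2):,.0f} USD per unit of throughput")
```

The point of normalizing by throughput is that a more expensive part can still win if it does more work per watt; whether any stacked design actually does so is exactly what customer pilots would need to show.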

Beyond the technical and market considerations, broader geopolitical and logistical factors can influence the trajectory of such advanced semiconductor initiatives. Supply chain continuity, access to advanced lithography tooling, and the availability of specialized materials are all critical to sustaining progress toward 2nm and beyond. Partnerships with foundries, equipment manufacturers, and material suppliers play a pivotal role in turning stacked-silicon concepts from prototypes into commercial products. Regulatory environments and export controls may also shape the pace and reach of deployment, particularly for cutting-edge technologies with strategic significance.

In summary, Broadcom’s 2nm stacked-silicon strategy embodies a forward-looking attempt to redefine AI acceleration through vertical integration and tight interconnects. If the engineering challenges can be overcome and the ecosystem can mature around the architecture, the approach holds the potential to deliver meaningful gains in performance-per-watt and data throughput, which are highly sought after in AI-focused data centers and edge deployments. However, the outcome remains contingent on manufacturing readiness, reliable yields, software enablement, and the ability to compete effectively with established players like Nvidia in both hardware capabilities and the accompanying software stack.

Perspectives and Impact

The potential impact of Broadcom’s 2nm stacked-silicon approach extends beyond a single product line. A successful implementation could influence how AI accelerators are designed and deployed across various segments, including hyperscale cloud providers, enterprise AI initiatives, and edge computing applications. The stacked architecture might enable more energy-efficient inference at scale, with cooling requirements and power budgets easing in high-density environments. In research settings, such hardware could accelerate experimentation with large models and complex architectures, potentially shortening development cycles for new AI techniques.

If Broadcom achieves strong yield and reliable performance, downstream effects could include revised procurement strategies within large AI-focused organizations. Data centers may consider repurposing or upgrading existing infrastructure to maximize the benefits of stacked-silicon accelerators, while equipment integrators and system manufacturers explore new packaging solutions that fully exploit the architecture’s bandwidth and low-latency characteristics. On the software side, compiler optimizations, kernel drivers, and model libraries would need to be adapted to the stacked model to fully realize the hardware’s advantages. This adaptation could spur closer collaboration between hardware vendors and software developers to deliver end-to-end performance improvements.

From a competitive standpoint, Nvidia and other players would monitor Broadcom’s progress closely. A credible, high-performance 2nm stacked-silicon solution could prompt competitors to escalate their own research into advanced packaging, chiplet architectures, and heterogeneous integration. The AI hardware market has historically rewarded innovations that deliver tangible performance and efficiency gains at scale, as these translate to measurable savings in energy consumption and operational costs for large AI deployments. In this context, Broadcom’s bet is a strategic move to diversify capabilities and respond to the escalating demands of AI workloads.

In the longer term, the success of stacked silicon at 2nm could influence broader technological ecosystems. The interconnect and packaging technologies developed for this approach might spill over into other high-performance computing domains, data communications, and specialized workloads that benefit from tightly integrated compute and memory. The lessons learned in reliability, manufacturability, and ecosystem coordination could inform subsequent generations of AI accelerators and general-purpose processors, pushing the industry toward more compact, energy-efficient, and capable systems.

However, it is essential to maintain realistic expectations. While stacked-silicon designs promise compelling advantages, they also introduce new layers of complexity that must be managed through meticulous engineering, rigorous testing, and strategic collaborations. The timeline for achieving commercial-scale readiness at 2nm is uncertain, and early-stage demonstrations may emphasize proof-of-concept performance rather than immediate market dominance. Stakeholders should watch for concrete milestones in process development, device yield, packaging innovations, and customer pilot programs before drawing conclusions about the technology’s immediate market impact.

Key Takeaways

Main Points:

– Broadcom is pursuing a 2nm stacked-silicon design featuring two bonded dies to enable high-speed data transfer and improved energy efficiency for AI workloads.

– The stacked approach aims to address data movement bottlenecks and power consumption common in AI training and inference at scale.

– Success depends on manufacturing maturity, reliable yields, and a robust, well-aligned software ecosystem to realize real-world performance gains.

Areas of Concern:

– Manufacturing challenges at 2nm, including yield, defect control, and thermal management in a stacked configuration.

– Competition from Nvidia and other players, plus the need for compelling total-cost-of-ownership advantages.

– The requirement for extensive software tooling, libraries, and developer support to fully exploit the architecture.

Summary and Recommendations

Broadcom’s strategic wager on 2nm stacked silicon represents a bold attempt to redefine AI acceleration through vertical integration and tightly coupled silicon layers. If successful, the approach could deliver meaningful gains in data throughput and energy efficiency, translating into lower operating costs for AI workloads and enabling denser, higher-performance data-center deployments. However, the path to commercialization is contingent on multiple challenging factors, including achieving high yields at 2nm, maintaining thermal viability in a stacked configuration, and assembling a supportive software ecosystem that enables developers to leverage the hardware effectively.

Investors and industry observers should monitor Broadcom’s progress across several milestones: process development advancements toward reliable 2nm production, demonstrations of die-to-die interconnect performance and thermal management, and early customer engagements or pilots that validate real-world benefits. In parallel, the company should prioritize partnerships with software tool developers and AI framework ecosystems to ensure the architecture is accessible and attractive to data-center operators. Given Nvidia’s entrenched market position, Broadcom’s success will likely hinge on delivering clear, tangible advantages in energy efficiency and sustained performance at scale, coupled with a compelling total-cost-of-ownership narrative.

In the broader context, Broadcom’s initiative contributes to the ongoing exploration of heterogeneous integration and advanced packaging as pathways to continued AI hardware growth. While the industry awaits more concrete results, a 2nm stacked-silicon solution, if realized, could influence future hardware design philosophies and deployment strategies for AI across various market segments.

References

- Original: https://www.techspot.com/news/111501-broadcom-bets-2nm-stacked-silicon-rival-nvidia-ai.html

