TLDR

• Core Points: Broadcom is pursuing 2nm stacked silicon with a vertically integrated design to accelerate AI workloads, aiming for higher data transfer speeds and lower energy consumption than traditional architectures.

• Main Content: The approach bonds two chips into a single stack to tightly couple silicon layers, enabling faster interconnects and improved efficiency for intensive AI tasks.

• Key Insights: Stacked silicon designs offer potential gains in performance-per-watt and throughput, but face manufacturing, yield, and ecosystem challenges in scaling to 2nm.

• Considerations: Competition with Nvidia; integration challenges; supply chain and fab readiness; software and compiler support for the new architecture.

• Recommended Actions: Monitor Broadcom and partner progress on 2nm fabrication, interposer or TSV-based stacking, and software ecosystem readiness; assess potential milestones and risk factors for AI workloads.

Content Overview

The race to accelerate artificial intelligence workloads has largely orbited around a few heavyweight players, with Nvidia at the forefront thanks to its CUDA ecosystem and specialized GPUs. Broadcom, traditionally known for semiconductors and communications infrastructure, has signaled a bold push into the high-end AI accelerator arena by betting on a 2-nanometer (2nm) stacked silicon approach. This technique links two chips into a single, vertically integrated stack, a strategy that could translate into tighter coupling, faster data transfer, and lower energy consumption—three critical attributes as AI models grow in complexity and scale.

In a stacked-silicon architecture, the primary emphasis is on interconnect efficiency and heat management. By bonding two wafers or dies in a precise, vertically integrated configuration, Broadcom aims to achieve higher bandwidth between layers, reduce parasitic losses, and improve overall energy efficiency. The immediate goal is to make AI training and inference pipelines more power-efficient while delivering the raw throughput required by modern large-scale models. The 2nm node itself implies significant transistor density gains and potential improvements in leakage control, both of which can contribute to better performance-per-watt metrics.

The broader context includes an industry trend toward advanced process nodes and innovative packaging techniques. Many competitors and collaborators in the AI compute space are pursuing multi-die, chiplet, and stacked solutions to overcome the limits of traditional monolithic designs. As AI workloads demand ever-higher compute capabilities, the market is watching how alternatives to conventional single-die accelerators—such as multi-chip modules, high-bandwidth interposers, and 3D-stacked silicon—will fare in terms of manufacturability, reliability, and software support.

This article examines Broadcom’s 2nm stacked-silicon strategy, the potential advantages it could deliver for AI workloads, and the challenges the company may face as it moves toward commercialization. It also situates Broadcom’s approach within the competitive landscape, characterized by Nvidia’s dominance in AI acceleration, and discusses the broader implications for the AI compute ecosystem, including ecosystem readiness, software adaptation, and supply-chain considerations.

In-Depth Analysis

Broadcom’s proposed 2nm stacked-silicon architecture hinges on vertically integrating two silicon layers into a single, cohesive package. This stacked configuration typically relies on bonding techniques that create a robust, high-density interconnect between the layers, enabling much faster data exchange than traditional 2D layouts. In practical terms, this can translate into higher memory bandwidth, lower latency, and reduced energy per operation. These factors are especially valuable for AI workloads that rely on rapid tensor operations, large weight matrices, and substantial data movement.

One of the primary technical rationales behind stacking is to shorten the physical distance data must travel between computational cores and memory or accelerator functions. By bringing these components into closer proximity, designers can cut interconnect losses and reduce the energy overhead associated with data movement. At the 2nm node, transistor density and switching efficiency are expected to improve, which could yield performance gains if the architecture can be efficiently wired and cooled.
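
The distance argument can be made concrete with a rough first-order model. The sketch below assumes that wire energy scales linearly with the number of bits and the path length; the function name `transfer_energy_joules` and every number in it (the per-bit energy coefficient, the path lengths, the traffic volume) are illustrative placeholders, not Broadcom figures.

```python
"""First-order sketch: energy spent moving data over a wire of a given length.

All numbers are illustrative assumptions, not measured or vendor figures.
"""


def transfer_energy_joules(bytes_moved: float, distance_mm: float,
                           pj_per_bit_per_mm: float) -> float:
    """Energy in joules, assuming cost scales linearly with bits and distance."""
    bits = bytes_moved * 8
    return bits * distance_mm * pj_per_bit_per_mm * 1e-12


if __name__ == "__main__":
    traffic_bytes = 1e12   # hypothetical: 1 TB moved during a workload
    coeff = 0.1            # hypothetical energy cost in pJ per bit per mm

    # Assumed path lengths: 10 mm lateral route vs. 0.1 mm vertical route.
    planar = transfer_energy_joules(traffic_bytes, 10.0, coeff)
    stacked = transfer_energy_joules(traffic_bytes, 0.1, coeff)

    print(f"planar path:  {planar:.3f} J")
    print(f"stacked path: {stacked:.5f} J")
    print(f"ratio:        {planar / stacked:.0f}x")
```

Under a purely linear model the energy ratio equals the ratio of path lengths, so the result depends entirely on the assumed distances. Real links also pay fixed driver and receiver costs that this sketch ignores.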

Thermal management remains a central challenge for stacked architectures. The heat generated by two densely packed layers can be difficult to dissipate, particularly under sustained AI training sessions that push hardware to its limits. Broadcom’s strategy likely includes advanced cooling solutions and potentially novel packaging designs to ensure reliability and maintain performance over time. Achieving favorable thermal characteristics is essential if the stacked design is to offer meaningful advantages over existing GPU-centric or ASIC-based AI accelerators.

Interconnect technology is another critical area. Stacked designs rely on high-bandwidth, low-latency communication channels between the layers, often implemented with through-silicon vias (TSVs) or similar vertical interconnect schemes. The efficiency and reliability of these interconnects determine the practical throughput improvements and energy savings possible with stacked silicon. If Broadcom can optimize these interconnects at 2nm, the resulting acceleration could enable more efficient large-scale AI inference and training pipelines, potentially reducing operational costs for data centers and edge deployments.
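
A back-of-the-envelope calculation shows why the density of vertical connections matters so much for inter-layer bandwidth. This is a minimal sketch that assumes connections sit on a regular square grid; the function names, the pitch values, the per-connection data rate, and the fraction of connections carrying data are all hypothetical inputs chosen for illustration.

```python
"""Sketch: aggregate inter-layer bandwidth from connection pitch.

Assumes a square grid of vertical connections. All inputs are
illustrative placeholders, not figures from Broadcom or any foundry.
"""


def connections_per_mm2(pitch_um: float) -> float:
    """Connections per square millimetre on a square grid with the given pitch."""
    per_side = 1000.0 / pitch_um   # connections along one 1 mm edge
    return per_side * per_side


def aggregate_bandwidth_gbps(area_mm2: float, pitch_um: float,
                             gbps_per_connection: float,
                             signal_fraction: float) -> float:
    """Total bandwidth in Gbit/s across the bonded area.

    signal_fraction is the share of connections that carry data;
    the rest are assumed to be used for power and ground.
    """
    usable = area_mm2 * connections_per_mm2(pitch_um) * signal_fraction
    return usable * gbps_per_connection


if __name__ == "__main__":
    for pitch in (40.0, 10.0, 5.0):   # hypothetical pitches in micrometres
        bw = aggregate_bandwidth_gbps(area_mm2=100.0, pitch_um=pitch,
                                      gbps_per_connection=1.0,
                                      signal_fraction=0.5)
        print(f"pitch {pitch:>4.0f} um -> {bw / 8000:.0f} GB/s")
```

Because connection count grows with the inverse square of the pitch, halving the pitch quadruples the available connections over the same area, which is why finer bonding pitch is a central lever for stacked designs.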

From a competitive standpoint, Nvidia remains the dominant force in AI acceleration through its CUDA ecosystem and mature software stack. Nvidia has built a broad developer community, robust libraries, and compiler optimizations that are tightly coupled with its hardware. Broadcom’s entry with a stacked 2nm approach will require not only hardware performance but also a workable software ecosystem—compilers, libraries, and toolchains that can extract the theoretical gains from the hardware and integrate smoothly with existing AI workloads and frameworks.

The journey from concept to production involves several milestones and risks. Fabrication at the 2nm scale is still a frontier area, with manufacturing yield, process stability, and defect rates representing potential bottlenecks. Stacked silicon adds another layer of complexity, including precise die-to-die alignment, bonding strength, and long-term reliability across thermal cycles. Broadcom and its manufacturing partners would need to demonstrate consistency, high yield, and cost-effectiveness to justify the move from pilot tests to mass production.
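
The way stacking compounds yield risk can be illustrated with a simple model. The sketch below uses the Poisson defect model, a common first-order approximation for die yield, and assumes the two dies are bonded without being screened first. The defect density, die areas, and bond yield are hypothetical values, not data for any real 2nm process.

```python
"""Sketch: how die yield and bond yield compound in a two-die stack.

Uses the Poisson yield approximation Y = exp(-A * D0). All inputs are
illustrative assumptions, not figures for any real process.
"""
import math


def poisson_die_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of defect-free dies under the Poisson model."""
    return math.exp(-area_cm2 * defects_per_cm2)


def stack_yield(top_yield: float, bottom_yield: float, bond_yield: float) -> float:
    """Yield of a two-die stack when unscreened dies are bonded together."""
    return top_yield * bottom_yield * bond_yield


if __name__ == "__main__":
    d0 = 0.2                                   # hypothetical defects per cm^2
    top = poisson_die_yield(1.0, d0)           # hypothetical 1.0 cm^2 top die
    bottom = poisson_die_yield(1.5, d0)        # hypothetical 1.5 cm^2 bottom die
    combined = stack_yield(top, bottom, bond_yield=0.95)

    print(f"top die yield:    {top:.3f}")
    print(f"bottom die yield: {bottom:.3f}")
    print(f"stack yield:      {combined:.3f}")
```

Testing dies before bonding removes the two die-yield factors from the assembled-stack yield, although the cost of the discarded dies remains. The bond-yield term applies either way, which is why bonding reliability is a distinct risk from process yield.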

Software implications are equally consequential. The architecture’s success depends on mature support for the hardware in popular AI frameworks, including optimized kernels, memory management strategies for stacked designs, and the ability to partition workloads efficiently across the stacked layers. Without robust software support, even a technically superior silicon stack may underperform relative to established platforms with well-supported ecosystems.

Broadcom’s strategy also reflects a broader industry shift toward heterogeneous computing, where accelerators of varying architectures coexist and collaborate to handle different parts of an AI workload. A successful 2nm stacked silicon approach could be deployed not only as a standalone accelerator but also as a component within larger compute ecosystems, contributing to hybrid configurations that balance throughput, latency, and energy efficiency across training and inference tasks.

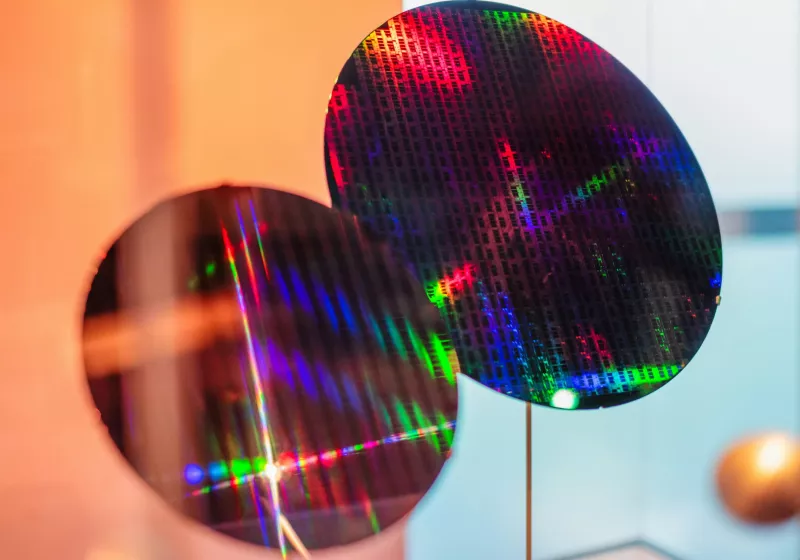

*Image source: Unsplash*

The economics of 2nm production will also play a decisive role. The cost per transistor, die yield rates, and packaging complexity all influence the total cost of ownership. If Broadcom can achieve attractive performance-per-watt metrics at a lower total cost than competing solutions, it could gain traction in both hyperscale data centers and edge environments. However, competitive pressure from established players, supply-chain dynamics, and the need for a robust software ecosystem could slow adoption or limit the pace of rollout.
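
The relationship between yield, performance per watt, and total cost of ownership can be sketched with a few lines of arithmetic. The model below is deliberately simplified: it treats ownership cost as hardware cost plus electricity, scaled by a facility overhead factor (PUE). All function names and input values are hypothetical and chosen only to show how the terms interact.

```python
"""Sketch: simplified accelerator economics.

Total cost is modeled as hardware cost plus energy cost only.
All inputs are illustrative assumptions, not market data.
"""


def cost_per_good_unit(unit_cost: float, yield_fraction: float) -> float:
    """Effective cost of one working unit after yield loss."""
    if not 0.0 < yield_fraction <= 1.0:
        raise ValueError("yield_fraction must be in (0, 1]")
    return unit_cost / yield_fraction


def perf_per_watt(throughput: float, power_watts: float) -> float:
    """Throughput delivered per watt, in whatever unit throughput uses."""
    return throughput / power_watts


def total_cost_of_ownership(hardware_cost: float, power_kw: float,
                            hours: float, price_per_kwh: float,
                            pue: float = 1.0) -> float:
    """Hardware cost plus energy cost over the operating period."""
    return hardware_cost + power_kw * pue * hours * price_per_kwh


if __name__ == "__main__":
    hours = 3 * 365 * 24   # hypothetical three-year service life

    # Hypothetical accelerator A: cheaper to build, draws more power.
    tco_a = total_cost_of_ownership(cost_per_good_unit(8000.0, 0.8),
                                    power_kw=1.0, hours=hours,
                                    price_per_kwh=0.10, pue=1.3)
    # Hypothetical accelerator B: lower yield, draws less power.
    tco_b = total_cost_of_ownership(cost_per_good_unit(8000.0, 0.6),
                                    power_kw=0.7, hours=hours,
                                    price_per_kwh=0.10, pue=1.3)

    print(f"accelerator A TCO: {tco_a:,.0f}")
    print(f"accelerator B TCO: {tco_b:,.0f}")
```

The point of the comparison is that a yield penalty raises the up-front cost while an efficiency gain lowers the running cost, so which design wins depends on service life, energy price, and utilization rather than on either factor alone.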

In summary, Broadcom’s pivot to 2nm stacked silicon embodies a strategic bet on next-generation packaging and process technology to deliver AI acceleration with superior performance-per-watt. The approach leverages vertical integration of two silicon layers to shorten data paths and increase inter-layer bandwidth, with the ultimate aim of improving efficiency for AI workloads. The potential benefits are substantial, but the execution hinges on breakthroughs in manufacturing yield, thermal management, interconnect reliability, and, critically, software ecosystem readiness. As Broadcom navigates these challenges, the AI compute landscape will closely watch how this stack-based paradigm competes with and complements established platforms, including Nvidia’s GPUs and software stack.

Perspectives and Impact

The move toward 2nm stacked silicon by Broadcom is emblematic of a broader industry trend: the convergence of advanced process nodes with sophisticated packaging and 3D integration to push beyond the limitations of planar, single-die architectures. If successful, Broadcom’s stacked approach could alter the economics of AI compute by delivering higher performance per watt and potentially lower total cost of ownership for certain workloads. This, in turn, could influence data-center design choices, energy efficiency standards, and the CAPEX/OPEX calculus for enterprises building AI infrastructure.

One potential impact area is data-center cooling and power provisioning. As AI models scale to trillions of parameters and inference increasingly moves to edge environments, the demand for energy-efficient accelerators increases. A solution that can deliver more compute per watt would help operators manage power budgets and reduce cooling burdens, potentially enabling denser, more cost-effective clusters. However, if stacking introduces thermal challenges that offset efficiency gains, the net advantage could be reduced. The reliability of long-term operation under varied workloads will be critical for enterprise adoption.

From a supply-chain perspective, the 2nm node remains a frontier technology. The ability to source wafers, manage yields, and maintain consistent manufacturing timelines will influence Broadcom’s time-to-market and cost structure. The involvement of semiconductor foundries and the ecosystem of subcontractors for packaging, testing, and validation will determine how quickly Broadcom can move from pilot production to large-scale deployment. Any delays in fab capacity or packaging capabilities could ripple through product calendars and customer planning.

The importance of the software dimension cannot be overstated. For AI accelerators to achieve broad adoption, developers need reliable, highly optimized software stacks. This includes CUDA-like or alternative programming models, optimized libraries for dense and sparse AI operations, and tooling for profiling and debugging on stacked architectures. Broadcom’s success will depend on partnerships with software vendors, adherence to open standards where applicable, and a clear migration path for developers already invested in Nvidia-centric tooling. Without a robust software ecosystem, hardware gains risk being underutilized.

Broadcom’s strategy may also influence collaboration across the AI hardware ecosystem. The industry’s trajectory includes a mix of dedicated AI accelerators, general-purpose GPUs, and increasingly sophisticated chiplet and 2.5D/3D integration approaches. If Broadcom’s 2nm stack proves compelling, it might encourage more players to experiment with vertical stacking and interconnect innovations, potentially accelerating the pace of packaging advances and the adoption of new manufacturing techniques. Conversely, if the implementation proves too complex or costly, the industry could see a reversion to more incremental improvements on established architectures.

In terms of consumer impact, direct effects are likely to be limited in the near term. AI accelerators are predominantly deployed in data centers, cloud platforms, and enterprise AI workflows. However, the ripple effects—such as reduced energy costs, improved latency for AI-driven services, and opportunities for edge AI deployments with higher efficiency—could eventually influence the performance and pricing of AI-enabled applications.

Ultimately, the success of Broadcom’s 2nm stacked silicon initiative will hinge on a combination of technical execution, manufacturing feasibility, and the market’s readiness to adopt a new architectural paradigm. If Broadcom can demonstrate clear, sustained advantages in performance-per-watt for representative AI workloads and provide a compelling software toolkit, the company could establish a credible alternative to Nvidia’s GPU-dominated approach. The broader AI compute landscape would then be characterized by richer options for data-center design, with hardware diversity enabling more finely tuned cost-performance configurations for different AI applications.

Key Takeaways

Main Points:

– Broadcom is pursuing a 2nm stacked-silicon design that bonds two silicon layers into a single package to enhance AI compute efficiency.

– The strategy focuses on increased data transfer speeds between stacked layers and reduced energy consumption, addressing AI workloads’ growing demands.

– Success depends on manufacturing viability at 2nm, effective thermal management, robust interconnects (TSVs or similar), and a strong software ecosystem.

Areas of Concern:

– Manufacturing yield and cost at the 2nm node, along with packaging complexities for stacked silicon.

– Thermal management challenges inherent to dense, multi-layer designs.

– Software ecosystem readiness, including compilers, libraries, and tooling compatible with stacked architectures.

Summary and Recommendations

Broadcom’s move into 2nm stacked silicon represents a high-stakes bet on next-generation packaging and process technology to deliver a step-change in AI acceleration metrics. If the company can surmount manufacturing, thermal, interconnect, and software hurdles, the stacked approach could offer meaningful gains in performance-per-watt and inter-layer throughput, potentially redefining power and cost dynamics in AI data centers. However, the path to mass production is fraught with risks tied to yield, reliability across thermal cycles, and ecosystem maturation. Stakeholders should monitor Broadcom’s progress on fabrication partnerships, bonding reliability, interconnect performance, and the development of a supportive software stack that enables developers to exploit the architecture’s advantages. The broader market implications will hinge on whether Broadcom can translate architectural promise into real-world, scalable deployments that compete effectively with Nvidia’s established GPU-centric solutions.

References

- Original: https://www.techspot.com/news/111501-broadcom-bets-2nm-stacked-silicon-rival-nvidia-ai.html

