TLDR

• Core Points: Replacing bespoke glue code with standardized, context-aware model protocols to streamline AI integrations.

• Main Content: Transition from custom connectors to context-driven interfaces that enable reliable, scalable LLM interactions with databases, APIs, and tools.

• Key Insights: Model Context Protocol (MCP) offers consistent semantics, improved security, and easier governance for AI-powered ecosystems.

• Considerations: Adoption requires tooling, safety controls, and interoperability standards across platforms.

• Recommended Actions: Invest in MCP-enabled architectures, embrace standardized prompts and contexts, and phase out ad-hoc glue code where possible.

Content Overview

The software industry has long grappled with integration complexity. For decades, connecting disparate systems relied on custom code: bespoke connectors, one-off adapters, and maintenance-intensive bridges that demanded deep, ongoing engineering effort. The AI era has inherited a parallel set of challenges. Large language models (LLMs) must converse with databases, APIs, and business tools, yet each integration often resembles a fresh, unique project—requiring new glue code, bespoke data transformations, and specialized error handling. This fragmentation can slow deployment, increase risk, and hinder the scalable adoption of AI across the enterprise.

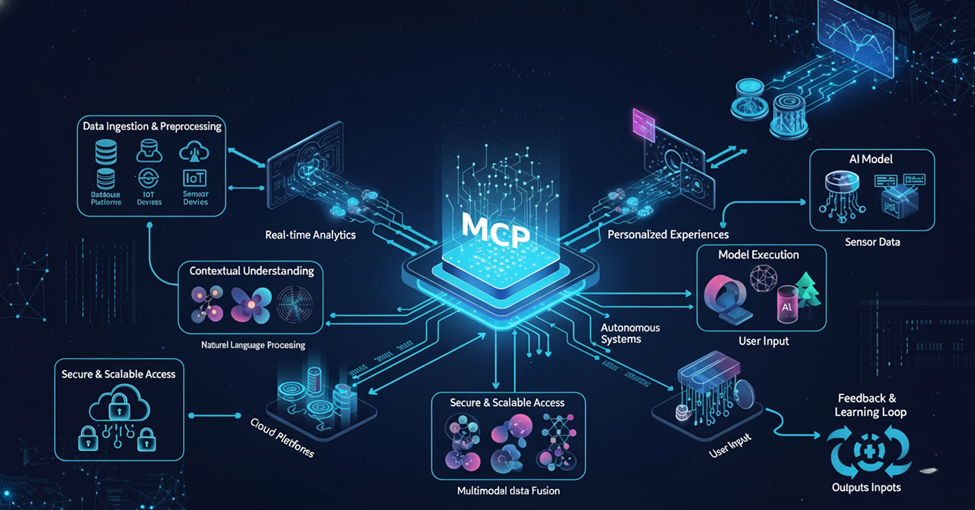

To address these issues, a paradigm shift is emerging: a standardized, protocol-based approach to AI integration centered on model context. Rather than trying to recreate every API in the model’s internal reasoning, developers are increasingly structuring inputs, intents, and context in a uniform way that LLMs can reason about reliably. This approach aims to reduce repetitive engineering work, improve predictability, and provide a foundation for governance, security, and auditability in AI-enabled workflows. The Model Context Protocol (MCP), an open standard introduced by Anthropic in late 2024, is part of a broader movement toward API-like consistency for AI interactions, an evolution that could redefine how applications are built, tested, and maintained in an AI-first environment.

In-Depth Analysis

The core problem with traditional AI integration lies in the bespoke nature of glue code. When LLMs need to fetch data, trigger actions, or orchestrate business processes, developers must create connectors that translate between the model’s reasoning and the target system’s interface. Each connector is exposed to version drift, varying data schemas, and evolving security requirements. As the number of integrations grows, so does the complexity of testing, monitoring, and updating them. The result is a brittle landscape where the cost of adding new capabilities often outweighs the business value they deliver.

The Model Context Protocol offers a different approach. Instead of attempting to replicate every API and data model inside the LLM, MCP defines a standardized set of elements that describe the current context, user intent, data provenance, constraints, and operational boundaries in which the model operates. With these aspects encoded in a structured context, the model can reason about which actions are permissible, which data is accessible, and how to interpret responses. This context-driven method decouples the model’s internal reasoning from the specifics of each external system, which makes behavior more predictable and integrations easier to manage.
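To make the idea of a structured context concrete, here is a minimal sketch in Python. The class name `ModelContext` and all of its fields are illustrative assumptions chosen to mirror the elements named above (intent, provenance, permissible actions, accessible data); they are not taken from any published specification.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelContext:
    """Hypothetical context block; every field name here is an assumption."""

    user_intent: str
    data_provenance: str
    allowed_actions: frozenset = field(default_factory=frozenset)
    accessible_datasets: frozenset = field(default_factory=frozenset)

    def permits(self, action: str, dataset: str) -> bool:
        """Return True only if both the action and the dataset are in scope."""
        return (
            action in self.allowed_actions
            and dataset in self.accessible_datasets
        )


# Example context for a read-only reporting task (made-up values).
ctx = ModelContext(
    user_intent="summarize last quarter's orders",
    data_provenance="warehouse.orders (read replica)",
    allowed_actions=frozenset({"read"}),
    accessible_datasets=frozenset({"orders"}),
)
```

Because the dataclass is frozen, the boundaries cannot be widened after the context is built, which is one simple way to keep the model's operating scope explicit.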

Key benefits of MCP include:

- Consistency and predictability: A uniform protocol reduces the volatility that comes with ad-hoc integrations. Developers know how to design prompts and context blocks, and operators gain clearer expectations about model behavior across different tasks.

- Security and governance: MCP emphasizes explicit constraints, data access controls, and traceable decision rationales. This makes it easier to enforce policy compliance, perform audits, and mitigate risks associated with AI actions.

- Interoperability and reuse: When multiple systems rely on the same context schema, components can be reused, upgraded, or swapped with minimal disruption. This modularity accelerates development and lowers long-term maintenance costs.

- Observability and debugging: Structured context provides a richer signal for monitoring and tracing model decisions. Engineers can diagnose issues more efficiently by inspecting the context that guided a given action.

Nevertheless, adopting MCP is not a silver bullet. Real-world implementation requires a comprehensive strategy that addresses tooling, education, and ecosystem alignment. Organizations must invest in:

- Defining the standard: The standard must specify the exact fields that constitute context, including user identity, data provenance, temporal constraints, permission scopes, and outcome expectations.

- Tooling and runtimes: Developers need libraries, SDKs, and runtime environments that support context construction, validation, and safe execution of model-driven actions.

- Validation and testing: Testing MCP-based integrations involves not only validating inputs and outputs but also verifying that the model adheres to context constraints under diverse scenarios.

- Collaboration across vendors: For MCP to achieve mass adoption, interoperability across cloud providers, database systems, and toolchains is essential. This requires industry collaboration and potentially open standards governance.
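The "defining the standard" and "validation" items above can be sketched as a small context validator. The field names and types in `REQUIRED_FIELDS` are assumptions derived from the list (user identity, data provenance, temporal constraints, permission scopes, outcome expectations), not fields from a real schema.

```python
# Hypothetical required fields and their expected Python types.
REQUIRED_FIELDS = {
    "user_id": str,
    "data_provenance": str,
    "expires_at": float,        # temporal constraint as a Unix timestamp
    "permission_scopes": list,
    "expected_outcome": str,
}


def validate_context(context: dict) -> list:
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in context:
            errors.append(f"missing field: {name}")
        elif not isinstance(context[name], expected_type):
            errors.append(
                f"wrong type for {name}: expected {expected_type.__name__}"
            )
    return errors
```

Returning a list of problems, rather than raising on the first one, lets a test suite or CI check report every violation in a single pass.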

The shift toward MCP also invites a broader discussion about the role of AI in software delivery. If models operate within well-defined contexts, human-in-the-loop oversight can focus on higher-level strategy and risk management rather than micromanaging each integration detail. This could free engineers to focus on building robust data ecosystems, improving model performance, and delivering faster value to users.

From a security perspective, a well-engineered MCP framework can reduce attack surfaces. By enforcing explicit data access boundaries and auditable actions, organizations can limit what models can do and quickly identify anomalous behavior. Context-driven governance can also simplify regulatory compliance, especially in sectors with stringent data handling and operation requirements.
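One way to picture explicit boundaries plus auditable actions is a gate that checks every requested action against an allowlist and records the decision either way. `ActionGate` and `PolicyViolation` are hypothetical names for this sketch, and the in-memory list stands in for a real audit store.

```python
from datetime import datetime, timezone


class PolicyViolation(Exception):
    """Raised when a requested action is outside the allowed set."""


class ActionGate:
    """Checks actions against an allowlist and keeps an audit trail."""

    def __init__(self, allowed_actions):
        self.allowed_actions = set(allowed_actions)
        self.audit_log = []  # stand-in for durable audit storage

    def execute(self, action, handler, **kwargs):
        allowed = action in self.allowed_actions
        # Record the attempt before acting, so denials are auditable too.
        self.audit_log.append({
            "action": action,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not allowed:
            raise PolicyViolation(f"action not permitted: {action}")
        return handler(**kwargs)
```

Logging denied attempts alongside permitted ones is what makes anomalous behavior visible: a spike in denials is itself a signal worth reviewing.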

However, there are also potential risks to consider. Over-reliance on standardized contexts might constrain model creativity or lead to underutilization of model capabilities if contexts are too restrictive. There is also the challenge of keeping context definitions up to date with evolving APIs, data schemas, and business rules. A balance must be struck between defined constraints and flexible, adaptive reasoning that leverages the model’s strengths.

Implementing MCP at scale will require thoughtful architectural decisions. Teams may adopt a layered approach:

- Layer 1: Data and action boundaries. Clearly delineate what data the model can access and which actions it can trigger, with strict permission checks and audit trails.

- Layer 2: Context specification. Define comprehensive, machine-readable context blocks that capture user intent, constraints, data provenance, and expected outcomes.

- Layer 3: Orchestration and adapters. Build lightweight adapters that translate between external systems and the MCP context, avoiding deep coupling between the model and each service.

- Layer 4: Observability and governance. Instrument integrations with telemetry, validation, and policy enforcement to support visibility and compliance.

- Layer 5: Human oversight. Maintain governance processes for exceptions, risk review, and escalation when model decisions fall outside predefined boundaries.
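As an illustration of Layer 3, the sketch below shows a registry of thin adapters behind one uniform request shape. The `name`/`arguments` request keys, the `AdapterRegistry` class, and the fake order data are all assumptions made for the example; a real deployment would call actual service clients inside each adapter.

```python
from typing import Any, Callable, Dict

Adapter = Callable[[Dict[str, Any]], Any]


class AdapterRegistry:
    """Maps uniform tool names to thin, system-specific adapter functions."""

    def __init__(self) -> None:
        self._adapters: Dict[str, Adapter] = {}

    def register(self, name: str, adapter: Adapter) -> None:
        self._adapters[name] = adapter

    def dispatch(self, request: Dict[str, Any]) -> Any:
        name = request["name"]
        if name not in self._adapters:
            raise LookupError(f"no adapter registered for {name!r}")
        return self._adapters[name](request.get("arguments", {}))


# Stand-in for a real warehouse client (hypothetical data).
_FAKE_ORDERS = {"A-1": {"status": "shipped"}}


def get_order_status(arguments: Dict[str, Any]) -> str:
    return _FAKE_ORDERS[arguments["order_id"]]["status"]


registry = AdapterRegistry()
registry.register("get_order_status", get_order_status)
result = registry.dispatch(
    {"name": "get_order_status", "arguments": {"order_id": "A-1"}}
)
```

The caller only ever sees the uniform request shape, so swapping the warehouse backend means replacing one adapter function rather than touching the model-facing side.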

A practical path to MCP adoption often begins with guiding principles and pilot projects. Organizations can start by selecting a limited set of repeatable tasks—such as data retrieval from a central warehouse or triggering a standardized workflow in a workflow engine—and design MCP-based interfaces around them. As teams gain confidence, they can expand to more complex scenarios, gradually broadening the scope of MCP coverage while refining the standard.

Interoperability remains a central challenge. Different vendors may have varied data formats, authentication models, and API semantics. To maximize value, MCP implementations should be designed with compatibility in mind—supporting common authentication patterns, robust data typing, versioning, and backward compatibility.
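Versioning and backward compatibility can be checked mechanically. The sketch below assumes a `major.minor` version scheme in which a server is compatible with a client when the major versions match and the server's minor version is at least the client's. That scheme is an assumption for illustration; an actual protocol may identify and negotiate versions differently.

```python
def parse_version(version: str) -> tuple:
    """Split a 'major.minor' string into a pair of integers."""
    major, minor = version.split(".")[:2]
    return int(major), int(minor)


def is_compatible(client: str, server: str) -> bool:
    """Same major version, and the server is at least as new as the client."""
    client_major, client_minor = parse_version(client)
    server_major, server_minor = parse_version(server)
    return client_major == server_major and server_minor >= client_minor
```

For example, under this rule a client written against `1.2` would work with a `1.4` server, but not with a `2.0` server.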

The broader implications of MCP extend beyond technical convenience. By providing a stable abstraction layer for AI-driven tasks, MCP can influence organizational structure and talent development. Demand may grow for skilled “integration engineers” who excel at defining context schemas, validating data lineage, and crafting governance policies. In this new regime, successful AI adoption hinges as much on systems thinking and process design as on model capabilities.

*Image source: Unsplash*

In summary, MCP represents a promising pathway to reduce integration chaos in the AI era. It proposes a disciplined, context-first approach that aligns model reasoning with organizational policies and data governance. While the journey toward widespread MCP adoption will require careful planning, collaboration, and investment, the potential benefits in reliability, security, and development velocity make it a compelling direction for enterprises aiming to unlock scalable AI-enabled workflows.

Perspectives and Impact

The shift to the Model Context Protocol carries far-reaching implications for developers, operators, and business leaders.

- For developers, MCP offers a blueprint for building reusable integration primitives. Instead of writing bespoke connectors for every new tool or data source, engineers can rely on a standardized context that defines what the model can access and how it should operate. This could reduce cognitive load and accelerate feature delivery, particularly in complex, data-rich environments.

- Operators gain improved visibility into model activity. The structured context makes it easier to monitor decisions, trace outcomes, and identify where an action diverges from expected behavior. This enhanced observability is critical for maintaining reliability in production AI systems.

- Business leaders can leverage MCP to improve governance and risk management. A policy-driven approach helps ensure compliance with data privacy, security, and industry regulations. It also supports auditable decision trails, which can be essential for regulatory scrutiny and consumer trust.

- The broader AI ecosystem benefits from a more interoperable landscape. If MCP gains traction as a de facto standard, it could reduce vendor lock-in and enable smoother plugin development, better tool compatibility, and a more resilient AI supply chain.

Future implications include potential standardization efforts led by industry consortia or standards bodies. The collaboration between cloud providers, database vendors, and enterprise software developers will be crucial to ensure broad compatibility. Additionally, the evolution of MCP-related tooling—such as context validators, secure sandboxes, and governance dashboards—will shape how organizations design, deploy, and monitor AI capabilities.

There is also an educational dimension to consider. As MCP becomes more prevalent, curricula for software engineering, data governance, and AI ethics will increasingly emphasize context management, policy enforcement, and integration design. Training programs may focus on how to architect systems that support safe, scalable AI interactions, rather than solely on model fine-tuning or prompt engineering.

In the long term, MCP could influence the competitive landscape of AI platforms. Vendors that provide robust MCP ecosystems—complete with developer tooling, security controls, and interoperability guarantees—may offer faster time-to-value for customers, while those with more fragmented approaches could struggle to maintain momentum. The resulting market dynamics could favor platforms that prioritize open, context-driven interfaces and thoughtful governance over opaque, bespoke integration layers.

However, realizing these benefits requires addressing several challenges. Adopting MCP involves cultural shifts within organizations, as teams move from a “build it bespoke” mindset to a more standardized, policy-driven approach. It also demands investment in governance frameworks, security review processes, and cross-functional collaboration between product, security, and compliance teams. Organizations must balance the need for speed with the imperative to minimize risk, ensuring that MCP implementations do not become bottlenecks due to overly rigid constraints or bureaucratic overhead.

As with any major architectural shift, careful experimentation is essential. Early pilots should measure not only execution success but also metrics related to maintainability, cost, latency, and user satisfaction. By combining quantitative results with qualitative insights from developers and business stakeholders, organizations can refine MCP designs to better meet real-world needs while preserving flexibility where it matters most.
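The quantitative side of a pilot can start very small. The sketch below summarizes a list of run records into a success rate and a median latency; the record keys `ok` and `latency_ms` are assumptions for the example, and measures such as cost or user satisfaction would need their own data sources.

```python
from statistics import median


def summarize_pilot(runs):
    """Summarize pilot runs given dicts with 'ok' and 'latency_ms' keys."""
    if not runs:
        raise ValueError("no runs to summarize")
    successes = sum(1 for run in runs if run["ok"])
    return {
        "success_rate": successes / len(runs),
        "median_latency_ms": median(run["latency_ms"] for run in runs),
    }
```

Median latency is used here instead of the mean so that a single slow outlier does not dominate the picture of a small pilot.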

Ultimately, the promise of MCP lies in turning integration chaos into a predictable, governed, and scalable system for AI-enabled workflows. If effectively realized, MCP could become a foundational element of the next generation of software ecosystems, enabling LLMs to operate with greater reliability, transparency, and interoperability across an expanding universe of tools and data sources.

Key Takeaways

Main Points:

– The Model Context Protocol aims to standardize how AI systems interact with external tools, replacing bespoke glue code.

– MCP emphasizes context, constraints, data provenance, and governance to improve security and reliability.

– Widespread MCP adoption depends on clear standards, interoperable tooling, and industry collaboration.

Areas of Concern:

– Risk of over-constraining model capabilities if contexts are too rigid.

– Challenges in maintaining up-to-date context definitions amid evolving APIs and data schemas.

– Need for robust governance, testing, and cross-vendor interoperability to prevent fragmentation.

Summary and Recommendations

The future of AI integration is likely to be shaped by the Model Context Protocol, which prioritizes structured context, governance, and interoperability. By decoupling model reasoning from the specifics of every external system, MCP can reduce integration complexity, increase predictability, and enhance security. This approach supports scalable AI deployments across enterprises, enabling faster iteration and more reliable outcomes while providing clear auditability and risk management.

To move toward MCP-driven architectures, organizations should:

– Define a clear MCP standard that specifies the elements of context, data access boundaries, and permissible actions.

– Invest in tooling and runtimes that support context construction, validation, and secure execution of model-driven tasks.

– Start with pilot projects focusing on repeatable tasks to demonstrate value and establish best practices.

– Prioritize interoperability by collaborating with vendors and adopting open standards where possible.

– Balance governance with flexibility to avoid stifling model capabilities and innovation.

If implemented thoughtfully, MCP could become a cornerstone of modern AI-enabled software, transforming how teams design, deploy, and govern intelligent systems. The journey requires intentional planning, cross-functional collaboration, and ongoing refinement, but the potential payoff is a more reliable, scalable, and secure AI ecosystem.

References

- Original: https://dzone.com/articles/future-of-ai-integration-why-mcp-is-the-new-api

