TLDR

• Core Points: Microsoft CEO Satya Nadella reframes AI progress as a shift in which humans become stewards who direct and coordinate many interoperable AI systems.

• Main Content: Nadella’s Davos remarks frame AI as an ongoing collaboration between human judgment and machine-scale capabilities, with a focus on responsible use and enterprise-scale impact.

• Key Insights: The metaphor of “managers of infinite minds” emphasizes governance, ethical considerations, and scalable coordination across diverse AI systems.

• Considerations: Practical implementation requires robust governance, transparency, and attention to workforce transformation and security.

• Recommended Actions: Businesses should invest in governance frameworks, upskill employees, and pilot multi-AI coordination strategies that align with ethical guidelines.

Content Overview

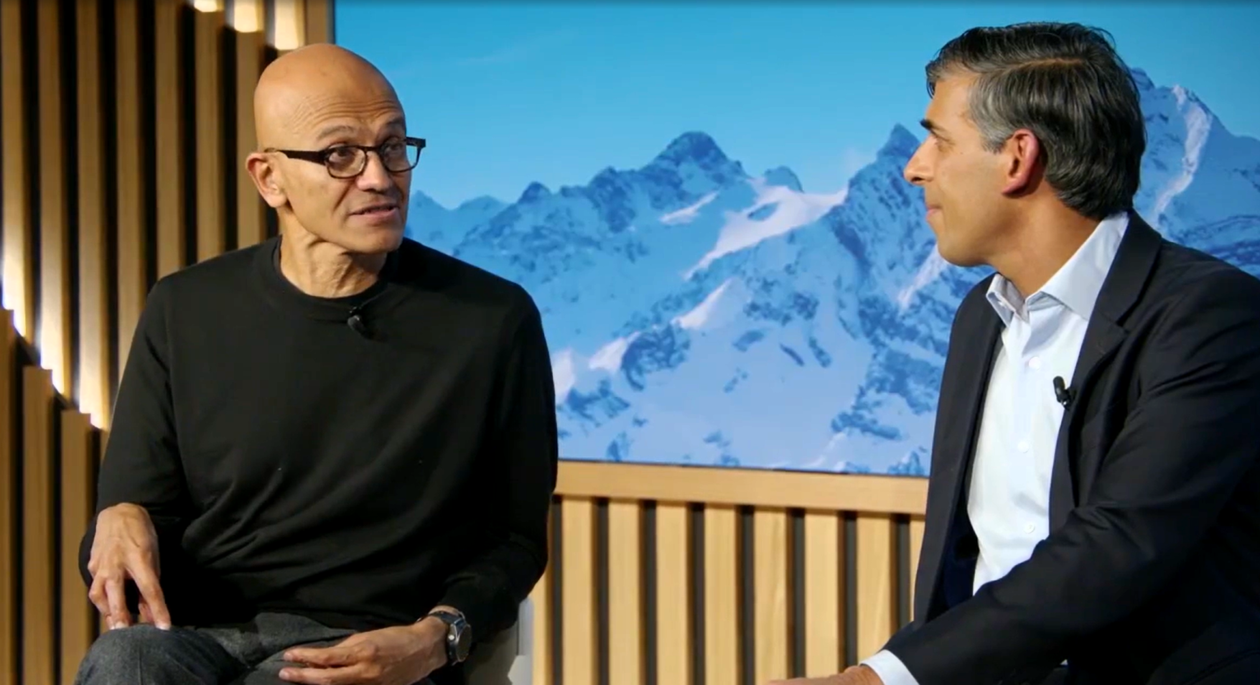

In a high-profile appearance at the World Economic Forum in Davos, Switzerland, Microsoft CEO Satya Nadella offered a new metaphor for the AI revolution. Alongside familiar points about exponential technological change, he proposed that humanity is becoming “managers of infinite minds.” The phrase joins a long tradition of metaphors in tech rhetoric; earlier generations likened computers to clocks, brains, or powerful offices. Nadella’s version casts people not as passive users of AI but as strategic stewards who coordinate a vast, interconnected ecosystem of intelligent systems.

Nadella’s comments come amid broader corporate and policy conversations about responsible AI development, governance, and the integration of AI tools into business processes. Davos gave him a stage to describe how Microsoft sees AI: as an amplifier of human capability rather than a replacement for human judgment. “Infinite minds” suggests AI systems that can process and synthesize information at extraordinary scale, across domains, languages, and contexts. In Nadella’s framing, people become managers who orchestrate these minds: they set objectives, monitor outcomes, and keep the systems aligned with ethical norms and organizational priorities.

This metaphor sits within a larger narrative about practical AI adoption: deploying tools that augment decision-making, automate routine tasks, and surface new insights while keeping governance and accountability in place. As AI capabilities grow, the roles of leaders and workers shift toward stewardship: designing systems, guiding their use, sharing the benefits equitably, and mitigating the risks. Nadella’s Davos remarks thus feed a broader discussion about how to balance innovation with responsibility as intelligent technologies become woven into business, government, and daily life.

In-Depth Analysis

Satya Nadella’s “managers of infinite minds” concept marks a deliberate shift in how AI is discussed. Rather than presenting AI as a monolithic, autonomous force, the metaphor foregrounds human oversight, governance, and close collaboration with machine intelligence. In practical terms, organizations should design AI ecosystems in which human operators set goals, define constraints, and continuously supervise AI-driven processes. The “infinite minds” element underscores the scale of modern AI: systems that can ingest vast amounts of data, learn across tasks, and generate actionable insights far faster than any person. Yet Nadella emphasizes that such power must be managed carefully to avoid unintended consequences, bias, or a loss of accountability.

The Davos moment situates Nadella among a cadre of tech leaders who are increasingly mindful of the social contract surrounding AI. The global audience at the World Economic Forum is typically attentive to the crosscurrents of technology, economics, and governance, including privacy concerns, job displacement, and inequities in access to AI benefits. Nadella’s metaphor aligns with a broader agenda: to frame AI as a collaborative toolkit that extends human intellect rather than replaces it. This perspective resonates with attempts to articulate responsible AI frameworks—principles that emphasize fairness, transparency, accountability, and safety. In practice, the phrase invites questions about how enterprises can operationalize governance across diverse AI solutions, ensure consistent decision-making, and maintain clear lines of responsibility when things go wrong.

A key part of the analysis is the emphasis on “managers” rather than “users.” The distinction shifts responsibility: individuals and organizations are asked to oversee AI systems, curate their inputs, and interpret their outputs in light of ethical and strategic objectives. Deciding which judgments stay with people and which tasks are delegated to machines becomes a central design principle. Leaders are invited to build governance structures that can handle multi-AI coordination, provenance tracking (recording which system produced which output from which inputs), and risk assessment across automated workflows. In such models, humans retain ultimate accountability for outcomes, while AI supplies the cognitive horsepower for scaling analysis, scenario modeling, and decision support.
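To make the “managers, not users” idea concrete, here is a minimal Python sketch of a human-in-the-loop approval gate that keeps a provenance record for each AI output. It assumes a hypothetical two-tier risk scheme (“low” and “high”) and illustrative field names such as `model_id` and `approved_by`; none of this comes from Nadella’s remarks or from any Microsoft product. Low-risk outputs pass straight through, while high-risk outputs are held until a named person approves them, so every consequential result records both the system that produced it and the human who accepted it.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Illustrative sketch: field names and the two risk tiers are assumptions.


@dataclass
class ProvenanceRecord:
    """Ties one AI output to the system that produced it and the human who accepted it."""
    model_id: str                      # hypothetical identifier of the producing system
    request: str                       # what the system was asked to do
    output: str                        # what it proposed
    risk: str                          # "low" or "high" (assumed two-tier scheme)
    approved_by: Optional[str] = None  # accountable reviewer, if any
    approved_at: Optional[str] = None  # UTC timestamp of approval


class ApprovalGate:
    """Lets low-risk outputs through and holds high-risk ones for human sign-off."""

    def __init__(self) -> None:
        self.log: list[ProvenanceRecord] = []

    def submit(self, record: ProvenanceRecord) -> bool:
        """Log the output; return True if it may take effect without review."""
        self.log.append(record)
        return record.risk == "low"

    def approve(self, record: ProvenanceRecord, reviewer: str) -> None:
        """Record which person takes accountability for a held output."""
        record.approved_by = reviewer
        record.approved_at = datetime.now(timezone.utc).isoformat()

    def pending(self) -> list[ProvenanceRecord]:
        """High-risk outputs still waiting for a reviewer."""
        return [r for r in self.log if r.risk != "low" and r.approved_by is None]


gate = ApprovalGate()
draft = ProvenanceRecord("forecast-model", "Q3 demand estimate", "Raise inventory 12%", risk="high")
if not gate.submit(draft):
    print(len(gate.pending()))  # 1: held for review
    gate.approve(draft, reviewer="ops-lead")
print(len(gate.pending()))      # 0
```

In a real deployment the risk tier would come from an organization’s own policy, and the log would be persisted rather than held in memory.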

The word “infinite” evokes scale and interoperability. Nadella’s metaphor implies that AI systems should interoperate across platforms, languages, and domains, enabling more integrated decision-making. For enterprises pursuing large-scale AI deployments, this requires standardized interfaces, data governance policies, and mechanisms for cross-system coordination. Interoperability also raises questions about data stewardship, privacy, and security as more systems connect and share insights. Nadella’s framing therefore underscores the importance of a cohesive AI architecture that can harness distributed intelligence while maintaining a single, auditable chain of responsibility.
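One common way to keep a single, auditable chain of responsibility across many systems is a hash-chained, append-only log. The Python sketch below assumes each entry records which system acted, what it did, and which team owns the action (hypothetical fields). Each entry stores a SHA-256 hash of its own content plus the previous entry’s hash, so altering an earlier record breaks verification.

```python
import hashlib
import json

# Illustrative sketch: entry fields ("system", "action", "owner") are assumptions.


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys) of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditChain:
    """Append-only log where each entry commits to the hash of the one before it."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
    FIELDS = ("system", "action", "owner", "prev")

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, system: str, action: str, owner: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"system": system, "action": action, "owner": owner, "prev": prev}
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash and link; False if an entry was altered or a link is broken."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in self.FIELDS}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


chain = AuditChain()
chain.append("translation-model", "translated supplier contract", "legal-ops")
chain.append("summary-model", "summarized translated contract", "legal-ops")
print(chain.verify())   # True
chain.entries[0]["action"] = "edited after the fact"
print(chain.verify())   # False
```

A hash chain only reveals tampering if the latest hash is also kept somewhere the log’s writer cannot alter, such as a separate store or a periodic signed checkpoint; otherwise someone could rewrite the whole chain consistently, or silently drop the newest entries.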

Beyond technology, Nadella’s remarks often touch on economic and societal dimensions. Davos is a forum where policy-makers, business leaders, and researchers explore how technologies can drive inclusive growth and address global challenges. In presenting AI as a collaborative endeavor between humans and machines, Nadella signals a desire for thoughtful policy and corporate strategy that encourages innovation while safeguarding workers and communities. The metaphor can be read as an invitation to design reskilling programs, create new job categories, and implement transition plans that help workers adapt to AI-enabled workflows. It also suggests a governance mindset that could influence regulatory approaches to AI, including transparency requirements, risk disclosures, and accountability standards for AI systems deployed at scale.

Critically, the metaphor does not resolve all tensions surrounding AI. It acknowledges the promise of intelligent machines while underscoring the necessity of careful stewardship. The success of this framing depends on concrete steps: establishing clear decision rights when AI is involved in high-stakes outcomes, ensuring that AI systems can be audited for bias or error, and maintaining human oversight that is meaningful rather than performative. It also requires a robust privacy and security posture. As AI capabilities expand, so too do the potential vectors for abuse, data breaches, and unintended consequences. Nadella’s framing invites ongoing dialogue about how to design AI ecosystems that are both powerful and controllable, capable of delivering value while remaining aligned with human values and legal norms.
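Auditing for bias can start with simple, well-known fairness metrics. The Python sketch below computes the demographic parity gap, the largest difference in positive-decision rate between groups, for a batch of binary AI decisions. The data and group labels are hypothetical, and demographic parity is only one of several fairness criteria; a small gap does not by itself show that a system is fair.

```python
from collections import defaultdict

# Illustrative sketch: decisions are 1 (positive) or 0; group labels are hypothetical.


def selection_rates(decisions: list[int], groups: list[str]) -> dict[str, float]:
    """Fraction of positive (1) decisions for each group label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions: list[int], groups: list[str]) -> float:
    """Largest difference in selection rate between any two groups (0 means equal rates)."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical batch of eight AI-assisted decisions across two groups.
decisions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(selection_rates(decisions, groups))         # {'A': 0.75, 'B': 0.25}
print(demographic_parity_gap(decisions, groups))  # 0.5
```

Which gap counts as acceptable is a policy decision for the accountable humans, not something the metric settles.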

Another layer of analysis concerns the implications for organizational structure. If leaders and workers are to act as “managers of infinite minds,” then organizational processes must adapt to a future where AI-assisted decision-making is routine. This could entail rethinking roles and responsibilities, developing new collaboration models between data scientists, engineers, and domain experts, and creating governance bodies with authority over AI policy and risk management. The metaphor encourages a proactive stance toward culture, ethics, and long-term strategy, urging leaders to embed responsible AI principles into performance metrics, incentives, and governance workflows. In this sense, Nadella’s Davos statements align with a trend toward human-centered AI governance, where technology serves as an amplifier of human capability rather than a replacement for human judgment.

Finally, Nadella’s remarks contribute to ongoing debates about the pace and direction of AI innovation. The idea of “infinite minds” invokes both optimism and caution: optimism about the potential to unlock new efficiencies and insights, and caution about the need to manage complexity, ensure accountability, and preserve human-centric values. The metaphor provides a lens through which businesses and policymakers can assess AI strategy, risk, and impact. It invites organizations to experiment with scalable coordination approaches—pilot programs that integrate multiple AI tools to tackle complex tasks, while implementing governance mechanisms that keep outcomes transparent and controllable. As the AI landscape continues to evolve, the “managers of infinite minds” concept may serve as a guiding narrative for how society negotiates the balance between powerful machine intelligence and essential human oversight.

Perspectives and Impact

The “managers of infinite minds” metaphor extends beyond a single speech and taps into broader, ongoing debates about how to responsibly deploy AI at scale. Proponents would argue that this framing helps address several persistent concerns: the opacity of AI decisions, the risk of bias, and the potential displacement of workers by automated systems. By recentering humans as stewards, Nadella emphasizes accountability and governance as central to AI strategy. This emphasis aligns with industry calls for robust evaluation of AI models, continuous monitoring for drift or bias, and the establishment of ethical guardrails that adapt as AI capabilities evolve.
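Continuous monitoring for drift is often implemented by comparing the distribution of a model’s inputs or scores in production against a baseline. One widely used statistic is the Population Stability Index (PSI), sketched below in Python. The bin edges and the small floor for empty bins are assumptions of this example. A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but thresholds should be set per use case.

```python
import math
from bisect import bisect_right

# Illustrative sketch: bin edges and the 1e-6 floor are assumptions, not a standard.


def bin_shares(values: list[float], edges: list[float]) -> list[float]:
    """Fraction of values falling in each bin; `edges` are sorted interior cut points."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # Floor empty bins at a tiny share so the log term below stays finite.
    return [max(c / len(values), 1e-6) for c in counts]


def population_stability_index(baseline: list[float], current: list[float], edges: list[float]) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


edges = [2.5, 5.5]                    # hypothetical cut points chosen from the baseline
baseline = [1, 2, 3, 4, 5, 6, 7, 8]
shifted = [x + 5 for x in baseline]   # every value now lands in the top bin
print(population_stability_index(baseline, baseline, edges))        # 0.0
print(population_stability_index(baseline, shifted, edges) > 0.25)  # True
```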

*Image source: Unsplash*

From a workforce perspective, the metaphor highlights the transformative impact of AI on job roles and skill requirements. If humans are to supervise “infinite minds,” then there is a clear case for scaling up reskilling initiatives, creating new roles focused on AI governance and orchestration, and building cross-disciplinary teams that combine technical expertise with domain knowledge. Companies may need to rethink performance metrics to value governance outcomes, reliability, and responsible AI usage as much as traditional efficiency gains or revenue targets.

On the policy front, Nadella’s framing could influence regulatory conversations by underscoring the importance of transparency, safety, and accountability. Regulators may seek mechanisms to ensure that AI systems used in critical sectors—healthcare, finance, infrastructure—are auditable and explainable. The metaphor provides a vocabulary for discussing compliance requirements and risk management strategies that keep pace with rapid technical advancements. It also foregrounds the need for standards around interoperability so that diverse AI systems can coordinate effectively without compromising security or privacy.

In terms of market strategy, the “infinite minds” concept invites organizations to adopt orchestration-layer approaches. Rather than deploying a patchwork of siloed AI tools, firms might invest in governance frameworks, data pipelines, and common interfaces that enable multiple AI systems to work together coherently. This approach can help unlock more sophisticated capabilities, such as end-to-end automation across functions, cross-domain analytics, and more robust decision support. However, it also introduces complexity, requiring careful planning, governance, and risk management to prevent fragmentation or misalignment across AI initiatives.
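An orchestration layer can be as simple as a shared interface plus a router. The Python sketch below assumes a hypothetical `AISystem` protocol (a name, the domains it handles, and a `run` method) and uses a stub in place of a real model; it shows the shape of a common interface, not any vendor’s API. Recording each routing decision gives a governance team a trace of which system handled which task.

```python
from typing import Protocol

# Illustrative sketch: AISystem, Orchestrator, and SummaryStub are hypothetical names.


class AISystem(Protocol):
    """Common interface that every orchestrated system is assumed to implement."""
    name: str
    domains: set[str]

    def run(self, task: str) -> str: ...


class Orchestrator:
    """Routes each task to the first registered system that declares its domain."""

    def __init__(self) -> None:
        self.systems: list[AISystem] = []
        self.trace: list[tuple[str, str, str]] = []  # (domain, system name, task)

    def register(self, system: AISystem) -> None:
        self.systems.append(system)

    def dispatch(self, domain: str, task: str) -> str:
        for system in self.systems:
            if domain in system.domains:
                self.trace.append((domain, system.name, task))
                return system.run(task)
        raise LookupError(f"no registered system handles domain {domain!r}")


class SummaryStub:
    """Stand-in for a real summarization model."""
    name = "summary-stub"
    domains = {"summarization"}

    def run(self, task: str) -> str:
        return f"summary: {task}"


orchestrator = Orchestrator()
orchestrator.register(SummaryStub())
print(orchestrator.dispatch("summarization", "Quarterly report text"))  # summary: Quarterly report text
print(orchestrator.trace)  # [('summarization', 'summary-stub', 'Quarterly report text')]
```

Failing loudly on an unhandled domain, rather than falling back to an arbitrary system, keeps gaps in coverage visible instead of hiding them.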

The potential societal implications are substantial. As AI systems become more capable and integrated into everyday life, questions about privacy, autonomy, and the distribution of benefits become more salient. Nadella’s metaphor implies that society can maximize the positive impact of AI by equipping people with the tools to supervise and coordinate advanced computational partners, ensuring that governance and ethics keep pace with technological advances. If executed thoughtfully, this path could contribute to more informed policymaking, better public service delivery, and enhanced innovation ecosystems. If neglected, it could exacerbate inequalities or empower unchecked automated decision-making with insufficient oversight.

Key Takeaways

Main Points:

– Nadella reframes AI progress as humans acting as stewards who coordinate multiple AI systems, termed “managers of infinite minds.”

– The metaphor emphasizes governance, accountability, and human oversight in a landscape of scalable machine intelligence.

– Interoperability and orchestration across diverse AI tools are central to realizing the benefits of AI at scale.

– The framing aligns with responsible AI discourse, encouraging ethical guidelines, transparency, and risk management.

– Workforce transformation, reskilling, and new governance roles are likely outcomes of this shift.

Areas of Concern:

– Implementing effective governance across numerous AI systems is complex and resource-intensive.

– Risks include opacity in AI decision-making, potential biases, data privacy issues, and security vulnerabilities.

– Balancing rapid innovation with regulatory compliance and workforce protections remains challenging.

– Ensuring equitable access to AI benefits across industries and regions requires deliberate policy and investment.

– The metaphor alone does not prescribe concrete steps; successful execution depends on practical, sustained action.

Summary and Recommendations

Satya Nadella’s Davos articulation of AI as a domain where humans become “managers of infinite minds” presents a compelling framework for thinking about governance, scale, and collaboration between people and machines. It shifts the emphasis away from a simple technological arms race toward a holistic approach that prioritizes accountability, interoperability, and ethical stewardship. The metaphor captures the reality that AI capabilities are expanding rapidly and becoming embedded across diverse sectors, necessitating a governance-centric mindset to ensure responsible deployment and equitable outcomes.

For organizations, the implications are clear: invest in governance structures and orchestration capabilities that can coordinate multiple AI systems, develop clear accountability mechanisms, and integrate ethical considerations into every stage of AI development and deployment. Workforce strategies should focus on reskilling and creating roles centered on AI governance, risk management, and interdisciplinary collaboration. Policymakers and industry bodies can leverage the metaphor to frame standards for transparency, safety, and interoperability, supporting a sustainable AI-enabled economy.

However, turning the metaphor into measurable impact requires concrete steps. Enterprises should start by mapping their AI toolchains, identifying the decision points that rely on AI outputs, and implementing auditable governance processes with defined ownership. Data governance, privacy protections, and security protocols must be built into every layer of AI orchestration. Pilot programs that test multi-AI coordination in narrowly scoped domains can demonstrate value while surfacing governance challenges in a controlled setting.
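Mapping toolchains, finding the decision points that rely on AI outputs, and assigning ownership can begin as a simple inventory. In the Python sketch below, the `DecisionPoint` schema and its two rules (every point needs an accountable owner; high-stakes points must be audited) are illustrative assumptions, not a published standard.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative sketch: the schema and both rules are hypothetical.


@dataclass
class DecisionPoint:
    """A place in a workflow where an AI output feeds a decision."""
    workflow: str
    tool: str
    high_stakes: bool
    owner: Optional[str] = None  # accountable person or team
    audited: bool = False        # whether inputs and outputs are logged for review


def governance_gaps(inventory: list[DecisionPoint]) -> list[str]:
    """Flag points with no owner, and high-stakes points that are not audited."""
    gaps = []
    for point in inventory:
        label = f"{point.workflow}/{point.tool}"
        if point.owner is None:
            gaps.append(f"{label}: no accountable owner")
        if point.high_stakes and not point.audited:
            gaps.append(f"{label}: high-stakes but not audited")
    return gaps


inventory = [
    DecisionPoint("hiring", "resume-screener", high_stakes=True, owner="hr-lead"),
    DecisionPoint("support", "reply-drafter", high_stakes=False),
    DecisionPoint("credit", "risk-scorer", high_stakes=True, owner="risk-team", audited=True),
]
for gap in governance_gaps(inventory):
    print(gap)
# hiring/resume-screener: high-stakes but not audited
# support/reply-drafter: no accountable owner
```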

In the longer term, Nadella’s framing may contribute to a more resilient and inclusive AI ecosystem—one where human judgment remains central, where machine intelligence operates under transparent constraints, and where the benefits of AI are scaled responsibly across society. The metaphor invites ongoing dialogue and action: to build interoperable AI ecosystems, to train and empower a workforce capable of managing these systems, and to cultivate governance norms that keep human values at the core of rapid technological progress.

References

- Original: https://www.geekwire.com/2026/satya-nadellas-new-metaphor-for-the-ai-age-we-are-becoming-managers-of-infinite-minds/

- Related: [1] OpenAI policy and governance resources, [2] Microsoft AI principles and governance frameworks, [3] World Economic Forum AI governance discussions
