TLDR

• Core Points: AI integration is moving from bespoke connectors to standardized model context protocols that unify communication with data, tools, and services.

• Main Content: Context-based protocols offer scalable, maintainable interfaces for LLMs to access databases, APIs, and business tools, reducing glue code.

• Key Insights: A standardized protocol can mitigate fragmentation, improve security, governance, and performance, while enabling broader tool interoperability.

• Considerations: Adoption hurdles, governance of prompts and context, versioning, and security implications require careful design.

• Recommended Actions: Invest in developing and adopting a Model Context Protocol standard, update tooling to support it, and pilot cross-tool integrations with strong governance.

Content Overview

The evolution of software integration has long been defined by custom code. For years, connecting disparate systems required bespoke adapters, connectors, and a continuous cycle of maintenance. Each new integration introduced unique quirks, compatibility issues, and debugging headaches. This pattern persists as organizations increasingly leverage artificial intelligence, particularly large language models (LLMs), to operate across data stores, APIs, and business tools. Developers find themselves writing ever more glue code to enable LLMs to query databases, call external services, and orchestrate workflows. The result is a landscape still characterized by fragmentation, where each integration bears its own set of constraints and risks.

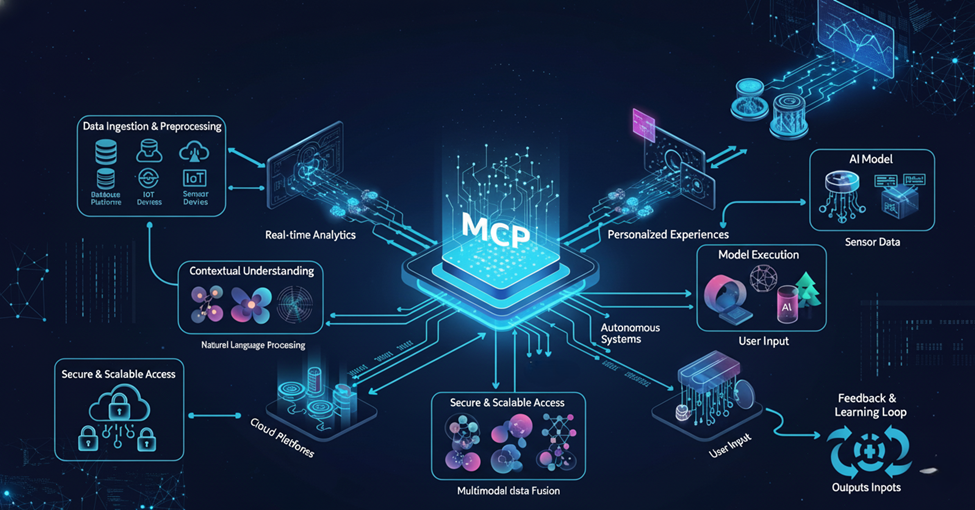

As AI becomes a central engine for decision making, automation, and insight generation, the limitations of current integration approaches become more apparent. The common thread across many projects is the absence of a unified, scalable mechanism that can describe, negotiate, and govern interactions between an AI model and external systems. The proposed shift is toward a Model Context Protocol (MCP)—a standardized way to provide context, capabilities, and intents to LLMs so they can operate with greater autonomy and safety. By adopting such a protocol, organizations can reduce the complexity of integration, improve reliability, and unlock more powerful use cases where AI agents act across multiple tools and data sources in a consistent manner.

This article examines why MCP is poised to become the new API paradigm, what benefits it offers, the challenges to adoption, and the practical steps organizations can take to begin migrating toward this approach. It also considers the broader implications for governance, security, developer productivity, and the AI ecosystem as a whole.

In-Depth Analysis

The core problem with current AI integrations is familiar to any software engineer: bespoke glue code. When an LLM needs to access a database, call an external API, or trigger a business workflow, developers often write custom connectors or adapters. These pieces of glue are brittle by nature, because each one is tailored to a specific tool, data schema, or API version. As a result, maintenance costs escalate, and the time required to onboard new tools compounds quickly. Each integration becomes a special case, requiring bespoke handling for authentication, rate limiting, data transformation, and error handling. The proliferation of adapters without a unifying standard creates fragmentation that hampers scalability.

A Model Context Protocol aims to address this fragmentation by formalizing how an AI model should understand and interact with external systems. MCP centers on three pillars: context, capabilities, and intents. Context describes the current state, data, and constraints relevant to the AI’s task. Capabilities define what a given tool or data source can do, including supported operations, input formats, and security requirements. Intents articulate the objective of the AI’s action, guiding the model toward appropriate actions within defined governance boundaries. By codifying these elements into a machine-readable protocol, MCP provides a consistent interface for LLMs to interact with diverse tools.
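
As a rough illustration of the three pillars, the sketch below models context, capabilities, and intents as plain Python data classes. The class names, field names, and the `missing_arguments` helper are assumptions made for this example; they are not taken from any published protocol specification.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class Capability:
    """One operation a tool advertises (hypothetical shape)."""
    name: str
    input_fields: tuple[str, ...]
    requires_auth: bool = True


@dataclass(frozen=True)
class Context:
    """State and constraints relevant to the current task."""
    task_id: str
    data: dict[str, Any] = field(default_factory=dict)
    constraints: tuple[str, ...] = ()


@dataclass(frozen=True)
class Intent:
    """What the model wants to achieve, and through which capability."""
    goal: str
    capability: str
    arguments: dict[str, Any] = field(default_factory=dict)


def missing_arguments(intent: Intent, capability: Capability) -> list[str]:
    """Return the capability's input fields that the intent does not supply."""
    return [name for name in capability.input_fields if name not in intent.arguments]
```

A host application could run a check like `missing_arguments` before dispatching an intent, so that malformed requests are rejected at the protocol boundary instead of inside each tool.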

Benefits of MCP include:

- Consistency and Predictability: A standard model protocol reduces ad hoc integration logic, enabling developers to anticipate how an LLM will behave when interacting with a given tool or data source.

- Improved Observability and Governance: With explicit context and capabilities, it becomes easier to monitor, audit, and control AI actions, enforce policy, and trace decisions.

- Enhanced Security: A well-designed MCP can embed authentication, authorization, and data handling policies at the protocol level, mitigating common risks associated with LLM access to external services.

- Reusability and Portability: Once a tool’s capabilities and context are defined, multiple models and applications can reuse the same integration logic, accelerating development.

- Scalability: MCP’s standardized approach supports expanding tool ecosystems without a corresponding explosion of bespoke connectors.

However, transitioning to MCP is not without challenges. Industry-wide standardization requires consensus on how to represent context, capabilities, and intents, as well as how to version and evolve the protocol over time. There are also concerns about performance overhead, potential bottlenecks in the protocol layer, and ensuring that policies scale with increasing tool diversity. Additionally, organizations must consider data governance, privacy, and compliance when exposing internal systems to LLM-driven workflows.

From a technical standpoint, MCP is not a single, monolithic protocol but a framework for describing interactions in a structured, machine-actionable way. It can be implemented through a combination of schema definitions, interface contracts, and dynamic policy engines. The protocol would typically specify:

- Data contracts: The schema for data exchanged between the LLM and external systems, including input/output formats and data types.

- Tool capabilities: A formal description of what operations a tool supports, including supported methods, endpoints, rate limits, and required authentication.

- Context descriptors: A mechanism to convey the current task context, data provenance, and constraints that influence the AI’s decisions.

- Policy and governance rules: Access control, data handling, retention, and security policies that govern how tools may be used by the AI.
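
The four elements above could be captured in a single machine-readable descriptor per tool. The following is a minimal sketch, assuming a dictionary-based descriptor and a simple type-based data contract; `ORDER_LOOKUP`, its keys, and `validate_payload` are hypothetical names chosen for illustration.

```python
from typing import Any

# Hypothetical descriptor for one tool; every key name here is illustrative.
ORDER_LOOKUP = {
    "name": "order_lookup",
    "operations": {
        "get_order": {
            "input": {"order_id": str, "include_items": bool},
            "output": {"status": str, "total_cents": int},
        }
    },
    "rate_limit_per_minute": 60,
    "auth": "oauth2",
    "policy": {"allowed_roles": ["support_agent"], "retention_days": 30},
}


def validate_payload(contract: dict[str, type], payload: dict[str, Any]) -> list[str]:
    """Check a payload against a data contract and return readable errors."""
    errors = []
    # Every field in the contract must be present with the expected type.
    for name, expected in contract.items():
        if name not in payload:
            errors.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            errors.append(f"{name}: expected {expected.__name__}")
    # Fields outside the contract are rejected rather than silently passed on.
    for name in payload:
        if name not in contract:
            errors.append(f"unexpected field: {name}")
    return errors
```

In practice a schema language such as JSON Schema would likely replace the bare Python types used here; the point is only that the contract, capabilities, and policy live together in one description that tooling can check.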

Adoption scenarios for MCP can vary. In some cases, organizations may adopt MCP as an internal standard to align all tool integrations across products and teams. In others, MCP could become part of a broader industry standard, promoted by consortiums or standards bodies to lower interoperability barriers across vendors. Regardless of the path, a phased approach typically proves most effective: start with a subset of tools, define a shared context and capability model for those tools, implement monitoring and governance pipelines, and iterate on the protocol based on real-world usage.

The cultural shift accompanying MCP adoption is significant. Teams accustomed to bespoke integrations will need to embrace a model-centric mindset, where API design, security, and governance are embedded into how models operate rather than being relegated to separate layers of middleware. This shift requires collaboration among AI researchers, software engineers, security professionals, data stewards, and governance leads. It also implies investing in tooling that can generate, validate, and test MCP descriptions, verify compatibility between models and tools, and simulate interactions to detect issues before production deployments.

Beyond technical considerations, MCP has implications for the AI ecosystem’s competitive landscape. Vendors offering standardized, tool-rich MCP ecosystems can enable a broader range of model capabilities with less friction, potentially accelerating innovation and time-to-value for customers. Conversely, concerns may arise about lock-in if certain MCP implementations privilege specific tool sets or providers. To mitigate such risks, a transparent, extensible, and interoperable MCP specification is essential, along with robust governance that supports vendor neutrality and open participation.

A practical path forward involves several concrete steps. First, organizations should map their current integration debt: identify tools, data sources, and services that rely on brittle glue logic and document the pain points. Next, define a minimal viable MCP for a representative set of tools—such as a database, a REST API, and a cloud-based service—describing the required data contracts, available operations, and governance policies. Then, implement experimental pilots where an LLM uses the MCP-described tools to perform end-to-end tasks, collecting metrics on latency, reliability, security events, and overall user outcomes. As confidence grows, expand MCP coverage to more tools and introduce versioning, test suites, and automated validation to ensure compatibility across model updates and tool changes.
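
For the pilot stage, even a very small harness can produce the latency and reliability numbers mentioned above. The sketch below is one possible approach, assuming each tool call can be wrapped in a zero-argument callable; `run_pilot` and the report keys are hypothetical.

```python
import statistics
import time
from typing import Callable


def run_pilot(tool: Callable[[], object], attempts: int) -> dict[str, float]:
    """Call a tool repeatedly and summarize success rate and median latency."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    latencies = []
    failures = 0
    for _ in range(attempts):
        start = time.perf_counter()
        try:
            tool()
        except Exception:
            # Any exception from the tool counts as one failed attempt.
            failures += 1
        latencies.append(time.perf_counter() - start)
    return {
        "attempts": attempts,
        "success_rate": (attempts - failures) / attempts,
        "median_latency_s": statistics.median(latencies),
    }
```

Security events and user outcomes would need their own instrumentation; this harness only covers the two metrics that can be measured from the call site.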

Another critical dimension is performance. The MCP layer adds an abstraction boundary between the LLM and tools. It is crucial to design this boundary to minimize latency, maximize throughput, and support streaming or near-real-time interactions where appropriate. Techniques such as asynchronous request handling, caching, request batching, and parallel tool orchestration can help maintain a responsive experience. Additionally, clear error handling and fallback strategies are essential to prevent cascading failures when a tool is unavailable or returns an error.
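
Parallel orchestration with timeouts and fallbacks can be sketched with Python's standard `asyncio` module. The helper names `call_with_fallback` and `fan_out` are assumptions for this example, and returning `None` as the fallback is just one possible policy.

```python
import asyncio
from typing import Any, Awaitable, Callable

ToolCall = Callable[[], Awaitable[Any]]


async def call_with_fallback(call: ToolCall, timeout_s: float, fallback: Any) -> Any:
    """Run one tool call; return the fallback on timeout or error."""
    try:
        return await asyncio.wait_for(call(), timeout=timeout_s)
    except Exception:
        # Timeouts and tool errors both land here, so one slow or broken
        # tool cannot take down the whole request.
        return fallback


async def fan_out(calls: dict[str, ToolCall], timeout_s: float = 1.0) -> dict[str, Any]:
    """Run all tool calls concurrently and collect results by tool name."""
    names = list(calls)
    results = await asyncio.gather(
        *(call_with_fallback(calls[name], timeout_s, fallback=None) for name in names)
    )
    return dict(zip(names, results))
```

Because every call is wrapped individually, the total wait is bounded by roughly one timeout rather than the sum of all tool latencies, and a failing tool degrades to a missing result instead of a cascading failure.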

Security and privacy must be central to any MCP strategy. Access control policies should be granular and auditable, with role-based or attribute-based controls aligned to data sensitivity. Data minimization principles should guide what information is shared with external tools or stored in the MCP layer. Observability tooling, including detailed logging and anomaly detection, is indispensable for detecting misuse or misconfiguration. Compliance considerations—such as handling personally identifiable information and sensitive business data—should shape MCP design from the outset rather than as an afterthought.
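
Role-based access control and data minimization can be combined in a single gate in front of each tool. The sketch below assumes a static in-memory policy table; `POLICIES`, the tool name `crm_search`, and the field names are hypothetical.

```python
from typing import Any

# Hypothetical policy table: which roles may call a tool, and which
# fields may be shared with it.
POLICIES = {
    "crm_search": {
        "allowed_roles": {"support_agent", "account_manager"},
        "shareable_fields": {"customer_id", "plan", "open_tickets"},
    }
}


def authorize_and_minimize(tool: str, role: str, record: dict[str, Any]) -> dict[str, Any]:
    """Deny unknown tools or roles, then drop fields the policy does not list."""
    policy = POLICIES.get(tool)
    if policy is None or role not in policy["allowed_roles"]:
        raise PermissionError(f"{role!r} may not call {tool!r}")
    return {key: value for key, value in record.items() if key in policy["shareable_fields"]}
```

The gate is deny-by-default: a tool with no policy entry cannot be called at all, and any field not explicitly listed as shareable is stripped before it reaches the tool.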

From a developer experience perspective, MCP can reduce cognitive load and accelerate delivery. Developers can rely on a consistent interface and a library of reusable, tested tool descriptions rather than building bespoke glue for each integration. This consistency also simplifies testing: simulation environments and automated tests can validate MCP interactions across a range of models and scenarios. Over time, standardized MCP descriptions may become a prerequisite for marketplace platforms and vendor ecosystems, enabling plug-and-play AI capabilities with diverse data sources and services.
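
A simulation environment can be as simple as a stand-in tool that replays canned responses and records what was asked of it. The following is a minimal sketch under that assumption; `SimulatedTool` and `summarize_order` are hypothetical names, and the latter stands in for whatever agent step is under test.

```python
from typing import Any


class SimulatedTool:
    """Stand-in for a real tool: replays canned responses and records calls."""

    def __init__(self, responses: dict[str, Any]) -> None:
        self._responses = responses
        self.calls: list[tuple[str, dict[str, Any]]] = []

    def invoke(self, operation: str, arguments: dict[str, Any]) -> Any:
        self.calls.append((operation, arguments))
        if operation not in self._responses:
            raise KeyError(f"unsupported operation: {operation}")
        return self._responses[operation]


def summarize_order(tool: SimulatedTool, order_id: str) -> str:
    """Example step under test: fetch an order and format its status."""
    order = tool.invoke("get_order", {"order_id": order_id})
    return f"Order {order_id} is {order['status']}"
```

A test can then assert both on the returned text and on `tool.calls`, which verifies that the step asked for the right operation with the right arguments without touching a live system.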

Nevertheless, broad adoption requires collaboration. Standards bodies, major cloud providers, AI platform vendors, and enterprise users must participate in shaping the MCP specification. Open reference implementations, tooling ecosystems, and conformance tests will be critical to avoid fragmentation and to ensure that MCP remains accessible and useful across different contexts. In parallel, organizations should consider how MCP intersects with existing API standards (REST, GraphQL, gRPC) and data interchange formats (JSON, Avro, Parquet) to maximize compatibility and minimize duplication of effort.

*Image source: Unsplash*

In sum, the Model Context Protocol represents a potential turning point in how AI systems integrate with the broader software landscape. By standardizing how context, capabilities, and intents are described and negotiated, MCP aims to replace bespoke connectors with a scalable, governed, and interoperable framework. If widely adopted and thoughtfully implemented, MCP could unlock more capable AI agents, accelerate cross-tool automation, and tame the complexity that has long plagued AI-driven integration projects. The path forward will require technical ingenuity, disciplined governance, and a collaborative spirit among developers, operators, security professionals, and business stakeholders.

Perspectives and Impact

Looking ahead, several macro-trends converge to bolster the case for MCP. First, the AI market is moving toward increasingly capable agents that perform multi-step tasks across multiple tools. This requires a coherent, scalable mechanism for the model to discover and safely use tools without continuous bespoke integration work. Second, organizations are under pressure to deploy AI more rapidly while maintaining control over data, security, and compliance. A standardized protocol that encapsulates context and policy helps meet this need by providing a repeatable pattern for integration that is auditable and evolvable.

Third, the rise of composable architectures—where microservices, data services, and AI components are assembled to form end-to-end workflows—aligns with MCP’s philosophy. In such environments, having a shared language to describe tool capabilities and context reduces coupling and simplifies orchestration. Fourth, as data ecosystems expand to include not only structured databases but data lakes, data warehouses, streaming platforms, and edge devices, the need for a unified interface to heterogeneous data sources becomes more acute. MCP can serve as the connective tissue that harmonizes access rules, formatting, and governance across diverse data modalities.

The broader implications for governance and risk management are also meaningful. With MCP, organizations can implement centralized policy enforcement for how AI interacts with sensitive data, how long data is retained, and how tools may be invoked. This can enhance compliance with data protection regulations and help demonstrate due diligence during audits. From a market perspective, MCP-facilitated interoperability can lower integration barriers for smaller vendors and agencies, enabling a richer ecosystem of AI-enabled tools and services without prohibitive integration costs.

However, the transition to MCP is not a silver bullet. It requires substantial investment in standardization efforts, tooling, and training. There is a real risk of premature standardization—where a proposed MCP becomes locked into a suboptimal design that stifles innovation. To mitigate this, the MCP movement should emphasize extensibility, backward compatibility, and open governance. It should also support multiple profiles or dialects to accommodate industry-specific needs while preserving core compatibility.
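
Backward compatibility is easier to enforce when it is expressed as an explicit rule. The sketch below assumes a `MAJOR.MINOR` versioning convention in which minor releases only add features; this convention and the function names are assumptions for illustration, not something the protocol discussion above prescribes.

```python
def parse_version(text: str) -> tuple[int, int]:
    """Parse a 'MAJOR.MINOR' string into a pair of integers."""
    major, minor = text.split(".")
    return int(major), int(minor)


def is_compatible(client: str, server: str) -> bool:
    """Return True if the server can serve a client built for `client`.

    Assumed rule: same major version, and the server's minor version is
    at least the client's, since minor releases only add features.
    """
    client_major, client_minor = parse_version(client)
    server_major, server_minor = parse_version(server)
    return client_major == server_major and server_minor >= client_minor
```

For example, a client written against version 1.2 would work with a 1.4 server under this rule, while a 2.0 client would be rejected by a 1.9 server.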

Another consideration is performance and resilience. An additional protocol layer can become a bottleneck if not designed with low-latency principles and robust failure handling. Therefore, performance engineering must be integral to MCP design, including strategies for partitioning, regional deployment, and intelligent routing of requests to the most appropriate tools. The human factors involved are equally important. Teams must adapt to a model-centric paradigm, investing in training that covers protocol design, tool description, security principles, and testing practices. The cultural shift may be as impactful as the technical one, requiring new collaboration patterns between AI researchers, software developers, security engineers, and product managers.

As MCP matures, we can expect a more dynamic AI ecosystem. Tool providers could publish MCP-compliant capabilities and context definitions, enabling easy onboarding for customers who want to assemble AI workflows with minimal friction. AI platforms could offer marketplaces of MCP-described tools, with standardized pricing, access controls, and governance policies. For organizations, this could translate into faster time-to-value, better risk management, and the ability to experiment with more ambitious AI-driven processes.

Yet political and organizational dynamics matter. Industry coalitions, vendor neutrality, and transparent governance will play a critical role in ensuring MCP remains broadly beneficial rather than becoming a tool for a few dominant platforms. Stakeholders should advocate for open specifications, reference implementations, and interoperability tests that promote healthy competition and protect customer interests.

In the longer term, the MCP concept could influence the evolution of APIs themselves. If MCP proves its value in reducing integration complexity and enabling safer, more scalable AI actions, API design practices may incorporate MCP-like principles as a default layer for AI interactions. This could lead to a hybrid landscape where MCP governs model-to-tool interactions, while traditional APIs continue to serve standard data exchange and interoperability needs. The result would be a more resilient, adaptable software ecosystem that can evolve with advances in AI capabilities.

Key Takeaways

Main Points:

– Model Context Protocol offers a standardized approach to AI-system integration, replacing bespoke glue code.

– MCP emphasizes context, capabilities, and intents to enable safe, scalable interactions with data and tools.

– Adoption requires collaboration across standards bodies, vendors, and enterprise users, with strong governance and extensibility.

Areas of Concern:

– Achieving broad, vendor-neutral standardization may be challenging.

– Potential performance overhead and security risks if not thoughtfully designed.

– Risk of premature standardization or lock-in without open governance.

Summary and Recommendations

The trajectory of AI integration points toward a standardized, governance-driven model for interfacing AI with external systems. The Model Context Protocol presents a compelling vision: a consistent, scalable, and secure way for LLMs to reason about and interact with data sources, APIs, and business tools. By codifying context, capabilities, and intents into a machine-readable protocol, MCP can reduce the fragmentation that currently burdens integration projects, accelerate development velocity, and improve observability and security. The transition will not be trivial. It requires a concerted effort from technology providers, enterprises, and standards bodies to define robust, extensible descriptions, develop tooling for authoring and validating MCP specifications, and cultivate an ecosystem that values interoperability and open governance.

Organizations considering this shift should begin with a pragmatic, phased approach:

– Inventory and assess current integration debt, identifying the most critical tools and data sources.

– Define a minimal viable MCP for a representative tool set, outlining data contracts, operations, and governance rules.

– Implement pilot programs with measurable outcomes (latency, reliability, security events, user impact) to validate the approach.

– Invest in tooling for MCP authoring, testing, and validation, plus monitoring and governance pipelines.

– Foster collaboration with partners and standards bodies to promote openness, compatibility, and ongoing evolution of the protocol.

If executed thoughtfully, MCP has the potential to transform AI-driven integration from a perpetual cycle of bespoke glue code into a scalable, secure, and maintainable framework. This would empower AI applications to operate with greater autonomy and reliability across diverse data sources and tools, unlocking new capabilities and accelerating time-to-value for organizations embracing AI at scale.

References

- Original: https://dzone.com/articles/future-of-ai-integration-why-mcp-is-the-new-api

