TLDR

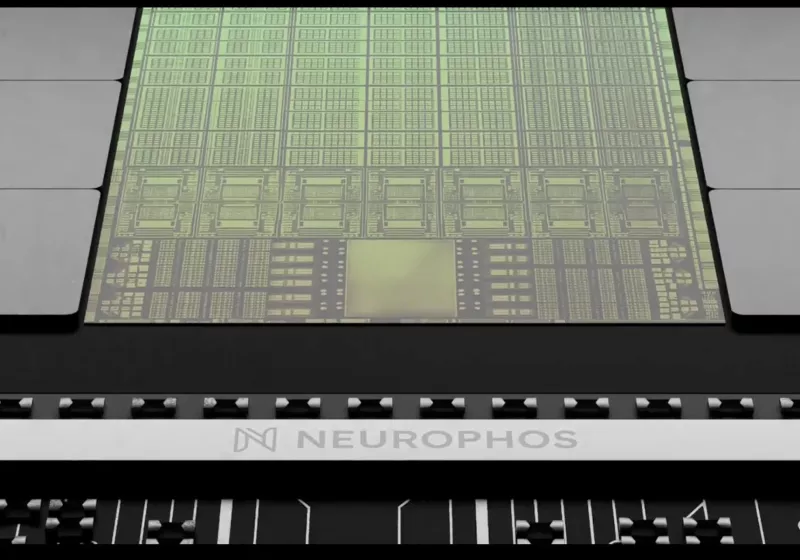

• Core Points: A startup, Neurophos, proposes an optical processing unit using light to perform computations, claiming up to 470 petaFLOPS FP4/INT4 with power draw similar to Nvidia Rubin GPUs.

• Main Content: The concept centers on optical computing to surpass conventional silicon performance while maintaining comparable energy efficiency, addressing industry pressure for higher compute density.

• Key Insights: If scalable, light-based chips could transform AI workloads, but significant engineering and manufacturing hurdles remain for reliable, mass-produced accelerators.

• Considerations: Feasibility, manufacturability, interoperability with existing software, and long-term cost will determine impact.

• Recommended Actions: Monitor Neurophos’ technical milestones, independent validation, and potential partnerships with hyperscalers or foundries.

Product Specifications & Ratings

| Category | Description | Rating (1-5) |
|---|---|---|
| Design | Optical processing architecture concept aiming to replace electronic compute paths | N/A |
| Performance | Claimed 470 petaFLOPS FP4/INT4, approx 10x Nvidia Rubin GPUs | N/A |
| User Experience | Not applicable (concept stage) | N/A |
| Value | Theoretical efficiency with similar power draw; practical cost unknown | N/A |

Overall: N/A

Content Overview

The article centers on a startup named Neurophos proposing an optical processing unit (OPU) that performs computations with light rather than electrons. The company asserts that its technology could achieve up to 470 petaFLOPS of FP4/INT4 compute while drawing power comparable to Nvidia’s Rubin GPUs. The broader context is the industry’s ongoing push to increase compute density and performance without proportionally increasing energy consumption, driven by AI workloads that demand massive parallel processing. Optical computing has long been explored as a path to higher bandwidth and lower latency because photons travel and interact differently from electrons, potentially enabling faster data movement and reduced heat generation. Neurophos’ claim places the concept into a more concrete performance target, suggesting a potential path to an order-of-magnitude gain over current silicon-based accelerators. However, the article also implies scrutiny, given the early-stage nature of optical computing technologies and the challenges inherent in translating laboratory demonstrations into reliable, scalable, and cost-effective production hardware.

In-Depth Analysis

Neurophos enters a field that has seen periodic excitement around optical or photonic computing as a solution to the end of Moore’s Law-era scaling for traditional silicon chips. The premise is straightforward: use light to perform computations or to shuttle data within a processor architecture, thereby potentially reducing energy loss and increasing data throughput. In conventional electronic accelerators, the movement of electrons through copper interconnects and the processing within transistors create both latency and heat that scale with performance demands. Photons, in contrast, can offer higher bandwidth and lower energy per bit transferred, especially for certain linear algebra operations central to AI workloads.

Neurophos asserts that an optical processing unit could reach 470 petaFLOPS of FP4/INT4 compute. FP4 and INT4 are low-precision formats (4-bit floating point and 4-bit integer, respectively) that can accelerate AI inference and some training tasks while reducing memory and compute requirements. If true, 470 PFLOPS at similar power to Nvidia’s Rubin GPUs would imply roughly an order-of-magnitude improvement in compute per watt, a tantalizing proposition for data centers, edge devices, and other high-demand environments.
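To make the 4-bit integer format concrete, here is a minimal sketch of symmetric INT4 quantization. It is an illustration only: the function names and sample weights are hypothetical, and nothing here reflects how Neurophos or Nvidia actually encode values.

```python
def quantize_int4(values):
    """Symmetric per-tensor quantization to signed 4-bit codes in [-8, 7]."""
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude to code 7; fall back to 1.0 for an all-zero input.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int4(codes, scale):
    """Recover approximate real values from 4-bit codes."""
    return [c * scale for c in codes]


# Hypothetical sample weights, chosen only for illustration.
weights = [0.7, -0.3, 0.12, 0.0, -0.7]
codes, scale = quantize_int4(weights)
restored = dequantize_int4(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, round(scale, 4), round(max_error, 4))
```

Each weight is stored in one of only 16 codes, which is why 4-bit formats cut memory and compute cost, and also why the rounding error (at most about half of `scale` here) matters for accuracy.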

Yet several key challenges temper optimism. First, delivering high-precision, repeatable, and programmable optical computations at scale remains difficult. Optical processors often rely on analog signal processing, where noise, drift, fabrication variability, and calibration can impact accuracy. Second, programming models, compilers, and hardware-software co-design must mature to exploit an optical path’s strengths while accommodating existing AI frameworks. Third, integration with memory hierarchies, data ingestion, and result retrieval, particularly in mixed-precision regimes, requires careful architectural planning to avoid bottlenecks that erode theoretical gains.
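The accuracy concern can be illustrated with a toy simulation. The sketch below adds Gaussian noise to each output of a matrix-vector product as a crude stand-in for analog error; the noise model, matrix, and noise levels are all assumptions made for illustration and do not describe any real optical device.

```python
import random


def matvec(matrix, vector):
    """Exact matrix-vector product."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def noisy_matvec(matrix, vector, noise_std, rng):
    """Matrix-vector product with additive Gaussian noise on each output."""
    return [y + rng.gauss(0.0, noise_std) for y in matvec(matrix, vector)]


def mean_abs_error(matrix, vector, noise_std, trials=200, seed=0):
    """Average absolute deviation from the exact result over many noisy runs."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    exact = matvec(matrix, vector)
    total = 0.0
    for _ in range(trials):
        noisy = noisy_matvec(matrix, vector, noise_std, rng)
        total += sum(abs(a - b) for a, b in zip(noisy, exact)) / len(exact)
    return total / trials


# Hypothetical inputs, chosen only for illustration.
matrix = [[0.5, -0.25, 0.125], [1.0, 0.0, -1.0]]
vector = [1.0, 2.0, 3.0]
for std in (0.0, 0.01, 0.1):
    print(f"noise_std={std}: mean abs error={mean_abs_error(matrix, vector, std):.4f}")
```

In this simplified model the output error grows in proportion to the noise level, which hints at why calibration and drift control matter so much for analog compute paths.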

The industry context is critical. Nvidia’s Rubin GPUs, built on mature CMOS technology, have benefitted from decades of optimization, robust software ecosystems (CUDA, cuDNN, libraries, and toolchains), and extensive hardware validation across thousands of deployments. A shift to photonics would necessitate complementary breakthroughs in packaging (how optical and electronic components are integrated), thermal management, fault tolerance, and long-term reliability in varied operating environments.

Moreover, supply chain and manufacturing considerations loom large. If Neurophos’ architecture depends on novel materials, specialized fabrication steps, or non-standard foundry processes, achieving cost-effective mass production could become a limiting factor. The capacity to produce at scale with consistent yields determines whether any theoretical performance advantages translate into real-world capabilities.

From a market perspective, the potential impact hinges on a few trajectories:

– AI inference acceleration: If FP4/INT4 workloads benefit substantially from optical paths with lower energy per operation and high throughput, data centers could see meaningful efficiency gains, particularly for large-scale multi-tenant deployments.

– Training considerations: Training often requires higher precision and stability; whether a photonic approach can deliver competitive performance across training tasks remains uncertain.

– Edge and embedded scenarios: Photonic advances could enable energy-efficient acceleration in environments where power and cooling are constrained, assuming ruggedness and integration reliability are addressed.

Independent validation will be critical. Early-stage claims frequently rely on projections or demonstrations under constrained benchmarks. Third-party benchmarking, reproducibility across different testbeds, and transparent reporting of metrics such as actual energy per operation, latency, and end-to-end application performance will be essential to establish credibility.
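As a rough illustration of the energy-per-operation metric, the sketch below divides power by throughput. The 470 peta-operations per second figure is the claim reported above; the wattage is a hypothetical placeholder, since no power number is given here.

```python
def energy_per_op_femtojoules(power_watts, ops_per_second):
    """Average energy per operation in femtojoules (1 W = 1 J/s)."""
    if ops_per_second <= 0:
        raise ValueError("ops_per_second must be positive")
    return power_watts / ops_per_second * 1e15


CLAIMED_OPS_PER_SECOND = 470e15  # claimed peak throughput
ASSUMED_POWER_WATTS = 1000.0     # hypothetical placeholder, not a published figure

fj_per_op = energy_per_op_femtojoules(ASSUMED_POWER_WATTS, CLAIMED_OPS_PER_SECOND)
print(f"{fj_per_op:.2f} fJ per operation at the assumed power")
```

A figure computed this way reflects peak throughput only; measured energy per operation on end-to-end workloads would also include memory access, data conversion, and idle time.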

Neurophos’ narrative aligns with a broader ecosystem interest in non-electronic computing paradigms that might extend the lifespan of energy-efficient AI acceleration. It sits among a suite of research directions, including photonic neural networks, spintronics, neuromorphic systems, and quantum-inspired accelerators. Each of these approaches confronts a core tension: the gap between laboratory feasibility and production-grade, scalable hardware that can operate reliably in diverse data-center racks and compute farms.

*Image source: Unsplash*

Looking ahead, several technical milestones would help the field gauge progress:

– Demonstrations of programmable optical search and compute primitives onto which neural network operations can be mapped with stable results.

– Benchmark comparisons across representative AI workloads, including large language models and computer vision tasks, to quantify any real-world speedups and energy benefits.

– Integration pathways with electronic memory and processing units that preserve the advantages of photonics without introducing unacceptable complexity or latency.

– Robust error correction, calibration, and fault-tolerance techniques to handle long-running workloads in production environments.

– Clear roadmaps detailing manufacturing plans, partner ecosystems (foundries, materials suppliers, packaging specialists), and timelines for pilot deployments.

It is also important to consider the software ecosystem implications. AI developers rely on mature, optimized libraries and runtime environments. A shift to optical computing would require new compilers, accelerators, and runtime stacks that can convert high-level models into efficient photonic circuits. Cross-compatibility with existing frameworks would be advantageous to encourage adoption and minimize disruption for organizations already invested in silicon-based accelerators.

In sum, Neurophos presents a bold claim: a light-based chip could deliver ten times the performance of Nvidia’s latest Rubin GPUs at a similar power level. The appeal is clear: vast improvements in compute density could unlock faster AI inference, more capable training, and improved energy efficiency. However, the path from concept to commercial product is fraught with scientific, engineering, and economic hurdles. The critical questions concern not only whether the 470 petaFLOPS figure can be realized in a programmable, general-purpose accelerator, but also whether such devices can be manufactured at scale, integrated into existing data-center architectures, and supported by a robust software ecosystem. As with many disruptive technologies, cautious optimism is warranted, with attention to independent validation, partnerships, and clear demonstration milestones before large-scale adoption.
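The arithmetic behind the headline claim can be sketched as follows. The two inputs are the claimed figures from this article; the baseline is merely what those figures imply, not an official Nvidia specification, and the function names are illustrative.

```python
CLAIMED_PFLOPS = 470.0   # claimed FP4/INT4 throughput
CLAIMED_SPEEDUP = 10.0   # "ten times" a Rubin GPU, per the claim


def implied_baseline_pflops(claimed_pflops, speedup):
    """Throughput of the comparison chip implied by the claimed speedup."""
    return claimed_pflops / speedup


def perf_per_watt_ratio(speedup, power_ratio):
    """Efficiency gain, where power_ratio = new chip power / baseline power."""
    return speedup / power_ratio


baseline = implied_baseline_pflops(CLAIMED_PFLOPS, CLAIMED_SPEEDUP)
same_power_gain = perf_per_watt_ratio(CLAIMED_SPEEDUP, 1.0)
double_power_gain = perf_per_watt_ratio(CLAIMED_SPEEDUP, 2.0)
print(baseline, same_power_gain, double_power_gain)
```

The efficiency gain equals the speedup only if power draw is truly equal; if the optical chip drew twice the power, the per-watt advantage would halve, which is why measured wattage matters as much as peak throughput.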

Perspectives and Impact

The potential disruption posed by optical computing, as proposed by Neurophos, centers on the fundamental speed and efficiency limitations of electronic interconnects and semiconductor switching. If light-based processing can deliver high-throughput, low-latency computation with favorable power characteristics, it could redefine how data centers architect accelerators for AI workloads. The implications extend beyond raw performance figures. Energy efficiency is a primary driver in modern data-center design due to recurring operating expenses and cooling requirements. A tenfold improvement in compute-per-watt would not only reduce energy bills but could also enable more compact racks, denser micro data centers, or expanded capabilities at the edge.

However, several impact considerations deserve emphasis:

– Economic viability: The total cost of ownership will hinge on manufacturing yields, material costs, packaging complexity, and the need for specialized tooling or maintenance.

– Ecosystem maturity: Software tools, libraries, and developer familiarity are as important as hardware performance. A lack of widely adopted tooling can impede adoption even when hardware is powerful.

– Reliability and lifecycle: Data centers demand reliable hardware with predictable performance over years. Optical chips must demonstrate stable operation across temperature variations, mechanical stress, and potential degradation of optical components.

– Security and cryptography: New hardware paradigms may introduce novel threat models. Understanding how optical accelerators handle cryptographic workloads and secure enclaves will be critical.

– Competitive landscape: Even if optical accelerators prove feasible, competing teams will likely pursue alternative approaches (e.g., advanced electronic architectures, AI-specific accelerators, or specialized memory technologies). The market’s consensus will emerge only after multiple validating products reach scale.

If Neurophos achieves milestone demonstrations that corroborate its claims, the company could catalyze collaboration opportunities with hyperscalers, semiconductor foundries, or academic institutions. Partnerships could accelerate joint development, standardization of interfaces, and the creation of testbeds that compare photonic accelerators to silicon-based options under real workloads.

The long-term trajectory for optical computing remains uncertain but promising. The industry has invested in photonic interconnects and optical communication for data transfer for years, and extending photonics into computation could leverage existing expertise in light-based hardware. The path forward will require sustained research investment, rigorous experimentation, and transparent reporting.

Key Takeaways

Main Points:

– Neurophos proposes an optical processing unit capable of up to 470 petaFLOPS FP4/INT4 with power similar to Nvidia Rubin GPUs.

– The claim suggests potential tenfold performance gains through photonic computation, addressing AI workload demands.

– Realizing these gains will require overcoming significant scientific, manufacturing, and software ecosystem challenges.

Areas of Concern:

– Programmability, accuracy, and reliability of optical compute at scale.

– Manufacturing scalability, costs, and integration with existing data-center infrastructure.

– Dependence on new software stacks and compiler support for widespread adoption.

Summary and Recommendations

Neurophos’ claim of a light-based chip delivering up to 470 petaFLOPS in FP4/INT4 with comparable power to current high-end electronic GPUs presents an ambitious and potentially transformative direction for AI hardware. The allure of significantly higher compute density and energy efficiency is substantial, given the escalating demands of AI training and inference. However, the journey from concept to reality is complex and fraught with risk. Optical computing must demonstrate programmable, reliable performance across diverse workloads, efficient and scalable manufacturing, and strong support within software ecosystems before it can meaningfully compete in data-center environments.

Stakeholders should adopt a cautious yet proactive stance:

– Track Neurophos’ independent verifications, testbed results, and third-party benchmarks to assess real-world performance and reliability.

– Seek strategic partnerships with research institutions, foundries, and hardware accelerator developers to validate the technology under varied workloads.

– Monitor developments in packaging, thermal management, and fault tolerance specific to photonic architectures, as these affect practical deployment.

– Consider potential software ecosystem implications, including new compilers, libraries, and interoperability with existing AI frameworks.

If Neurophos can deliver on iterative milestones with transparent reporting and verifiable results, optical computing could join the broader set of approaches aimed at extending AI hardware scaling. Until such milestones are evidenced, the technology remains an intriguing but unproven approach to redefining compute performance per watt in the era of increasingly demanding AI workloads.

References

- Original: techspot.com
