TLDR

• Core Points: Microsoft has introduced Maia 200, a 750W AI inference accelerator that the company says delivers dramatic performance gains over rival chips and that is already deployed in select U.S. Azure data centers.

• Main Content: Maia 200 targets high-throughput AI inference workloads, positioning Microsoft to compete with Nvidia-led ecosystems and Amazon’s AI infrastructure offerings.

• Key Insights: The 750W power envelope signals a focus on maximum performance at scale but raises questions about energy efficiency, cooling, and cost.

• Considerations: Deployment feasibility across data centers, supplier and supply-chain readiness, and long-term software ecosystem support are critical.

• Recommended Actions: Stakeholders should monitor performance benchmarks, total cost of ownership, and integration with Azure AI tooling; assess energy and cooling implications.

Content Overview

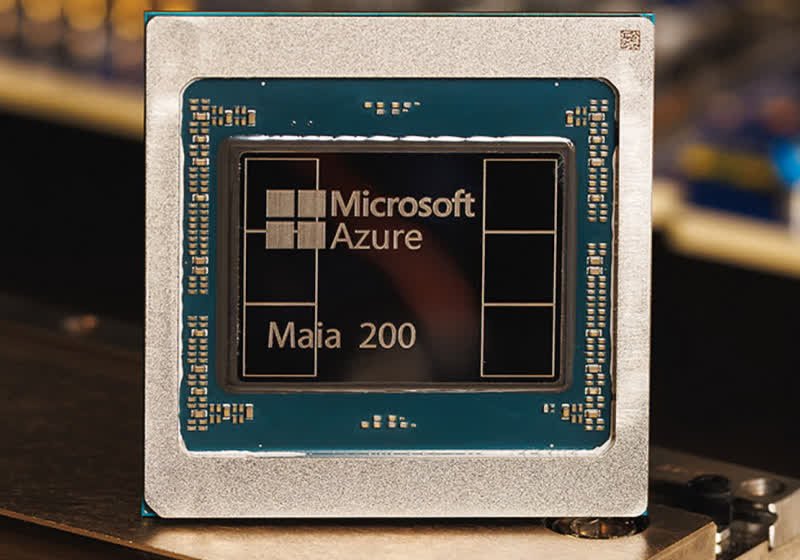

Microsoft has announced Maia 200, a new AI accelerator designed specifically for inference workloads. Built around a 750-watt power envelope, Maia 200 targets high-throughput artificial intelligence tasks, ranging from natural language processing to computer vision, where latency and throughput at scale are critical. The company asserts that Maia 200 can deliver dramatic performance improvements for AI applications, particularly when deployed at scale in data centers. According to Microsoft, Maia 200 is already running in select United States data centers on its Azure cloud platform, which suggests a controlled, early-access phase to validate performance, reliability, and operational behavior in real-world environments.

The announcement positions Maia 200 in a competitive AI hardware landscape where Nvidia has long dominated the accelerator market, supported by a broad software ecosystem and established partnerships. Microsoft’s move also comes amid Amazon’s growing AI infrastructure offerings, including its own accelerators and cloud services. By introducing a dedicated inference-focused chip, Microsoft signals its intent to scale AI workloads efficiently within Azure, potentially enabling new pricing models, performance tiers, and user experiences for enterprise AI workloads. The 750W specification reflects a design choice that prioritizes peak performance and throughput, which in turn shapes thermal design, cooling infrastructure, and overall data center planning.

While Microsoft has shared high-level performance claims, many questions remain about Maia 200’s real-world capabilities, including inference latency, throughput under mixed workloads, memory bandwidth, and energy efficiency at scale. These factors determine total cost of ownership and the practicality of broad deployment across data centers. The broader industry trend points toward purpose-built AI accelerators, with manufacturers balancing raw compute power against efficiency, software support, and integration with established cloud ecosystems. As Maia 200 moves from limited deployments to broader availability, stakeholders will look for benchmark results, a maturing software ecosystem, and integration with Azure’s AI tooling and services for model deployment, optimization, and management.

This article provides an overview of Maia 200, situates it within the competitive AI accelerator landscape, and examines the potential implications for Microsoft, Nvidia, Amazon, and enterprise AI users. It considers what the 750W design could mean for performance, energy consumption, and data center requirements, and weighs the broader question of hardware-led strategies in a market where software ecosystems and developer tools play a pivotal role in overall success.

In-Depth Analysis

Maia 200 stands out primarily for its stated 750W power envelope, a budget that implies an aggressive performance target. In inference workloads, where latency and throughput are critical, a larger power budget can translate into more compute units, faster tensor operations, and greater parallelism. For enterprise workloads that run large language models, vision systems, and recommendation engines, more parallel compute and higher memory bandwidth can yield lower latency per request and higher batch throughput. Microsoft’s claim of “dramatic” improvements suggests that Maia 200 may combine several architectural techniques, such as specialized tensor units, advanced memory hierarchies, and close coupling with high-bandwidth memory (HBM) or other stacked memory technologies.

The introduction of Maia 200 is set against Nvidia’s dominance in the AI accelerator space, particularly with products designed for both training and inference, such as the H100 and newer generations. Nvidia’s software ecosystem, comprising CUDA, cuDNN, TensorRT, and a broad array of developer tools, forms a substantial moat that encourages customers to standardize on Nvidia hardware for AI workloads. Maia 200 appears positioned to complement Azure’s existing AI tools, model deployment pipelines, and optimization frameworks, potentially offering tight integration with Azure Machine Learning, Azure AI Studio, and cloud-native services for model serving, monitoring, and governance.

In parallel, Amazon, another major cloud player, has been expanding its AI accelerator offerings, including chips and services designed to serve inference workloads in its cloud infrastructure. The three-way competition among Microsoft, Nvidia, and Amazon spans several strategic axes: raw compute performance, energy efficiency, software and toolchain maturity, and data-center economics. A 750W accelerator suggests a design for large-scale data centers whose power and cooling infrastructure is already built to handle significant loads. For enterprises evaluating cloud options, Maia 200 represents a potential alternative that could influence the pricing, performance, and availability of large-scale inference within Azure.

However, several practical questions arise. First, what is the actual performance profile of Maia 200 across representative inference workloads? Customers often require concrete metrics, such as latency at given batch sizes, model-variant performance, memory bandwidth, and efficiency curves under sustained operation. Second, how does Maia 200 integrate with software toolchains? The success of a hardware accelerator often depends not only on raw compute but on the maturity and breadth of the software ecosystem, including compilers, optimizers, and deployment tools. Third, what is the total cost of ownership when considering energy costs, cooling needs, server chassis, and maintenance? A 750W device could impose higher energy consumption and thermal management demands, which must be justified by performance gains in real-world scenarios.
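The first two questions come down to how raw benchmark runs are summarized. As a minimal sketch, assuming a benchmark harness that records per-request latencies at a fixed batch size plus the wall-clock duration of the run, the code below reduces a run to median (p50) latency, tail (p99) latency, and throughput. The names `summarize` and `BenchmarkSummary`, the nearest-rank percentile method, and the sample values are illustrative assumptions; they are not Azure tooling and do not represent Maia 200 measurements.

```python
import math
from dataclasses import dataclass


@dataclass
class BenchmarkSummary:
    """Hypothetical per-run summary: one record per (accelerator, model, batch size)."""
    batch_size: int
    p50_ms: float
    p99_ms: float
    throughput_rps: float  # completed requests per second of wall-clock time


def percentile(sorted_values: list, pct: float) -> float:
    """Nearest-rank percentile of an already-sorted, non-empty list."""
    if not sorted_values:
        raise ValueError("no latency samples")
    rank = math.ceil(pct / 100 * len(sorted_values))
    return sorted_values[max(rank, 1) - 1]


def summarize(batch_size: int, latencies_ms: list, wall_clock_s: float) -> BenchmarkSummary:
    """Reduce raw per-request latencies from one benchmark run to headline metrics."""
    if wall_clock_s <= 0:
        raise ValueError("wall-clock duration must be positive")
    ordered = sorted(latencies_ms)
    return BenchmarkSummary(
        batch_size=batch_size,
        p50_ms=percentile(ordered, 50),
        p99_ms=percentile(ordered, 99),
        throughput_rps=len(latencies_ms) / wall_clock_s,
    )


# Made-up samples: four requests completed in 0.05 s at batch size 8.
summary = summarize(8, [12.0, 15.0, 11.0, 40.0], 0.05)
print(summary)
```

Running the same summary at several batch sizes on each accelerator under comparison produces the latency-versus-throughput curve that a single headline number hides. Reporting p99 alongside p50 matters because tail latency, not the median, usually determines whether an interactive service meets its targets.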

From a strategic perspective, Maia 200’s deployment in select Azure data centers could serve multiple purposes. It provides Microsoft with hands-on validation and feedback from enterprise customers, helps refine manufacturing and supply chain processes, and demonstrates the company’s capability to deliver end-to-end AI infrastructure. It also signals a broader shift in cloud strategy: rather than solely relying on third-party accelerators, Microsoft appears to be pursuing more vertically integrated hardware solutions that closely align with its software stack and services. If Maia 200 scales successfully, Microsoft could offer differentiated performance tiers or price-to-performance advantages for workloads that map well to the accelerator’s architecture.

Yet the path forward involves navigating supply chain realities, manufacturing margins, and global energy considerations. Producing high-end accelerators at a scale sufficient to challenge Nvidia requires secure fabrication capacity, dependable partnerships with silicon and packaging vendors, and a reliable component supply, all of which affect time-to-market, pricing, and reliability. Customers will also assess a vendor’s ability to support a broad set of models and frameworks, including transformer architectures, vision transformers, and other emerging model designs.

The broader AI hardware market is also evolving. Inference workloads are increasingly optimized through software techniques such as model quantization, pruning, and dynamic precision, which can improve performance on existing hardware while reducing power consumption. If Maia 200 emphasizes architectural features that enable aggressive quantization or efficient mixed-precision processing, it could achieve favorable performance-per-watt figures despite its higher raw power draw. However, quantization and optimization require robust software support, including model converters, calibration pipelines, and deployment runtimes, so Microsoft will likely need to demonstrate strong results across a variety of models and use cases to substantiate its claims.
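To make the calibration step concrete, here is a minimal sketch of one common scheme, symmetric per-tensor int8 quantization with max-abs calibration. It is a textbook technique chosen for illustration, not a description of how Maia 200 or Azure tooling quantizes models; the function names and sample weights are hypothetical.

```python
def int_range(num_bits: int = 8) -> int:
    """Largest magnitude in a symmetric signed range, e.g. 127 for int8."""
    return 2 ** (num_bits - 1) - 1


def calibrate_scale(calibration_values: list, num_bits: int = 8) -> float:
    """Max-abs calibration: the largest observed magnitude maps to the top integer level."""
    max_abs = max(abs(v) for v in calibration_values)
    if max_abs == 0:
        return 1.0  # all-zero tensor: any positive scale represents it exactly
    return max_abs / int_range(num_bits)


def quantize(values: list, scale: float, num_bits: int = 8) -> list:
    """Round to the nearest integer level and clamp values outside the calibrated range."""
    qmax = int_range(num_bits)
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]


def dequantize(q_values: list, scale: float) -> list:
    """Map integer levels back to approximate floating-point values."""
    return [q * scale for q in q_values]


weights = [0.5, -1.27, 0.01, 1.0]  # hypothetical layer weights
scale = calibrate_scale(weights)
q = quantize(weights, scale)
restored = dequantize(q, scale)
print(q)
print(max(abs(w - r) for w, r in zip(weights, restored)))  # bounded by roughly scale / 2 for in-range values
```

Each weight then occupies one byte instead of four (for float32), which reduces memory traffic. The price is a bounded rounding error plus clipping of any value outside the calibrated range, which is why calibration data must be representative of what the model sees in production.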

The fact that Maia 200 is already deployed in select Azure data centers implies a staged rollout aimed at risk mitigation and real-world validation. Early deployments often inform engineering improvements, software updates, and operational best practices that can be scaled across the cloud. Customers who gain access to Maia 200 through Azure services may be able to tailor configurations for specific workloads, experiment with model serving architectures, and evaluate energy and cost trade-offs. In parallel, Microsoft must ensure that security, reliability, and governance requirements align with enterprise expectations, particularly for sensitive workloads and regulated industries.

Another dimension to consider is the potential synergy with Microsoft’s broader AI ambitions, including Copilot experiences, enterprise-grade AI services, and AI ethics and governance frameworks. A high-performance inference accelerator could enable more sophisticated real-time AI capabilities for enterprise applications running on Azure. This aligns with growing enterprise demand for robust, scalable AI infrastructure that can support large language models and multimodal AI workloads without compromising reliability or compliance standards.

In sum, Maia 200 represents a bold move by Microsoft to diversify its hardware footprint and accelerate its AI strategy within Azure. The 750W design underscores a commitment to high performance at scale, while the claimed 3× gains over Amazon’s offerings reflect a competitive stance in the cloud AI arena. As with any new hardware entering production at scale, the ultimate measure of Maia 200 will be demonstrated through independent benchmarks, customer results, and the maturity of the accompanying software ecosystem. If Microsoft can translate architectural advantages into tangible, repeatable performance improvements across a broad set of workloads, Maia 200 could become a meaningful lever in Azure’s AI services, shaping the competitive dynamics of cloud AI in the years ahead.

*Image source: Unsplash*

Perspectives and Impact

The introduction of Maia 200 arrives at a critical juncture in the AI hardware market. The ongoing contest among cloud providers to offer increasingly powerful AI inference services is reshaping the economics of deploying large-scale models. Nvidia’s GPUs have long served as the cornerstone of many AI deployments due to their performance, interoperability, and extensive software stack. However, industry players recognize that optimizing for inference is not solely about raw throughput; it involves end-to-end efficiency, including memory bandwidth utilization, minimal data movement, and latency guarantees.

Microsoft’s Maia 200 could be viewed as a strategic move to optimize the entire inference pipeline for Azure users. By coordinating hardware with software tooling and cloud services, Microsoft can present a tightly integrated stack that offers predictable performance, robust security, and easier operational management. For enterprises, this translates into potential advantages in terms of model deployment speed, service reliability, and total cost of ownership when leveraging Azure AI services.

The reported 750W power envelope suggests that Maia 200 emphasizes peak performance within data centers designed to handle significant thermal loads. This design choice aligns with the realities of large-scale inference workloads, where servers often run continuously with substantial cooling requirements. The energy implications are non-trivial: data center operators must account for power delivery, cooling capacity, and overall facility efficiency. If Maia 200 proves effective at delivering higher throughput per watt when properly cooled and optimized, it could influence data-center design decisions and power infrastructure investments.
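The scale of those energy implications can be estimated with simple arithmetic. If one accelerator drew its full 750 W continuously for a year, it would consume 750 W × 8,760 h = 6,570 kWh at the chip alone, before cooling and power-delivery losses. The sketch below extends that calculation with two assumed inputs, an average utilization factor and the facility’s power usage effectiveness (PUE), and also computes requests served per watt, the metric that decides whether a higher power draw pays off. All inputs, including the throughput figures for the two hypothetical devices, are placeholders rather than published Maia 200, Nvidia, Amazon, or Azure numbers.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; ignores leap years


def annual_facility_energy_kwh(device_watts: float, utilization: float, pue: float) -> float:
    """Grid energy per device per year, with cooling and power-delivery overhead folded in via PUE."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    chip_kwh = device_watts * utilization * HOURS_PER_YEAR / 1000
    return chip_kwh * pue


def requests_per_watt(throughput_rps: float, device_watts: float) -> float:
    """Throughput per watt of device power; higher means more energy-efficient serving."""
    return throughput_rps / device_watts


# Hypothetical inputs for illustration only.
print(annual_facility_energy_kwh(750, utilization=1.0, pue=1.0))  # 6570.0
print(annual_facility_energy_kwh(750, utilization=0.7, pue=1.3))
print(requests_per_watt(3000, 750), requests_per_watt(1200, 400))  # 4.0 3.0
```

In this made-up comparison the 750 W device serves more requests per watt than the 400 W one, which is the scenario in which a higher power envelope is justified. If its throughput advantage were smaller than its power premium, the lower-power part would win on energy per request.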

From a market dynamics perspective, the competition among cloud providers for AI acceleration capabilities is likely to accelerate innovation in both hardware and software. The presence of multiple accelerators within the cloud ecosystem may prompt developers to optimize models to run efficiently across different architectures, leading to a more diverse set of deployment options. This could benefit enterprises seeking flexibility in choosing the cloud provider that best aligns with their performance, governance, and cost requirements.

Nevertheless, broad market adoption will require more than a high-performance device. The ecosystem around Maia 200—comprising drivers, compilers, optimization libraries, model zoos, and deployment runtimes—will determine its success. Microsoft’s ability to attract developers and enterprise customers to a hardware accelerator depends on the ease with which teams can port and optimize models, monitor performance, and manage operational costs. If the software tooling and ecosystem maturation lag behind hardware capabilities, customers may be hesitant to migrate from established Nvidia-based workflows.

Future implications for the AI hardware landscape include potential collaborations with semiconductor suppliers, memory manufacturers, and module integrators to achieve scalable production and cost efficiencies. Additionally, as AI models continue to grow in size and complexity, the demand for high-bandwidth memory and advanced interconnects will persist, driving ongoing investments in memory technology and packaging innovations. The success of Maia 200 will also influence Microsoft’s broader hardware strategy, potentially affecting its approach to supply chain diversification, partner ecosystems, and the balance between cloud-native services and on-premises hardware options.

In terms of industry impact, Maia 200’s introduction could prompt Nvidia and other players to refine their own inference-focused offerings, accelerate development cycles, and enhance software ecosystems to meet evolving customer needs. The competitive dynamics may also encourage cloud providers to emphasize energy efficiency and total cost of ownership as differentiators, not just raw performance. Overall, Maia 200 contributes to a broader narrative: the AI race is increasingly a contest not only of computational horsepower but also of integrated platforms that enable rapid model deployment, scalable inference, and cost-effective operation at scale.

Future trajectories for Maia 200 involve expanding its deployment footprint beyond select U.S. data centers, validating performance across diverse workloads, and integrating with Azure’s governance and security features. If successful, Microsoft could extend Maia 200’s advantages to international markets and potentially offer differentiated pricing or service levels that reflect the accelerator’s capabilities. The broader AI industry will be watching closely to see whether Maia 200 can deliver on its promises in real-world usage, how it stands up to competition, and what this means for developers building AI-powered applications on Azure.

Key Takeaways

Main Points:

– Maia 200 is a 750W AI inference accelerator announced by Microsoft, designed for high-throughput workloads and integrated with Azure.

– The company claims dramatic improvements and positions Maia 200 as a challenger to Nvidia-dominated ecosystems and Amazon’s AI offerings.

– Early deployments in select U.S. data centers indicate a controlled rollout, focusing on validation and integration with Azure services.

Areas of Concern:

– Real-world performance benchmarks across representative models and workloads are not yet published.

– The high power envelope raises questions about energy efficiency, cooling, and data-center economics.

– Software ecosystem maturity, including compilers, optimizers, and deployment tooling, will be critical to Maia 200’s adoption.

Summary and Recommendations

Maia 200 represents Microsoft’s strategic push to augment its Azure cloud with a purpose-built AI inference accelerator. The 750W design signals a focus on high-end throughput at scale, potentially enabling faster inference for large models and more complex workloads within Azure. Microsoft’s claim of threefold performance gains over Amazon’s offerings underscores its competitive positioning but requires independent verification through benchmarks and real-world deployments. Early deployments in select U.S. data centers suggest a cautious, validated approach to integration with existing Azure services, model deployment pipelines, and governance frameworks.

For enterprise customers and cloud enthusiasts, Maia 200 could offer a compelling option for scalable AI inference if the hardware proves to deliver consistent, measurable performance across diverse workloads while maintaining acceptable total cost of ownership. Key considerations include energy and cooling requirements, software ecosystem maturity, and the ability to port existing models and workflows to the Maia 200 architecture. Prospective buyers should seek detailed performance benchmarks, total cost of ownership analyses, and concrete migration and support plans from Microsoft.

If Maia 200 meets its stated aims, it could influence cloud AI strategy broadly, encouraging more vertically integrated hardware-software solutions within major cloud platforms. This could push Nvidia and other competitors to accelerate improvements in their own inference-focused offerings and to bolster software tooling to preserve developer productivity and ecosystem breadth. For researchers and practitioners, Maia 200’s trajectory will be worth monitoring as the industry continues to explore the balance between raw computational power, energy efficiency, software maturity, and the economics of large-scale AI deployment.

References

- Original: https://www.techspot.com/news/111072-microsoft-built-750w-ai-chip-challenge-nvidia-dominance.html

- Additional context on AI accelerators and cloud AI competition:
  - https://www.anandtech.com/
  - https://www.techradar.com/news/ai-hardware
  - https://azure.microsoft.com/en-us/services/machine-learning/
