TLDR

• Core Points: Microsoft is reportedly pursuing an exclusive supply deal with SK Hynix for HBM3e high-bandwidth memory to power its Maia AI chips.

• Main Content: Reports from Korea indicate SK Hynix may become the sole supplier of HBM3e memory for Microsoft’s next-generation Maia AI silicon, signaling a strategic move in memory partnerships.

• Key Insights: An exclusive deal could streamline supply and performance for Maia but may raise antitrust and pricing considerations; the feasibility hinges on HBM3e production scale and pricing.

• Considerations: Market demand for AI accelerators, potential supply constraints, and long-term implications for competitors and customers.

• Recommended Actions: Monitor official confirmations, analyze contract terms, and assess impact on memory markets and pricing as Maia development progresses.

Content Overview

Microsoft’s Maia project is a flagship effort to advance AI accelerator technology for next-generation workloads. While details remain closely held, industry coverage suggests that the company is exploring strategic partnerships to secure the memory technology that underpins high-performance AI inference and training. Among the reported arrangements is an exclusive supply deal with SK Hynix, a leading manufacturer of dynamic random-access memory (DRAM) and NAND flash memory. If confirmed, the deal would make SK Hynix the sole provider of HBM3e for Maia. HBM3e is a generation of high-bandwidth memory (HBM) designed to deliver higher data throughput at better power efficiency, both critical for AI accelerators deployed at data center scale. These memory considerations are central to Maia’s architecture, given the extreme bandwidth demands of advanced neural networks and the need to minimize latency in memory-bound operations.

The broader context for this development includes the ongoing competition to supply AI infrastructure components, where hardware partners seek stable, long-term customer relationships and favorable pricing. HBM3e is an extension of HBM3 that memory vendors already ship. It raises bandwidth per stack mainly through higher per-pin data rates over the same 1024-bit interface, alongside larger stack capacities and improvements in power efficiency. The exclusivity angle introduces additional stakes: while it can ensure a reliable supply chain for Maia, it may constrain Microsoft’s flexibility to switch providers or negotiate alternative terms should market conditions shift.
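To make "bandwidth per stack" concrete, the following minimal sketch computes peak per-stack bandwidth as interface width times per-pin data rate. The function name is hypothetical, and the pin rates are illustrative assumptions: 6.4 Gb/s is the HBM3 headline rate, while 9.6 Gb/s stands in for the range vendors quote for HBM3e. None of these figures are confirmed Maia or SK Hynix contract specifications.

```python
def peak_stack_bandwidth_gbs(interface_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one HBM stack in GB/s (decimal gigabytes).

    interface_bits: width of the stack's data interface in bits.
    pin_rate_gbps:  data rate per pin in gigabits per second.
    """
    # bits per second across the whole interface, divided by 8 bits per byte
    return interface_bits * pin_rate_gbps / 8.0


# Illustrative inputs only (assumptions, not Maia specifications).
hbm3 = peak_stack_bandwidth_gbs(interface_bits=1024, pin_rate_gbps=6.4)
hbm3e = peak_stack_bandwidth_gbs(interface_bits=1024, pin_rate_gbps=9.6)

print(f"HBM3:   {hbm3:.1f} GB/s per stack")
print(f"HBM3e:  {hbm3e:.1f} GB/s per stack")
print(f"Uplift: {hbm3e / hbm3:.2f}x")
```

Under these assumptions the uplift comes entirely from the pin rate, since the interface width is the same in both cases. An accelerator's aggregate bandwidth then scales with the number of stacks placed around the compute die.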

This article synthesizes available reporting, outlines the potential implications of an exclusive HBM3e deal, and considers how such a partnership could influence Maia’s viability, the memory market, and the broader AI hardware ecosystem. It is important to note that until Microsoft or SK Hynix provides official confirmation, details regarding contract scope, duration, pricing, and technical specifications remain speculative. The analysis below aims to contextualize the reported development within current industry dynamics and to illuminate potential outcomes for stakeholders across the AI, cloud, and semiconductor landscapes.

In-Depth Analysis

The AI hardware arms race has intensified as hyperscalers invest heavily in specialized accelerators designed for large-scale inference and training. Central to this strategy are not only the processing units themselves but also the supporting memory architecture that enables rapid data movement. High-bandwidth memory (HBM) generations such as HBM2e, HBM3, and HBM3e are particularly important for AI workloads that feature large model sizes and high degrees of parallelism. HBM stacks sit close to the compute dies, typically connected through a silicon interposer, and deliver substantially more bandwidth than conventional off-package memory. In AI accelerators, memory bandwidth often becomes the bottleneck, and any improvement in memory technology can translate into meaningful gains in throughput and efficiency.
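One common way to reason about when memory bandwidth is the bottleneck is the roofline model: attainable throughput is the smaller of peak compute and bandwidth multiplied by arithmetic intensity (FLOPs performed per byte moved). The sketch below is a minimal illustration of that model. The function names and the accelerator figures (400 TFLOP/s peak, 4.8 TB/s of memory bandwidth) are hypothetical placeholders, not reported Maia numbers.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tbs: float,
                      intensity_flop_per_byte: float) -> float:
    """Roofline bound: min(compute roof, bandwidth * arithmetic intensity).

    TB/s multiplied by FLOP/byte gives TFLOP/s, so the units line up.
    """
    return min(peak_tflops, bandwidth_tbs * intensity_flop_per_byte)


def is_memory_bound(peak_tflops: float, bandwidth_tbs: float,
                    intensity_flop_per_byte: float) -> bool:
    """True when the bandwidth roof, not the compute roof, limits throughput."""
    return bandwidth_tbs * intensity_flop_per_byte < peak_tflops


# Hypothetical accelerator (assumed values for illustration only).
PEAK_TFLOPS = 400.0
BANDWIDTH_TBS = 4.8

for intensity in (1.0, 20.0, 200.0):
    bound = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TBS, intensity)
    regime = "memory-bound" if is_memory_bound(PEAK_TFLOPS, BANDWIDTH_TBS, intensity) else "compute-bound"
    print(f"intensity {intensity:>5.1f} FLOP/byte -> {bound:6.1f} TFLOP/s ({regime})")
```

In this model, a kernel below the ridge point (peak compute divided by bandwidth) gains throughput in direct proportion to any bandwidth increase, which is why a faster memory generation matters most for low-intensity workloads.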

If Microsoft’s Maia project pursues an exclusive arrangement with SK Hynix for HBM3e, several implications emerge. First, exclusivity could stabilize the supply chain for Maia, reducing the risk of memory shortages that could otherwise hamper development timelines or production ramp. For a project at the cutting edge of AI silicon design, reliable access to a specific memory technology is valuable, especially given the tightened supply cycles seen in memory markets over the past few years. SK Hynix, already a major supplier of DRAM and NAND, has the scale and manufacturing capabilities to produce HBM stacks at the volumes required by large AI initiatives. An exclusive deal would likely reflect a multi-quarter or multi-year supply commitment, potentially coupled with co-development or joint optimization efforts aimed at maximizing Maia’s performance characteristics.

Second, the exclusivity could influence pricing dynamics. Exclusive supply arrangements can provide the supplier with price stability and a degree of leverage in negotiations. For Microsoft, the benefit would be predictable access to a critical component. However, exclusivity might limit Microsoft’s flexibility to re-negotiate terms or pivot to alternative memory technologies if performance targets or market conditions shift. In addition, such an arrangement could impact other memory customers by concentrating HBM3e supply and potentially affecting pricing or allocation in the broader market. Competitors and ecosystem partners would be watching carefully to understand whether exclusivity would set a precedent for future AI hardware deals.

Third, technical and logistical feasibility is a factor. HBM3e, as an evolution of HBM3, would need to meet Maia’s memory subsystem requirements for bandwidth, latency, power efficiency, and thermal management. Production of HBM3e stacks must also scale to the volumes required by Maia’s production timeline, which could extend across multiple generations of silicon. Microsoft’s optimization efforts would likely also consider memory compression, data reuse, and on-die compute integration to maximize overall system-level performance. In practice, the collaboration with SK Hynix could go beyond supply terms to include joint work on packaging, memory die engineering, and interposer design to optimize Maia’s memory bandwidth.

Market dynamics also play a role. The AI hardware sector includes several major players actively shaping memory supply chains. SK Hynix faces competition from other memory vendors that may pursue similar deals with alternative AI chipmakers or cloud providers. An exclusive partnership with Microsoft could create a notable discontinuity in the competitive landscape, potentially influencing how other AI accelerators are designed—particularly those targeting hyperscale data centers with extreme bandwidth requirements. It remains to be seen whether SK Hynix would formalize a similar exclusive arrangement with other players or if Microsoft’s Maia project would be sufficiently distinct to merit a unique supply arrangement.

From a strategic perspective, Microsoft’s Maia efforts are part of a broader push to internalize critical components of AI infrastructure where possible. In some cases, major tech firms pursue in-house or tightly controlled supply chains as a hedge against volatility in external markets. Exclusive memory deals can simplify supplier management and align resource planning with product roadmaps. However, this approach also introduces dependencies on a single supplier’s production schedule, component yields, and pricing behavior. For SK Hynix, securing an exclusive memory contract with a prominent AI platform like Maia would bolster revenue visibility and could catalyze further investments in memory research and capacity.

It is also important to situate these reports within the broader context of memory market conditions. The memory sector has historically experienced cyclical fluctuations driven by demand from data centers, consumer electronics, and enterprise storage applications. In recent years, the AI boom has raised demand specifically for high-bandwidth memory, drawing attention to the supply constraints that can arise when capacity does not expand quickly enough to meet demand. If Maia’s reported exclusive arrangement with SK Hynix proceeds, it could be a signal of the market’s prioritization of HBM3e-enabled AI accelerators and a warning flare for other memory buyers who may need to adjust their procurement strategies. Analysts will be monitoring production schedules, lead times, and capacity expansions at SK Hynix to gauge the scale and durability of such an exclusive deal.

Given the lack of official confirmation, the reported arrangement between Microsoft and SK Hynix remains speculative in many respects. The potential exclusivity could be limited to certain memory tiers or form factors, such as the HBM3e stacks integrated into Maia’s compute modules, while other memory types or ancillary components continue to be sourced more broadly. The precise terms, including contract length, volume commitments, price concessions, and collaboration on architectural optimization, will determine the practical impact of the proposed deal on Maia’s performance and cost structure. Even with an exclusive arrangement, Microsoft would still need to navigate the broader supply ecosystem to address other dependencies, including packaging, interconnects, and the software optimization that unlocks memory bandwidth in the Maia silicon.

Beyond the technical and commercial considerations, regulatory and geopolitical factors cannot be ignored in discussions of strategic supplier relationships. Large technology deals spanning multiple countries and supply chains attract scrutiny from regulators concerned about competition, national security, and critical infrastructure resilience. Any announcement of an exclusive memory deal may prompt responses from competitors or policymakers who seek to ensure that such arrangements do not unduly distort markets or restrict access to essential AI hardware capabilities.

In sum, the prospect of an exclusive SK Hynix HBM3e supply for Microsoft’s Maia AI chip initiative would be a significant milestone in how tech giants secure critical hardware for next-generation AI workloads. While reports from Korean sources point to such a partnership, the absence of formal confirmation leaves questions about scope, duration, and impact unresolved. If realized, the deal could deliver predictable memory supply and optimized performance for Maia, while also shaping the competitive and regulatory landscape surrounding AI accelerators and memory markets. Stakeholders across Microsoft, SK Hynix, AI developers, cloud operators, and hardware suppliers will be watching for official news, contract details, and subsequent demonstrations of Maia’s capabilities as it moves through development milestones.

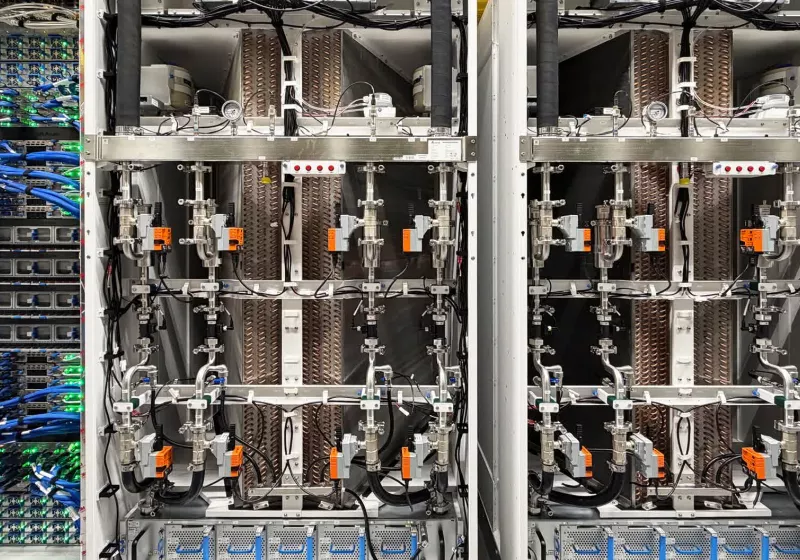

*Image source: Unsplash*

Perspectives and Impact

The potential exclusive HBM3e supply agreement for Maia touches on several broader trajectories in AI hardware and memory ecosystems. First, it underscores the continuing strategic importance of memory bandwidth in enabling scalable AI performance. As models grow larger and data pipelines become more complex, the demand for memory that can move data at high speeds with minimal energy cost remains a critical bottleneck. An exclusive arrangement with a leading memory supplier could help Microsoft stabilize a key input and push the industry toward more aggressive optimization of memory hierarchies, including advanced interconnects and memory compression techniques.

Second, the deal would likely influence cost structures for Maia. Exclusive sourcing can enable price concessions or favorable terms that reflect the supplier’s confidence in long-term demand. If SK Hynix is confident in sustained Maia volumes, it could justify investments in capacity and process improvements that bring down per-bit costs, potentially translating into more favorable pricing for Microsoft over time. Conversely, the lack of supply competition could expose Microsoft to higher costs if demand surges or if its memory requirements change as AI workloads evolve. The balance between price stability and flexibility will be a central consideration for Microsoft’s procurement and finance teams.

Third, the arrangement could affect the broader AI hardware ecosystem. Competitors may respond by accelerating their own memory strategies, whether through exclusive deals with other vendors, alternative memory architectures, or investments in memory-centric acceleration techniques. For memory suppliers, an exclusive deal with a major AI program could signal a preference for deeper collaboration with cloud and hyperscale customers, encouraging further specialization in high-bandwidth memory products. This dynamic could influence pricing strategies, capacity planning, and the rate at which new memory technologies reach the market.

Fourth, there are implications for software and systems architecture. In AI accelerators, memory performance is intimately tied to software stacks, compilers, and runtime optimizations. If Maia’s hardware configuration hinges on SK Hynix HBM3e, software teams will need to tailor memory-aware optimizations to exploit the specific characteristics of those memory stacks. This could involve enhancements to memory-aware scheduling, data placement policies, and model compression techniques to maximize effective bandwidth. The collaboration between silicon, memory, and software ecosystems often determines the ultimate gains in AI throughput and energy efficiency.
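A rough way to see why compression raises effective bandwidth: in a memory-bound decode step, every weight has to be read from HBM at least once per generated token, so the time per token cannot be lower than weight bytes divided by memory bandwidth. The sketch below applies that lower bound at different weight precisions. The model size (70 billion parameters) and bandwidth (4.8 TB/s) are hypothetical, and the estimate deliberately ignores KV-cache traffic, activations, batching, and compute time.

```python
def min_seconds_per_token(num_params: float, bytes_per_param: float,
                          bandwidth_bytes_per_s: float) -> float:
    """Lower bound on decode time per token when each weight is read once from HBM."""
    return num_params * bytes_per_param / bandwidth_bytes_per_s


# Hypothetical workload and hardware (assumptions for illustration only).
PARAMS = 70e9          # model parameters
BANDWIDTH = 4.8e12     # aggregate HBM bandwidth in bytes per second

for label, width in (("fp16", 2.0), ("int8", 1.0), ("int4", 0.5)):
    t = min_seconds_per_token(PARAMS, width, BANDWIDTH)
    print(f"{label}: at least {t * 1e3:.2f} ms/token, at most {1 / t:.0f} tokens/s")
```

Halving the bytes per weight halves the bound, which has the same effect on this estimate as doubling raw memory bandwidth. That is the sense in which compression and memory-aware data placement complement a faster memory generation.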

Finally, regulatory and governance aspects may come into play as large, strategic partnerships unfold. Jurisdictional scrutiny around competition, national security, and critical infrastructure resilience could shape how such deals are reviewed and approved. Stakeholders should anticipate potential inquiries or conditions from regulators that could influence the terms or execution of exclusive memory arrangements. Transparent disclosures about contract scope, performance targets, and supply contingencies will be important to maintain trust among customers, partners, and policymakers.

Overall, the possibility of an exclusive SK Hynix HBM3e memory supply for Maia highlights the convergence of hardware engineering, supply chain strategy, and software optimization in the race to deliver next-generation AI capabilities. The outcome will depend on official confirmations, the specifics of the contractual arrangement, and the ability of both Microsoft and SK Hynix to align their roadmaps with Maia’s development timeline. As AI workloads continue to demand greater memory bandwidth and efficiency, such partnerships could become more common as leading players seek to de-risk critical inputs while pushing the boundaries of performance.

Key Takeaways

Main Points:

– Reports suggest Microsoft is considering an exclusive HBM3e memory supply deal with SK Hynix for Maia.

– Exclusivity could stabilize memory supply and enable performance optimizations but may limit flexibility.

– The deal’s feasibility hinges on production scale, pricing, and formal confirmation from involved parties.

Areas of Concern:

– Potential antitrust or competition concerns due to exclusive supply dynamics.

– Pricing risk if memory demand remains volatile or if capacity cannot meet Maia’s needs.

– Dependence on a single supplier for a critical component and its impact on future procurement.

Summary and Recommendations

If Microsoft secures an exclusive HBM3e memory deal with SK Hynix for Maia, it would mark a notable strategic alignment between AI silicon design and high-bandwidth memory supply. The potential benefits include streamlined supply planning, room for joint performance optimization, and more predictable cost structures. However, exclusivity introduces dependencies and market dynamics that warrant careful management. For Microsoft, the recommended path involves negotiating clear contract terms that cover supply continuity, price ceilings or floors, and performance milestones tied to Maia’s development phases. It would also be prudent to establish fallback arrangements or escalation paths in case demand surges or production challenges arise.

For SK Hynix, the deal signals strong demand for advanced memory technologies and validates continued investment in high-bandwidth memory solutions. The company should ensure that capacity expansions align with Maia’s roadmap while maintaining flexibility to serve other customers. Transparent communication with the market about capacity, lead times, and pricing would help mitigate regulatory or customer concerns.

For the broader AI hardware community, this potential development emphasizes the importance of memory bandwidth in future AI accelerators. It may prompt further investments in memory technologies, packaging innovations, and software optimizations designed to exploit memory subsystems efficiently. Stakeholders should monitor official announcements for confirmation and details, as these would significantly influence supply chain planning, pricing dynamics, and the competitive landscape.

In conclusion, while not yet officially confirmed, the possibility of an exclusive SK Hynix HBM3e memory supply for Microsoft’s Maia AI chip underscores the strategic centrality of memory in next-generation AI hardware. As Maia progresses, the combination of silicon design, memory technology, and software optimization will determine whether this potential partnership translates into tangible performance and efficiency advantages in real-world AI workloads.

References

- Original: https://www.techspot.com/news/111123-microsoft-maia-ai-chip-ambitions-might-include-exclusive.html

