TLDR

• Core Points: AI agents are expanding from Q&A to autonomous task execution, tool use, API queries, workflow orchestration, cloud interactions, and DevOps automation.

• Main Content: The article argues for a cohesive, repeatable workflow that moves AI agents from a local prototype to production using Docker Compose as the backbone for environment parity, tooling, and deployment orchestration.

• Key Insights: Standardized local-to-prod pipelines reduce risk, improve reproducibility, and accelerate iteration; careful attention to tooling, observability, security, and scalability is essential for production-readiness.

• Considerations: Balancing speed of iteration with robustness, selecting the right services, handling dependencies, monitoring, and access control; potential bottlenecks in scaling agent workloads.

• Recommended Actions: Adopt a unified Docker Compose-based workflow, define clear environment specs, implement robust observability, validate with progressive deployment, and plan for production-grade security and reliability.

Content Overview

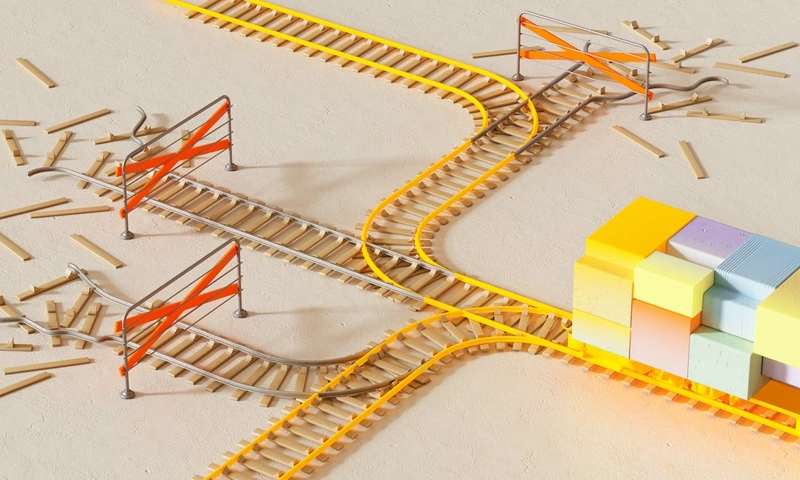

As AI agents evolve beyond simple chat responders, they increasingly function as autonomous software entities capable of reasoning about tasks, invoking external tools, querying APIs, orchestrating complex workflows, and even managing cloud infrastructure. These capabilities unlock powerful new applications across software development, IT operations, data processing, and automation. However, they also introduce a familiar engineering challenge: how to build, run, and ship AI agents reliably—from a local laptop prototype to a scalable production system.

The central argument is that a unified workflow—one that preserves parity between development and production—can dramatically reduce friction and risk. Docker Compose emerges as a practical backbone for this workflow, offering a declarative, reproducible environment across services and stages. By modeling the agent, its tools, and the surrounding infrastructure as compose services, teams can iterate locally with confidence while maintaining a clear path to deployment in production. This approach emphasizes stability, observability, and automation, ensuring that environments, dependencies, and configurations behave consistently across the development lifecycle.

The article outlines how to structure such a workflow, what components to include, and how to address common pitfalls. It stresses the importance of defining explicit service boundaries, versioning tooling, and instituting checks that validate that local prototypes will operate in production. The ultimate goal is to enable AI agents to progress from experimental demonstrations to reliable, scalable deployments without rewriting core infrastructure or re-architecting the application for each stage.

In-Depth Analysis

The proposed approach treats AI agents as first-class software components that require careful engineering practices to reach production readiness. The key idea is to encapsulate everything an AI agent needs—its runtime, tools, data sources, and orchestration logic—within a reproducible Docker Compose configuration. This configuration serves multiple purposes:

- Environment parity: Developers run the same set of services on their laptops as in staging and production, minimizing “it works on my machine” issues.

- Tooling cohesion: Agents, tool wrappers, and adapters (for APIs, databases, cloud services, messaging systems) are modeled as dedicated services with well-defined interfaces.

- Dependency management: Model versions, tool clients, and other dependencies are pinned and declared in the configuration; credentials are referenced by name and injected securely rather than stored alongside it.

- Observability and tracing: Centralized logging, metrics, and tracing enable rapid diagnosis of issues as agents operate across tasks and tools.

- Orchestration and workflow: Agents can coordinate with other services, trigger pipelines, and automate sequences that span multiple systems through a single declarative configuration.

To operationalize this, teams can structure their Compose setup around the following components:

- AI agent service: The runtime that hosts the agent model, prompt templates, memory, and decision logic.

- Tooling services: Wrappers or adapters for external tools the agent may call (e.g., code execution sandboxes, search interfaces, file systems, notebooks, CI/CD triggers).

- API and data services: Mock or real external APIs the agent queries, along with data stores for persistence.

- Cloud and infrastructure services: Short-lived infrastructure components or cloud SDKs that the agent provisions or interacts with.

- Auth and secrets management: Secure handling of credentials, tokens, keys, and access policies integrated with the deployment environment.

- Orchestration layer: A workflow engine or controller that helps coordinate multi-step tasks, retries, and error handling, potentially augmenting the agent’s decision process.
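As a rough illustration of the component list above, a Compose file might map each component to one service. This is a minimal sketch, not the original article's configuration: the service names (`agent`, `code-sandbox`, `mock-api`, `store`, `orchestrator`), build paths, ports, and environment variable names are all hypothetical.

```yaml
# compose.yaml (illustrative sketch; every name, path, and port is an assumption)
services:
  agent:                      # AI agent service: runtime, prompts, memory, decision logic
    build: ./agent            # hypothetical build context
    environment:
      SANDBOX_URL: http://code-sandbox:8080   # services reach each other by service name
      API_BASE_URL: http://mock-api:8000
      STORE_URL: redis://store:6379
    depends_on:
      - code-sandbox
      - mock-api
      - store

  code-sandbox:               # tooling service: wrapper around a code execution sandbox
    build: ./tools/sandbox

  mock-api:                   # API service: local stand-in for an external API
    build: ./mocks/api

  store:                      # data service: persistence for agent state
    image: redis:7
    volumes:
      - store-data:/data

  orchestrator:               # orchestration layer: multi-step tasks, retries, error handling
    build: ./orchestrator
    depends_on:
      - agent

volumes:
  store-data:
```

With a layout like this, `docker compose up --build` would build and start the whole set on a laptop, and the same file could serve as the base for staging and production variants.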

The workflow emphasizes progressive validation. Teams can begin with a minimal local prototype that demonstrates a single task, then incrementally add tools, API integrations, and automation. Each increment should be accompanied by a testable deployment in Docker Compose, followed by a transition to more production-like configurations. This staged approach reduces risk and builds confidence that the agent’s behavior will remain consistent when scaled.
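One way to express this staged growth in Compose is with profiles, which keep optional services out of the default startup until they are explicitly enabled. The sketch below assumes hypothetical `search-tool` and `ci-trigger` services and made-up profile names; it only illustrates the mechanism.

```yaml
# compose.yaml (illustrative; service names, paths, and profile names are assumptions)
services:
  agent:                      # no profile: always started
    build: ./agent

  search-tool:                # added once the single-task prototype works
    build: ./tools/search
    profiles: ["tools"]

  ci-trigger:                 # added later, when automation is introduced
    build: ./tools/ci-trigger
    profiles: ["automation"]
```

Here `docker compose up` starts only `agent`, `docker compose --profile tools up` adds `search-tool`, and `docker compose --profile tools --profile automation up` starts everything, so each increment can be exercised on its own before the next is layered on.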

Security considerations are crucial. Even in a local prototype, protecting credentials and limiting the blast radius of failed tasks are paramount. Secrets should be injected securely, access controls should be defined for each service, and the chain of tool invocations should be auditable. The Compose setup should support safe defaults, with the ability to escalate privileges only where necessary and to scope network access tightly to prevent unintended exposure.
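The following sketch shows how some of these safeguards could look in a Compose file: a file-based secret, an internal network that isolates the tool service, and reduced container privileges. The service names, network names, and secret file path are hypothetical, and whether a given tool still runs with a read-only filesystem and no capabilities is an assumption that would need checking per image.

```yaml
# compose.yaml (illustrative; names and paths are assumptions)
services:
  agent:
    build: ./agent
    secrets:
      - llm_api_key           # mounted as a file at /run/secrets/llm_api_key
    networks:
      - tools                 # can reach the sandbox
      - egress                # can reach external APIs

  code-sandbox:
    build: ./tools/sandbox
    read_only: true           # root filesystem is read-only
    tmpfs:
      - /tmp                  # scratch space that disappears with the container
    cap_drop:
      - ALL                   # start from no Linux capabilities
    security_opt:
      - no-new-privileges:true
    networks:
      - tools                 # internal only: no route to the outside

networks:
  tools:
    internal: true            # externally isolated network
  egress: {}

secrets:
  llm_api_key:
    file: ./secrets/llm_api_key.txt   # keep this file out of version control
```

The design choice is to grant outbound access only to the service that needs it, so a misbehaving tool call inside `code-sandbox` has a limited blast radius.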

Observability is another vital pillar. Centralized logs, metrics, and traces provide visibility into the agent’s decisions, tool invocations, and outcomes. Structured logs and standardized metrics enable operators to monitor performance, identify bottlenecks, and diagnose failures quickly. Such observability is essential when moving from a developer’s laptop to a production environment with multiple concurrent agents and users.
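A possible starting point, sketched below, is to bound container log growth and ship traces and metrics to a collector that runs as just another Compose service. This assumes the agent is instrumented with an OpenTelemetry SDK that reads the standard `OTEL_*` environment variables; the collector configuration file path is hypothetical and its contents are not shown.

```yaml
# compose.yaml (illustrative; assumes an OpenTelemetry-instrumented agent)
services:
  agent:
    build: ./agent
    environment:
      OTEL_SERVICE_NAME: agent
      OTEL_EXPORTER_OTLP_ENDPOINT: http://otel-collector:4317   # OTLP over gRPC
    logging:
      driver: json-file
      options:
        max-size: "10m"       # rotate each log file at 10 MB
        max-file: "3"         # keep at most three rotated files
    depends_on:
      - otel-collector

  otel-collector:
    image: otel/opentelemetry-collector
    command: ["--config=/etc/otel/config.yaml"]
    volumes:
      # Hypothetical collector config defining receivers and exporters.
      - ./observability/otel-config.yaml:/etc/otel/config.yaml:ro
```

Because the collector is declared next to the agent, the same telemetry path exists on a laptop and in later stages; only the collector's exporters would need to change.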

Finally, the approach should anticipate the realities of production workloads. As agents scale, requirements around orchestration, concurrency, fault tolerance, and cost management change with them. Because Compose typically manages containers on a single Docker host, the workflow is a strong foundation for early-stage production setups, but teams should plan for eventual migration to more scalable deployment models (e.g., Kubernetes) as demand grows. The core benefit remains a consistent, testable, and auditable path from prototype to production that minimizes drift between environments.

*Image source: Unsplash*

Perspectives and Impact

The adoption of a Docker Compose-driven workflow for AI agents could reshape how teams approach AI-powered automation. By standardizing the development-to-production path, organizations can shorten development cycles, reduce repetitive work, and improve reliability. Engineers gain clearer visibility into how agents operate, which tools they rely on, and where failures may occur. This clarity supports faster iteration while maintaining safety and governance.

In the near term, the approach encourages teams to treat agents and their supporting services as a cohesive system rather than a set of loosely connected scripts. This mindset fosters better engineering practices, including versioned toolkits, reproducible environments, and robust testing strategies. In practice, teams might:

- Define a canonical Compose file that describes the agent, tools, and dependencies, with environment-specific overrides for development, staging, and production.

- Use lightweight containers for rapid iteration during prototyping and scale up complexity gradually as requirements mature.

- Implement health checks, readiness probes, and automated rollback mechanisms to manage failures gracefully.

- Create test suites that simulate real-world task workflows, ensuring that tool calls and API interactions behave as expected under various conditions.

- Integrate with CI/CD pipelines to automatically validate changes to the agent’s configuration, tooling, and deployment descriptors.
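Several of these practices can be sketched as an environment-specific override file layered on a canonical base. The example assumes a base `compose.yaml` that already defines `agent` and a Redis-backed `store`, that the agent image ships `curl` and exposes a hypothetical `/healthz` endpoint on port 8080, and that the resource limits shown are placeholders. Compose expresses health checks and start ordering directly; rollback is not shown and would have to come from surrounding tooling.

```yaml
# compose.prod.yaml (hypothetical override applied on top of compose.yaml)
services:
  agent:
    restart: unless-stopped
    healthcheck:
      # Assumes curl is in the image and GET /healthz returns 2xx when healthy.
      test: ["CMD", "curl", "-f", "http://localhost:8080/healthz"]
      interval: 30s
      timeout: 5s
      retries: 3
      start_period: 20s
    depends_on:
      store:
        condition: service_healthy   # wait until the store reports healthy
    deploy:
      resources:
        limits:
          cpus: "1.0"                # placeholder limits
          memory: 1G

  store:
    healthcheck:
      test: ["CMD", "redis-cli", "ping"]   # assumes the store runs a Redis image
      interval: 10s
      timeout: 3s
      retries: 5
```

A CI job could run `docker compose -f compose.yaml -f compose.prod.yaml config` to render and validate the merged configuration before anything is deployed, and `docker compose -f compose.yaml -f compose.prod.yaml up -d` would start the stack with the overrides applied.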

Looking ahead, this workflow could inform best practices for AI governance, security, and compliance in AI-enabled environments. As agents interact with external systems and cloud resources, having an auditable, reproducible deployment process becomes increasingly valuable for audits, regulatory compliance, and organizational risk management. The approach also aligns with broader trends toward “as-code” infrastructure and declarative configuration, enabling teams to codify not just infrastructure but also the behavioral expectations and constraints of AI agents.

Yet challenges remain. Complex agent workflows may demand more sophisticated orchestration than a single Compose file can comfortably manage. Scaling to multiple agents, each with distinct toolsets and data requirements, could lead to intricate dependency graphs. In such cases, teams may consider transitioning to more scalable orchestration platforms while preserving the core principles of reproducibility and visibility established in the initial Compose-based workflow.

Key Takeaways

Main Points:

- AI agents now perform autonomous tasks, tool calls, API queries, workflow orchestration, and cloud interactions.

- A Docker Compose-based workflow provides environment parity, repeatability, and an organized path from local prototype to production.

- Observability, security, and incremental validation are essential to achieving production readiness.

Areas of Concern:

- Scaling beyond a single agent and managing complex tool dependencies.

- Ensuring robust security and access control as the system grows.

- Migrating from Compose-based workflows to more scalable deployment platforms when needed.

Recommendations:

- Start with a canonical Docker Compose setup that encapsulates the agent, tools, data sources, and orchestration logic.

- Enforce strict secret management, access controls, and auditing within the Compose environment.

- Invest in observability (logs, metrics, traces) and automated testing to validate behavior across stages.

- Plan for progressive deployment and consider future migration paths to Kubernetes or other scalable platforms.

Summary and Recommendations

A cohesive Docker Compose-driven workflow offers a practical, disciplined path to transform AI agents from local prototypes into production systems. By modeling the agent, its tools, and its environment as interconnected services, teams can achieve consistent environments, clearer boundaries, and improved reliability across development, testing, and deployment. The approach emphasizes incremental validation, strong observability, and robust security, the foundational elements that mitigate risk as agents gain capabilities and interact with a growing array of external systems.

While the Compose-based workflow is an excellent starting point for early-stage production, teams should anticipate evolving requirements as organizations scale. Complexity can grow with multiple agents, more tools, and higher security demands. At that point, transitioning to more scalable orchestration platforms while preserving the core principles—reproducibility, visibility, and secure operations—will be essential. The overarching message is clear: adopting a unified, repeatable workflow from the outset accelerates development, reduces drift between environments, and lays the groundwork for dependable AI-enabled automation in production.

References

- Original: https://dev.to/jasdeepsinghbhalla/docker-compose-for-ai-agents-from-local-prototype-to-production-in-one-workflow-3a4m

- Additional references:

- Docker Compose official documentation: https://docs.docker.com/compose/

- Best practices for AI governance and security in production: https://ai.google/practices/security

- Observability in containerized environments: https://www.redhat.com/en/topics/containers/monitoring-docker-containers

- From monolithic scripts to orchestrated AI workflows: https://aws.amazon.com/blogs/architecture/building-and-managing-ai-workflows-with-orchestration/
