## TLDR

• Core Points: Octorus achieves high-speed rendering of massive diffs through thoughtful data structures, incremental updates, and careful I/O optimization, balancing perceived and actual performance.

• Main Content: The article examines what “fast” means when rendering diffs in a TUI, identifies bottlenecks that zero-copy and caching alone do not remove, and details the architectural choices that keep rendering smooth for hundreds of thousands of lines.

• Key Insights: Performance has several facets, since initial display, syntax highlighting, scroll smoothness, and API call efficiency all matter; large payloads can still create bottlenecks despite low-level optimizations.

• Considerations: Ensure scalable parsing, minimize re-processing, and manage memory usage; consider user experience factors like frame rate and latency.

• Recommended Actions: Adopt incremental rendering, optimize data flow, benchmark across workloads, and design for graceful degradation under extreme diffs.

## Content Overview

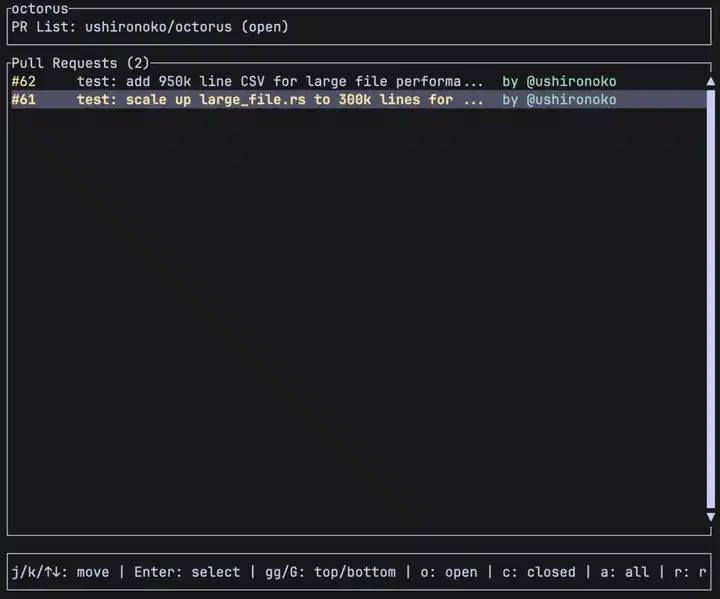

The article discusses the performance optimizations behind Octorus, a text-based user interface (TUI) tool designed to render large diffs efficiently. It emphasizes that “fast” is not a single metric: it spans several dimensions of rendering performance, including the time to display the initial view, the speed of syntax highlighting, and the smoothness of scrolling. Although optimizations like zero-copy data transfers and caching reduce some overhead, the real bottlenecks often lie elsewhere, particularly when dealing with massive patches. The author reflects on the complexity of rendering large diffs and outlines the architectural strategies used to stay responsive even with hundreds of thousands of lines.

The discussion acknowledges that perceived speed (how quickly the UI feels responsive to the user) can diverge from measured internal speed. A design that eliminates data copying can still feel slow if the API surface is heavy or if the system loads an enormous diff entirely into memory before rendering anything. The article then turns to the concrete methods used to achieve high-speed rendering, including incremental updates, careful memory management, and efficient rendering pipelines. By examining both the work that happens before display (parsing, structuring, and deciding what to render) and the work that happens during rendering (drawing, highlighting, and scrolling), the piece presents a holistic view of performance for large-scale diff visualization in a TUI.

## In-Depth Analysis

Octorus is built to render diffs, and its performance narrative begins with a clear definition of “fast.” In user interfaces, speed is multi-dimensional. There is the initial display speed—the time it takes for the UI to present the first meaningful view after a user action or start-up. Then there is the ongoing speed of operations like syntax highlighting, which must be applied to potentially massive blocks of text without introducing noticeable delays. Scroll smoothness, often quantified by frames per second (fps), is another critical dimension; even if an operation completes quickly, a choppy scroll can make the experience feel slower than it is.
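
As a general rule of thumb (not a figure taken from the original article), a target frame rate fixes the time budget that drawing, highlighting, and input handling must share on every frame:

$$
t_{\text{frame}} = \frac{1000\ \text{ms}}{f}, \qquad f = 60\ \text{fps} \;\Rightarrow\; t_{\text{frame}} = \frac{1000}{60}\ \text{ms} \approx 16.7\ \text{ms}
$$

Work that overruns this budget while scrolling is felt as a delayed or dropped frame, which is why per-frame work needs to stay bounded no matter how large the diff is.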

A central premise of the article is that, despite the lure of zero-copy techniques and aggressive caching, large diffs introduce bottlenecks that such optimizations cannot fully eliminate. If the patch is enormous, the act of loading, parsing, and preparing the data for rendering can dominate the time-to-display. Therefore, while low-level optimizations are valuable, they must be complemented by higher-level design choices that minimize unnecessary work and distribute costs more evenly over time.

Incremental rendering emerges as a core strategy. Rather than parsing and rendering the entire diff in one go, Octorus breaks the workload into smaller, manageable chunks. The UI can then present partial results quickly, giving the user something to read while the rest of the data is still being prepared. Incremental rendering pairs well with a responsive architecture: as soon as a portion of the diff is ready, it is drawn, and processing continues in the background. The result is better perceived speed, because the user sees tangible progress and the time to first useful content shrinks.
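
A minimal sketch of chunked parsing in Python, assuming the diff arrives as an iterable of unified-diff lines. `DiffLine`, `classify`, `iter_chunks`, and `render_incrementally` are hypothetical names for illustration, not Octorus's API, and the prefix-based classification is deliberately simplified.

```python
from dataclasses import dataclass
from itertools import islice
from typing import Callable, Iterable, Iterator, List


@dataclass(frozen=True)
class DiffLine:
    kind: str  # "file", "hunk", "add", "del", or "ctx"
    text: str


def classify(raw: str) -> DiffLine:
    """Classify one unified-diff line by its prefix.

    Simplified: a real parser tracks hunk state, because a removed line
    whose content starts with "-- " also begins with "--- ".
    """
    if raw.startswith(("diff --git", "--- ", "+++ ")):
        kind = "file"
    elif raw.startswith("@@"):
        kind = "hunk"
    elif raw.startswith("+"):
        kind = "add"
    elif raw.startswith("-"):
        kind = "del"
    else:
        kind = "ctx"
    return DiffLine(kind, raw)


def iter_chunks(raw_lines: Iterable[str], chunk_size: int) -> Iterator[List[DiffLine]]:
    """Parse lazily and yield fixed-size batches: the first batch is ready
    after `chunk_size` lines instead of after the whole diff."""
    it = iter(raw_lines)
    while True:
        batch = [classify(line) for line in islice(it, chunk_size)]
        if not batch:
            return
        yield batch


def render_incrementally(
    raw_lines: Iterable[str],
    chunk_size: int,
    draw: Callable[[List[DiffLine]], None],
) -> int:
    """Hand each parsed batch to the UI as soon as it exists."""
    total = 0
    for batch in iter_chunks(raw_lines, chunk_size):
        draw(batch)  # `draw` is a hypothetical UI callback
        total += len(batch)
    return total
```

Because `iter_chunks` is a generator, it works even on an unbounded stream: the caller can draw the first batch long before the input is exhausted.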

Another essential aspect is the careful handling of syntax highlighting. Highlighting can be computationally intensive, especially when applied to large blocks of text. Strategies to optimize this step include:

– Limiting the scope of highlighting to currently visible lines, or predicting the range that will soon enter view.

– Caching syntax states for portions of the diff that are likely to be re-displayed or revisited.

– Deferring non-critical highlighting tasks while the critical rendering path is active, then enriching the view as resources permit.
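
The three strategies above can be combined in one small class. This is a minimal Python sketch, not Octorus's code: `ViewportHighlighter`, `Span`, and the `highlight` callback are hypothetical, and the sketch assumes each line can be highlighted independently. Real highlighters often carry state across lines (multi-line strings and comments), which is why caching syntax state at line boundaries matters in practice; here only per-line results are cached, in an LRU map.

```python
from collections import OrderedDict
from typing import Callable, List, Sequence, Tuple

Span = Tuple[int, int, str]  # (start column, end column, style name)


class ViewportHighlighter:
    """Highlight on demand, visible rows first, with an LRU result cache."""

    def __init__(
        self,
        lines: Sequence[str],
        highlight: Callable[[str], List[Span]],
        capacity: int = 1024,
    ) -> None:
        self.lines = lines
        self.highlight = highlight  # expensive per-line highlighter (assumed stateless)
        self.capacity = capacity
        self.cache: "OrderedDict[int, List[Span]]" = OrderedDict()
        self.calls = 0  # number of real highlight() invocations

    def _get(self, i: int) -> List[Span]:
        if i in self.cache:
            self.cache.move_to_end(i)  # mark as most recently used
            return self.cache[i]
        spans = self.highlight(self.lines[i])
        self.calls += 1
        self.cache[i] = spans
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used line
        return spans

    def visible(self, top: int, height: int) -> List[List[Span]]:
        """Critical path: highlight only the rows currently on screen."""
        end = min(top + height, len(self.lines))
        return [self._get(i) for i in range(max(0, top), end)]

    def prefetch(self, top: int, height: int, margin: int, budget: int) -> int:
        """Deferred path: warm the cache for rows just outside the viewport,
        doing at most `budget` new highlights per call (e.g. per idle frame)."""
        done = 0
        lo = max(0, top - margin)
        hi = min(len(self.lines), top + height + margin)
        for i in range(lo, hi):
            if done >= budget:
                break
            if i not in self.cache:
                self._get(i)
                done += 1
        return done
```

`visible()` is meant to run on every frame, while `prefetch()` would be called only when a frame finishes under budget, so work outside the viewport never delays the rows the user is looking at.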

Memory management is also a key factor. Large diffs can consume significant memory, not only for the text lines but also for metadata, syntax state, and UI decorations. Efficient data structures balance memory usage against fast access. Techniques such as compact representations, arena allocators, and selective caching reduce memory pressure while still allowing rapid rendering when lines are needed.
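
The article does not spell out what its compact representation looks like. One common layout, sketched here in Python with hypothetical names (`CompactLines`, `KINDS`), keeps all line text in a single contiguous buffer with parallel arrays for offsets and line kinds, instead of one heap object per line. Python has no direct equivalent of an arena allocator, so this only illustrates the shared goal of few, large allocations.

```python
from array import array

# Hypothetical one-byte tags for line kinds.
KINDS = {"ctx": 0, "add": 1, "del": 2, "hunk": 3, "file": 4}


class CompactLines:
    """Append-only store: all line text lives in one contiguous buffer.

    Each line costs an offset (array "I", typically 4 bytes) and a one-byte
    kind tag, instead of a separate Python object with its own header.
    With "I" offsets the buffer is limited to 4 GiB on common platforms;
    switch to "Q" beyond that.
    """

    def __init__(self) -> None:
        self.buf = bytearray()          # UTF-8 text of every line, back to back
        self.offsets = array("I", [0])  # line i spans offsets[i]:offsets[i + 1]
        self.kinds = bytearray()        # one KINDS value per line

    def append(self, kind: str, text: str) -> None:
        self.buf += text.encode("utf-8")
        self.offsets.append(len(self.buf))
        self.kinds.append(KINDS[kind])

    def __len__(self) -> int:
        return len(self.kinds)

    def text(self, i: int) -> str:
        # Decode lazily, only when a line is actually drawn.
        return self.buf[self.offsets[i]:self.offsets[i + 1]].decode("utf-8")

    def kind(self, i: int) -> int:
        return self.kinds[i]
```

Because rendering decodes only the rows on screen, memory use stays close to the raw size of the diff text plus a few bytes per line.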

The article also highlights the importance of a clean data flow between components. If the pipeline from diff retrieval to display is cluttered with heavy transformations, the system becomes susceptible to latency spikes. A well-designed architecture minimizes the number of times data is copied, transformed, or moved between layers. This often involves:

– Streaming lines as they are parsed, rather than buffering the entire diff before passing anything downstream.

– Employing a minimal, well-defined data model that supports incremental updates.

– Reducing cross-thread contention by clearly separating producer and consumer responsibilities and using lock-free or low-lock data structures where appropriate.

Additionally, the author discusses the role of I/O efficiency. In practice, reading and decoding enormous diffs can become a bottleneck. Efficient I/O strategies, such as asynchronous reads, memory-mapped files, or prefetching in anticipation of user actions, can help ensure that the UI remains responsive even when disk latency would otherwise impede progress. When combined with incremental rendering, these I/O optimizations enable the system to start showing results quickly while continuing to fetch more data in the background.
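
Of the I/O strategies listed, memory mapping is straightforward to sketch with Python's standard `mmap` module. The `MappedDiff` class is hypothetical and assumes the diff is available as a local UTF-8 file; it is an illustration of the technique, not how Octorus obtains its data.

```python
import mmap
import os
import tempfile
from array import array


class MappedDiff:
    """Read-only, line-addressable view of a diff file on disk.

    The file is memory-mapped, so the OS pages bytes in as they are touched
    instead of the program reading everything up front. One scan builds a
    line-start index; individual lines are then sliced and decoded on demand.
    Note: empty files cannot be memory-mapped and are not handled here.
    """

    def __init__(self, path: str) -> None:
        self._file = open(path, "rb")
        self._mm = mmap.mmap(self._file.fileno(), 0, access=mmap.ACCESS_READ)
        self._starts = array("Q", [0])  # byte offset where each line begins
        pos = self._mm.find(b"\n")
        while pos != -1:
            self._starts.append(pos + 1)
            pos = self._mm.find(b"\n", pos + 1)
        # A trailing newline does not start another (empty) line.
        trailing = self._starts[-1] == len(self._mm)
        self._count = len(self._starts) - (1 if trailing else 0)

    def __len__(self) -> int:
        return self._count

    def line(self, i: int) -> str:
        if not 0 <= i < self._count:
            raise IndexError(i)
        start = self._starts[i]
        if i + 1 < len(self._starts):
            end = self._starts[i + 1] - 1  # stop before the "\n"
        else:
            end = len(self._mm)  # last line without a trailing newline
        return self._mm[start:end].decode("utf-8", errors="replace")

    def close(self) -> None:
        self._mm.close()
        self._file.close()

    def __enter__(self) -> "MappedDiff":
        return self

    def __exit__(self, *exc: object) -> None:
        self.close()


if __name__ == "__main__":
    fd, path = tempfile.mkstemp(suffix=".diff")
    with os.fdopen(fd, "wb") as f:
        f.write(b"diff --git a/x b/x\n@@ -1 +1 @@\n-old\n+new\n")
    with MappedDiff(path) as diff:
        print(len(diff), diff.line(3))  # prints: 4 +new
    os.remove(path)
```

Building the index still touches every page once; for very large files that scan could itself run in the background, in the producer/consumer style described above. On systems with `madvise()`, Python 3.8+ also exposes `mmap.madvise` with `mmap.MADV_WILLNEED` as a read-ahead hint, which is one way to prefetch; it is not used here.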

*Image source: Unsplash*

Beyond the immediate rendering pipeline, the article considers the broader context of performance measurement and benchmarking. It’s not enough to claim speed; one must quantify it under realistic workloads. Benchmark scenarios should reflect typical diffs encountered by users, including variations in line length, syntax, and the density of changes. By instrumenting critical paths and collecting metrics such as time-to-first-render, average frame time during scrolling, and time spent on syntax highlighting, developers can identify bottlenecks and validate improvements.
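
A small timing harness for these metrics might look like the following sketch. It assumes the UI exposes two hypothetical hooks, `first_render` (show the initial view) and `scroll_step` (scroll one step and redraw), and a 60 fps frame budget. It reports tail latency alongside the mean, because a smooth average can hide the occasional long frame that users perceive as a stutter. The timings cover only the Python-side work, not the terminal's own output latency.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def time_ms(fn: Callable[[], object]) -> float:
    """Wall-clock duration of a single call, in milliseconds."""
    start = time.perf_counter()
    fn()
    return (time.perf_counter() - start) * 1000.0


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile, 0 < p <= 100, of a non-empty sample list."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def benchmark(
    first_render: Callable[[], object],
    scroll_step: Callable[[], object],
    frames: int = 120,
    budget_ms: float = 1000 / 60,
) -> Dict[str, float]:
    """Measure time-to-first-render once, then per-frame cost while scrolling."""
    ttfr = time_ms(first_render)
    frame_times = [time_ms(scroll_step) for _ in range(frames)]
    return {
        "time_to_first_render_ms": ttfr,
        "mean_frame_ms": statistics.fmean(frame_times),
        "p95_frame_ms": percentile(frame_times, 95),
        "frames_over_budget": sum(t > budget_ms for t in frame_times),
    }
```

Running the same harness over several recorded diffs that differ in line length, language, and change density gives the kind of representative comparison the paragraph calls for.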

In sum, the high-speed rendering of 300k-line diffs is achieved through a combination of incremental rendering, efficient highlighting, careful memory management, streamlined data flows, and prudent I/O strategies. The approach is intentionally holistic: optimizations in one area are most effective when coordinated with the broader rendering pipeline and user-experience goals. The result is a tool that remains responsive and smooth even when confronted with diffs of considerable size.

## Perspectives and Impact

The work on Octorus provides several valuable takeaways for developers tackling similar challenges:

– Complexity scales with data size, but user experience does not have to scale linearly with it. By decomposing work into incremental units and prioritizing the user-visible path, systems can preserve perceived speed even under heavy load.

– The line between internal efficiency and user-perceived performance is nuanced. Techniques like zero-copy and caching are necessary, but they must be complemented by strategies that reduce overall workload and prevent expensive operations from blocking the render loop.

– A modular, streaming-oriented architecture is well-suited for diff rendering. When components can operate asynchronously and independently, the system can keep the UI responsive while processing large inputs in the background.

– Realistic benchmarking matters. Measuring performance with representative workloads provides actionable data that guides optimization priorities and validates that improvements translate into a faster, smoother experience for users.

Looking forward, several implications and opportunities emerge. First, as diffs continue to grow in scale and complexity, further improvements may come from more aggressive pipeline parallelism and better utilization of modern CPU features, including vectorized operations for syntax highlighting and parallel parsing where dependencies permit. Second, user expectations for instantaneous feedback will likely push developers toward more aggressive prefetching and predictive rendering, enabling the UI to be ready for the next user action even before it occurs. Finally, the broader ecosystem around TUIs could benefit from standardizing best practices for rendering large text diff data, creating reusable patterns and components that can be adopted across projects.
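
The article names parallel parsing “where dependencies permit” only as a future direction. In a multi-file unified diff, one natural independence boundary is the file, so a sketch under that assumption splits at each `diff --git` header and parses sections concurrently. All names are hypothetical and `count_changes` stands in for real parsing. In CPython the GIL means a `ThreadPoolExecutor` mostly demonstrates the structure for CPU-bound work; real speedups would need a `ProcessPoolExecutor` passed in via `executor`, or a runtime without that limitation.

```python
from concurrent.futures import Executor, ThreadPoolExecutor
from typing import Dict, List, Optional


def split_by_file(raw_lines: List[str]) -> List[List[str]]:
    """Split a multi-file unified diff at each `diff --git` header.
    Files do not depend on each other, so each section can be parsed alone."""
    sections: List[List[str]] = []
    for line in raw_lines:
        if line.startswith("diff --git") or not sections:
            sections.append([])
        sections[-1].append(line)
    return sections


def count_changes(section: List[str]) -> Dict[str, int]:
    """Stand-in for per-file parsing: count added/removed lines inside hunks.
    Tracking `in_hunk` keeps the `---`/`+++` file headers out of the counts."""
    added = removed = 0
    in_hunk = False
    for line in section:
        if line.startswith("@@"):
            in_hunk = True
        elif in_hunk and line.startswith("+"):
            added += 1
        elif in_hunk and line.startswith("-"):
            removed += 1
    return {"added": added, "removed": removed}


def parse_parallel(
    raw_lines: List[str], executor: Optional[Executor] = None
) -> List[Dict[str, int]]:
    """Parse every file section concurrently; results keep the input order."""
    sections = split_by_file(raw_lines)
    if executor is not None:
        return list(executor.map(count_changes, sections))
    with ThreadPoolExecutor() as pool:
        return list(pool.map(count_changes, sections))
```

`Executor.map` returns results in input order, so the per-file results can be stitched back into display order without extra bookkeeping.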

The experience with Octorus underscores a broader design principle: performance is most effectively achieved when system architecture, rendering strategy, and user experience goals are aligned. Optimizations should be targeted not only at raw throughput but at reducing perceived latency, maintaining smooth interaction, and providing a reliable, predictable experience across a wide range of inputs.

## Key Takeaways

Main Points:

– Fast rendering of very large diffs requires a holistic approach combining incremental rendering, efficient highlighting, and mindful I/O.

– Perceived performance often diverges from raw computational speed; optimization must consider user experience metrics like first meaningful render and scroll smoothness.

– A streaming, modular architecture that minimizes data copies and enables background processing is well-suited for large-scale diff visualization.

Areas of Concern:

– Handling extreme diffs may still stress memory and I/O subsystems, and latency spikes remain a risk if these are not carefully managed.

– Over-optimizing low-level paths without aligning with UI priorities can yield diminishing returns.

– Benchmarking must reflect real-world usage to avoid optimizing for artificial workloads.

## Summary and Recommendations

Rendering 300k-line diffs at high speed is not solely about micro-optimizations; it requires a balanced design that coordinates data handling, rendering, and user interaction. Incremental rendering stands out as a practical technique to deliver rapid feedback, while selective highlighting and compact data representations help keep the system responsive under heavy workloads. A streamlined data flow minimizes redundant work, and robust I/O strategies ensure that disk latency does not undermine the rendering process. The overarching lesson is that performance is a product of carefully orchestrated components working in concert rather than of isolated optimizations.

For developers aiming to build or improve similar tools, the following recommendations are prudent:

– Embrace incremental, streaming rendering to provide early feedback and maintain responsiveness.

– Apply syntax highlighting selectively, cache results where feasible, and avoid processing lines far from the viewport.

– Optimize memory usage with compact data representations and efficient allocators.

– Design clear, low-friction data pipelines with minimal copying and well-defined boundaries between producers and consumers.

– Invest in realistic benchmarking that mirrors typical user workloads, and use the results to guide optimization priorities.

By following these principles, projects can achieve fast, smooth experiences even when confronted with diffs that stretch into hundreds of thousands of lines.

## References

- Original: https://dev.to/ushironoko/how-octorus-renders-300k-lines-of-diff-at-high-speed-h4p

- Additional readings:
  - Performance optimization patterns for streaming UI rendering
  - Strategies for incremental rendering in text-based interfaces
  - Benchmarking approaches for large-scale text processing systems
