TLDR

• Core Points: Nvidia aims to extend its silicon stack with Vera, a CPU designed to compete with Intel Xeon and AMD Epyc in data centers, signaling a shift toward becoming a full-stack silicon provider for AI and HPC.

• Main Content: Vera represents Nvidia’s strategic broadening beyond GPUs, targeting server workloads with a purpose-built architecture to optimize AI and HPC performance at scale.

• Key Insights: Nvidia’s move could reshape data-center CPU choices, potentially reducing reliance on traditional CPU ecosystems if Vera proves competitive on performance, efficiency, and ecosystem support.

• Considerations: Adoption hinges on Vera’s performance, software compatibility, production readiness, supply reliability, and the ability to deliver a robust developer and ecosystem experience.

• Recommended Actions: Stakeholders should monitor Vera’s benchmarks, software porting efforts, and early partner engagement; plan risk-adjusted evaluations for mixed CPU configurations in AI-first workloads.

Content Overview

Nvidia has signaled a bold expansion of its silicon strategy with the development of Vera, its own central processing unit intended for data-center workloads. The move is presented as a natural evolution of Nvidia’s long-term mission to be a one-stop silicon provider for AI and high-performance computing. Historically, Nvidia’s GPUs have driven accelerated workloads across AI training, inference, and scientific computing, while the broader data-center ecosystem has relied on CPUs from incumbents like Intel and AMD. Nvidia’s Vera seeks to shift this dynamic by delivering a cohesive architecture that can stand alongside, and potentially compete with, established CPUs such as Intel Xeon and AMD Epyc.

The decision to pursue Vera reflects more than a single product launch; it embodies a strategic reorientation toward owning more of the stack used to power AI-first data centers. By designing a CPU in-house, Nvidia aims to optimize the entire system around its software and hardware capabilities, reducing dependency on third-party CPU roadmaps and enabling tighter integration between CPUs and GPUs, along with its future acceleration technologies. The broader aim is to unlock efficiencies in AI workloads, large-scale training, and inference pipelines that can benefit from a processor designed with Nvidia’s software stack and silicon philosophy in mind.

This shift arrives amid a competitive and rapidly evolving data-center landscape. Intel and AMD have built sizable CPU ecosystems and cloud footprints, while Nvidia has cultivated CUDA, robust GPU software libraries, and a strong position in AI model development and deployment. Vera’s introduction could accelerate a broader industry conversation about specialized CPUs tailored to AI and HPC workloads, potentially reshaping cost models, performance-per-watt considerations, and deployment topologies in modern data centers. The conversation also touches on ecosystem dynamics—how software compatibility, compiler support, optimization libraries, and developer tooling will be harmonized with Nvidia’s Vera to deliver the anticipated advantages.

The narrative surrounding Vera emphasizes Nvidia’s intention to keep pushing the envelope on silicon design, software integration, and hardware-software co-design. As with any new CPU push, success will depend on several factors beyond raw performance: reliability, production capacity, supply chains, and the pace of software maturation. Nvidia will need to demonstrate compelling benchmarks across representative workloads, effective porting and optimization strategies for existing AI and HPC pipelines, and a clear path for developers to adopt Vera alongside the company’s CUDA ecosystem and GPU acceleration capabilities. Industry observers will be watching not only for performance numbers but for Vera’s ability to integrate with mainstream data-center operations, including orchestration, virtualization, cloud-native deployment, and enterprise-grade management tools.

In-Depth Analysis

Nvidia’s Vera represents a significant strategic pivot. For years, Nvidia’s core business model has revolved around GPUs and software tooling that enable developers to leverage GPU acceleration for a wide range of workloads. While Nvidia has leveraged partnerships with CPU makers and relied on CPU ecosystems to complement GPU-based pipelines, Vera signals a shift toward end-to-end ownership of the primary compute fabric in data centers.

Architectural ambitions. Vera is being positioned as a modern, enterprise-class CPU designed to handle diverse workloads common in AI and HPC environments. By building its own processor, Nvidia can tailor the instruction set, memory hierarchy, interconnects, and power/performance characteristics to maximize the efficiency of its software stack, including CUDA, cuDNN, and other libraries that power AI workloads. The objective is to reduce friction between CPU and GPU components, enabling tighter coupling and more predictable performance for end users deploying large-scale AI models or complex simulations.

Ecosystem and software alignment. A central theme of Nvidia’s strategy is to align silicon choices with software and ecosystem development. If Vera is to succeed, Nvidia must ensure that compilers, programming models, libraries, and tooling work seamlessly on its CPU, with a clear migration or optimization path for developers. The CUDA ecosystem, coupled with libraries tailored for matrix operations, graph workloads, and HPC kernels, could potentially be extended or adapted to Vera’s architecture. Compatibility with existing workloads—notably Python-based AI model development frameworks, GPU-accelerated libraries, and standard high-performance computing toolchains—will be critical. Nvidia’s ability to deliver performance gains while maintaining or improving software portability will influence early adoption.

Performance considerations. In theory, a purpose-built CPU from Nvidia could outperform incumbents in AI-centric tasks due to better CPU-GPU data paths, optimized memory bandwidth, and a harmonious software layer designed to minimize host-accelerator data transfer overhead. However, Vera will need to demonstrate competitive performance in traditional CPU-relevant workloads such as database processing, enterprise workloads, and virtualization, not just AI workloads. It must provide robust multi-threading, excellent single-thread performance, and strong energy efficiency to appeal to the broader data-center market, where total cost of ownership is a critical decision factor.

Production and supply chain readiness. Nvidia’s move to a data-center CPU also introduces questions about manufacturing capacity, wafer supply, and production timelines. The ability to scale Vera to meet hyperscale demand, while maintaining yield and reliability standards, will be essential. Nvidia will likely rely on foundries and existing semiconductor partners, but the integration of a new CPU line with Nvidia’s broader silicon portfolio presents manufacturing and logistics challenges that must be managed carefully to avoid bottlenecks.

Competitive dynamics. If Vera can deliver compelling performance-per-watt and performance-per-dollar metrics, it could alter the competitive dynamics in the data-center CPU market. Enterprises may consider Vera as a viable alternative to Xeon and Epyc, especially in AI-first contexts where Nvidia already has a strong foothold due to its GPUs and software stack. Yet incumbents have mature ecosystems, extensive customer support networks, and broad software compatibility across enterprise workloads. Vera’s success will hinge on how convincingly Nvidia can balance raw performance with ecosystem maturity and enterprise reliability.
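The two metrics named above are simple ratios, and a minimal sketch shows how an evaluation team might compare options once it has its own benchmark results. Everything in this example is an assumption for illustration: the `CpuCandidate` type, the candidate names, and every number are hypothetical placeholders, not measurements of Vera, Xeon, or Epyc.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class CpuCandidate:
    """Hypothetical inputs for one CPU option; none of these are real measurements."""
    name: str
    throughput: float       # benchmark score, e.g. jobs per hour (placeholder)
    avg_power_watts: float  # average power draw under that benchmark (placeholder)
    unit_price_usd: float   # purchase price per CPU (placeholder)


def perf_per_watt(c: CpuCandidate) -> float:
    """Throughput delivered per watt of average power."""
    return c.throughput / c.avg_power_watts


def perf_per_dollar(c: CpuCandidate) -> float:
    """Throughput delivered per dollar of purchase price."""
    return c.throughput / c.unit_price_usd


def rank(candidates: List[CpuCandidate],
         metric: Callable[[CpuCandidate], float]) -> List[CpuCandidate]:
    """Return candidates sorted from best to worst on the given metric."""
    return sorted(candidates, key=metric, reverse=True)


# Placeholder values only; substitute results from your own workloads.
candidates = [
    CpuCandidate("candidate_a", throughput=1200.0, avg_power_watts=300.0, unit_price_usd=8000.0),
    CpuCandidate("candidate_b", throughput=1000.0, avg_power_watts=200.0, unit_price_usd=5000.0),
]

for c in rank(candidates, perf_per_watt):
    print(f"{c.name}: {perf_per_watt(c):.2f} per W, {perf_per_dollar(c):.3f} per USD")
```

With these placeholder inputs, the candidate with the lower raw throughput ranks first on both ratios, which is the kind of result that makes raw benchmark scores alone a poor basis for procurement. A fuller total-cost-of-ownership comparison would also need energy prices, cooling, licensing, and support costs, which this sketch leaves out.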

Security and resilience. In the data center, security and reliability are paramount. Vera will need robust hardware security features, secure boot, firmware integrity, and isolation capabilities to protect sensitive workloads. Given the increasing emphasis on supply-chain security and platform resilience, Nvidia’s approach to secure silicon design and ongoing firmware/software updates will be closely scrutinized by enterprises with stringent compliance requirements.

Strategic implications for Nvidia’s business model. Vera is part of a broader ambition to offer a complete silicon and software stack for AI and HPC. If Nvidia can demonstrate that its CPU, in concert with its GPUs, data-path accelerators, and software tooling, delivers superior end-to-end performance and easier deployment, it could accelerate a shift toward a more vertically integrated compute model. This, in turn, could affect partnerships with cloud providers and enterprise customers, who may recalibrate procurement strategies to maximize standardized efficiency and performance gains across GPUs and CPUs.

Future trajectories. The Vera initiative could be a precursor to more advanced silicon platforms that unify CPUs, GPUs, and accelerators under a single architectural philosophy. Nvidia might pursue further specialization for AI workloads, real-time analytics, and scientific computing, while continuing to cultivate its software ecosystem to ensure broad developer adoption. The pace of innovation, the breadth of workload coverage, and the ability to maintain competitive cost structures will determine Vera’s long-term viability and market impact.

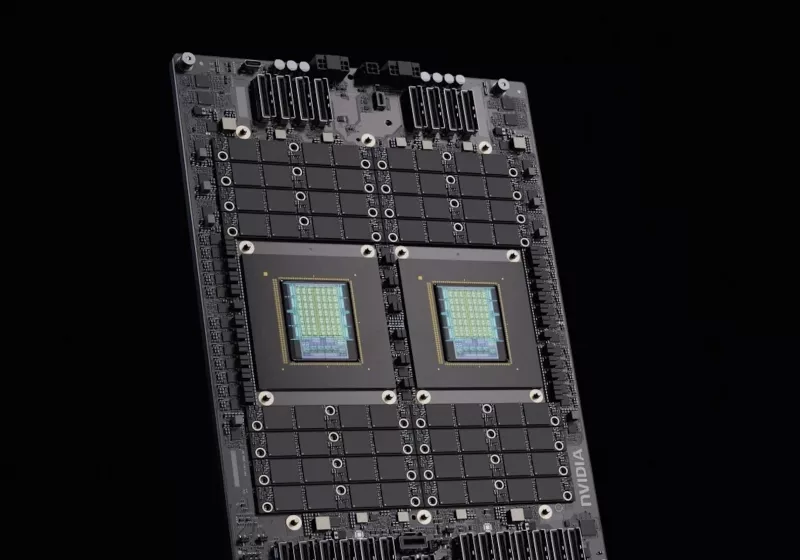

*Image source: Unsplash*

Perspectives and Impact

Industry observers will weigh Vera against the looming questions of performance, compatibility, and ecosystem maturity. If Vera achieves significant performance improvements for AI-centric workloads, and if Nvidia can provide a straightforward path for developers to port or optimize existing code, Vera could become a compelling option for data centers seeking to consolidate their compute foundations around a single vendor.

Adoption scenarios vary. In cloud environments where scale and efficiency are paramount, Vera could appeal to hyperscalers looking to optimize for AI inference and training, particularly when integrated with Nvidia’s GPUs and software tools. For traditional enterprise workloads, Vera’s appeal will depend on how well it competes with Xeon and Epyc across a broad set of use cases, including virtualization, database workloads, and mixed-guest environments. A successful introduction would likely prompt a re-evaluation of CPU procurement strategies, with potential cost and performance implications for data-center budgets and total cost of ownership calculations.

Another dimension is the developer experience. Nvidia’s existing developer ecosystem—CUDA, cuDNN, and related libraries—has a persistent advantage in AI-oriented communities. If Vera aligns with or extends this ecosystem, developers may find it straightforward to port workloads or optimize performance. However, broad enterprise adoption also depends on mature compiler support (such as LLVM or GCC toolchains), stable firmware interfaces, and compatibility with popular operating systems and hypervisors. Developer tooling, debugging capabilities, and performance analytics will be vital for a smooth transition.

Security, resilience, and supply chain trust will shape Vera’s reception among enterprises with strict governance requirements. Any concerns about hardware root of trust, firmware updates, or potential supply disruptions could slow adoption. Nvidia will need to demonstrate a credible, end-to-end reliability and security story that complements its silicon strategy.

From a market perspective, Vera could catalyze more competition in the CPU space. While incumbents have established footprints and robust ecosystems, a successful Nvidia CPU could push competitors to accelerate architectural innovations, optimize for AI-centric workloads, and reimagine data-center efficiency models. The broader industry could see increased collaboration among hardware and software players as new optimizations emerge for workloads combining CPU and GPU resources.

Looking ahead, Vera’s path to market will determine whether Nvidia can translate its silicon ambition into a durable, multi-year competitive advantage. The company will need to deliver on several fronts: a compelling performance profile across representative workloads, a clear migration and optimization strategy for developers, reliable manufacturing and supply, and a security-and-resilience framework that meets enterprise standards. If these elements align, Vera could become a meaningful challenger to Xeon and Epyc in the data center, delivering Nvidia’s AI-first vision with a broader compute backbone.

Key Takeaways

Main Points:

– Nvidia is developing Vera, a data-center CPU designed to challenge Intel Xeon and AMD Epyc.

– Vera reflects Nvidia’s strategy to own more of the silicon stack for AI and HPC workloads.

– Success depends on performance, software ecosystem maturity, production readiness, and enterprise adoption factors.

Areas of Concern:

– Hardware and software ecosystem compatibility with existing enterprise workloads.

– Production deployment timelines, supply chain reliability, and manufacturing capacity.

– Long-term cost competitiveness versus established CPU platforms.

Summary and Recommendations

Nvidia’s Vera represents a foundational step in broadening the company’s silicon portfolio beyond GPUs toward a more integrated data-center compute strategy. By pursuing an in-house CPU aligned with its software and accelerator ecosystem, Nvidia aims to deliver optimized AI and HPC performance while reducing reliance on traditional CPU roadmaps. The initiative carries significant potential to reshape data-center procurement considerations, particularly if Vera can demonstrate meaningful advantages in AI workloads alongside acceptable performance across general server tasks.

For stakeholders (customers, cloud providers, developers, and partners), the key is rigorous evaluation and informed risk management. Closely watch Vera’s public benchmarks, early porting guidance, and ecosystem development progress. Engage early with Nvidia to understand tooling, support, and roadmap commitments. Consider pilot deployments that mix Vera with Nvidia GPUs to gauge real-world benefits for AI training, inference, and mixed workloads. Finally, monitor incumbents’ responses, such as optimizations and partnerships that may emerge as the CPU landscape evolves.

In short, Vera could become a pivotal piece of Nvidia’s strategy to become a comprehensive silicon and software provider for AI and HPC. Its success will hinge on performance parity or superiority across a broad workload spectrum, a robust and mature software ecosystem, reliable manufacturing and supply, and the ability to deliver enterprise-grade security and resilience. If these elements coalesce, Vera may redefine how data centers architect compute, potentially altering the balance of power among CPU and GPU producers for years to come.

References

- Original: techspot.com

- Additional references:

- Nvidia Vera CPU announcements and hardware strategy analyses (industry press and analyst reports)

- Intel Xeon data-center architecture and ecosystem papers

- AMD Epyc server CPU architecture and market position

- CUDA ecosystem and developer tooling documentation
