TLDR

• Core Points: Reports suggest Microsoft is pursuing an exclusive memory supply deal with SK Hynix for its Maia AI chip family, potentially securing high-bandwidth memory (HBM3e).

• Main Content: The collaboration would position SK Hynix as the sole supplier of HBM3e for Microsoft’s next-gen Maia chips, aligning with broader industry moves toward dedicated accelerators and custom silicon for AI workloads.

• Key Insights: Exclusive memory arrangements could streamline supply, reduce risk, and optimize performance, but may raise antitrust and supplier diversification considerations.

• Considerations: Long-term availability, price stability, and potential impact on other AI hardware projects relying on HBM memory need assessment.

• Recommended Actions: Monitor official confirmations, evaluate supply chain implications, and consider parallel sourcing strategies to balance exclusivity with risk.

Content Overview

Microsoft’s Maia project represents a strategic push into dedicated AI acceleration hardware designed to improve the performance and efficiency of large-scale AI workloads, including generative and multimodal models. Maia is conceived as a family of AI chips tailored for arrayed accelerator architectures, potentially combining custom logic with optimized memory interfaces to maximize throughput and reduce latency for matrix and tensor operations and the associated data movement. The memory subsystem is a critical component of any AI accelerator, and high-bandwidth memory (HBM) plays a central role in delivering the data bandwidth that modern transformer-based models require.

Korean industry reports indicate that SK Hynix, a global leader in DRAM and NAND manufacturing, has entered talks with Microsoft over an exclusive supply arrangement for Maia’s memory needs. If realized, SK Hynix would become the sole supplier of HBM3e memory for Maia, potentially shaping the chip’s performance characteristics, cost structure, and supply risk profile. The exact terms of the deal, including volume, pricing, and contract length, have not been disclosed publicly, but such an exclusive arrangement would be notable given the competitive landscape in memory technologies and the AI accelerator market.

To understand the implications, it’s helpful to review the context of memory in AI hardware. HBM3e is an advanced iteration of high-bandwidth memory designed to offer higher data transfer rates and improved energy efficiency compared with earlier HBM generations. For AI accelerators, memory bandwidth often represents a critical bottleneck, sometimes more impactful than raw compute throughput. An exclusive HBM supplier can enable tighter coupling between memory and compute, potentially enabling higher sustained performance for Maia workloads. It can also streamline the manufacturing and logistics process, reduce integration risk, and help Microsoft optimize the thermal and power envelopes of Maia-based systems.
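The bandwidth bottleneck described above can be made concrete with the roofline model, a standard back-of-the-envelope tool. The following is a minimal sketch: the peak compute and bandwidth figures are illustrative assumptions, not published Maia or HBM3e specifications, and the function names are hypothetical.

```python
def ridge_point(peak_tflops: float, bandwidth_tbs: float) -> float:
    """Arithmetic intensity (FLOP per byte) above which a chip is compute-bound."""
    return peak_tflops / bandwidth_tbs


def attainable_tflops(peak_tflops: float, bandwidth_tbs: float,
                      flops_per_byte: float) -> float:
    """Roofline bound: throughput is capped by compute or by memory traffic."""
    return min(peak_tflops, bandwidth_tbs * flops_per_byte)


# Illustrative assumptions only (not Maia specifications).
PEAK_TFLOPS = 400.0    # hypothetical peak compute, TFLOP/s
BANDWIDTH_TBS = 2.0    # hypothetical memory bandwidth, TB/s

if __name__ == "__main__":
    print("ridge point:", ridge_point(PEAK_TFLOPS, BANDWIDTH_TBS), "FLOP/byte")
    for intensity in (2.0, 50.0, 500.0):
        bound = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TBS, intensity)
        print(f"{intensity:6.1f} FLOP/byte -> {bound:6.1f} TFLOP/s")
```

Under these assumed numbers, a workload that performs 2 FLOP per byte fetched is capped at 4 TFLOP/s, about 1% of peak. Adding compute would not help such a workload; only more memory bandwidth would.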

This development sits against a backdrop of intensified competition in AI silicon and memory supply chains. Major cloud providers, AI startups, and hardware vendors are racing to deliver efficient, scalable AI inference and training solutions. In such an environment, exclusive partnerships with memory manufacturers can be a differentiator, offering predictable supply and tailored memory configurations aligned with a vendor’s silicon roadmap. However, exclusivity can raise questions about supply diversification, pricing leverage, and the broader health of the memory ecosystem.

The broader tech ecosystem is also watching how large accelerators balance custom silicon with off-the-shelf components. Some AI chips rely on a combination of bespoke processing units and standard DRAM or HBM stacks. The decision to lock in a single memory supplier is not unique to Maia; various other accelerators have explored or adopted exclusive arrangements to ensure tight performance integration and to secure favorable terms in a highly demanding supply market.

In summary, if Microsoft secures an exclusive HBM3e supply from SK Hynix for Maia, several effects could follow:

– Performance optimization via a tightly integrated memory subsystem, potentially enabling higher memory bandwidth at lower interconnect overhead.

– Predictable supply and pricing, reducing volatility amid global memory market fluctuations.

– A potential dependence on a single supplier, which could raise concerns about supply resilience, especially in the face of geopolitical or manufacturing disruptions.

– Competitive signaling within the AI accelerator space, pressuring rivals to secure their own exclusive or near-exclusive arrangements.

The exact roadmap for Maia remains to be seen, including whether it will be released broadly to customers as part of an SDK-enabled platform or deployed initially in controlled environments. It will also be important to monitor whether Microsoft’s Maia strategy incorporates memory-side configurations, on-package memory, or other innovations in data movement and on-die interconnects, all of which influence how a memory exclusivity agreement would affect real-world performance.

In-Depth Analysis

Microsoft’s Maia program is part of a broader shift toward purpose-built AI accelerators designed to handle the demands of next-generation AI models. As models grow in size and complexity, the need for high memory bandwidth, low latency, and energy efficiency becomes paramount. This drives a focus on co-design between memory and compute—the philosophy that the memory subsystem and processing units should be designed in concert to maximize performance per watt and per dollar.

HBM3e, the memory SK Hynix would supply, is built from stacked DRAM dies connected over a wide interface. Compared with GDDR or standard DDR memory, HBM offers substantially higher bandwidth in a compact form factor, which allows the memory to sit closer to the processing silicon and improves energy efficiency. For AI workloads, HBM3e can substantially reduce memory access bottlenecks and speed up data flow between memory and compute units. In a Maia context, aligning memory with a single vendor such as SK Hynix could allow tighter timing budgets and memory channel configurations tuned to Maia’s architectural characteristics.
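The link between a wide interface and high bandwidth is simple arithmetic: peak bandwidth per stack is the interface width multiplied by the per-pin data rate. The sketch below assumes the 1024-bit per-stack interface used by the HBM family through HBM3e. The pin rate and stack count are illustrative assumptions, not SK Hynix or Maia specifications, and the function names are hypothetical.

```python
def stack_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one memory stack in GB/s.

    bus_width_bits pins each move pin_rate_gbps gigabits per second;
    dividing by 8 converts gigabits to gigabytes.
    """
    return bus_width_bits * pin_rate_gbps / 8


def package_bandwidth_gbs(stacks: int, bus_width_bits: int,
                          pin_rate_gbps: float) -> float:
    """Aggregate peak bandwidth when several stacks sit beside one processor."""
    return stacks * stack_bandwidth_gbs(bus_width_bits, pin_rate_gbps)


HBM_BUS_WIDTH_BITS = 1024      # per-stack interface width (HBM through HBM3e)
ASSUMED_PIN_RATE_GBPS = 8.0    # illustrative assumption, not a vendor figure
ASSUMED_STACKS = 4             # illustrative assumption, not a Maia figure

if __name__ == "__main__":
    per_stack = stack_bandwidth_gbs(HBM_BUS_WIDTH_BITS, ASSUMED_PIN_RATE_GBPS)
    total = package_bandwidth_gbs(ASSUMED_STACKS, HBM_BUS_WIDTH_BITS,
                                  ASSUMED_PIN_RATE_GBPS)
    print(f"per stack: {per_stack} GB/s, package: {total} GB/s")
```

With these assumed values, one stack peaks at 1024 GB/s and four stacks at 4096 GB/s. Real sustained bandwidth is lower and depends on access patterns and controller design.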

From a supply chain perspective, memory shortages and price volatility have been ongoing concerns for AI hardware developers. Exclusive deals can mitigate some risk by locking in supply commitments and potentially pricing, but they also concentrate risk if production issues arise at the supplier level or if demand surges beyond contracted volumes. Microsoft’s decision to pursue exclusivity may be driven by a desire to ensure stable delivery for Maia’s early-stage production, reduce integration risk with a single supplier’s memory, and unlock negotiated performance or cost advantages through a deep, joint engineering relationship.

Exclusive supplier arrangements are not unprecedented in the AI hardware space. Other accelerator developers have collaborated with memory manufacturers to optimize memory configurations for their chip designs and software stacks. The benefits typically include streamlined qualification, tighter control over the memory subsystem’s timing and power characteristics, and the opportunity to tailor memory products to the accelerator’s workloads, such as transformer-based inference and mixed-precision training in Maia’s case.

However, exclusivity also introduces potential downsides. If SK Hynix faces manufacturing constraints or supply chain shocks, Maia’s memory supply could be delayed or constrained. Microsoft would need to manage these risks through contractual provisions, capacity reservations, and contingency planning. There is also the potential for regulatory scrutiny, should exclusivity at this scale raise concerns about competition or create barriers for other memory suppliers seeking access to similar high-growth customers.

Beyond the technical and supply considerations, the strategic implications for Microsoft, SK Hynix, and the broader AI ecosystem are meaningful. For Microsoft, Maia represents a platform to differentiate its cloud AI offerings, enabling customers to deploy demanding inference and training workloads with predictable performance and energy efficiency. An exclusive memory deal could be a lever to secure preferred access to the latest memory technologies and to coordinate product roadmaps across hardware and software.

For SK Hynix, the deal would be a major validation of its HBM3e technology and manufacturing capabilities, potentially opening further opportunities with other hyperscalers or AI startups. The exclusivity could underpin a long-term revenue stream and solidify SK Hynix’s position in the high-end memory market. Yet, it could also constrain the company’s ability to serve other large customers with identical memory product lines during the exclusivity period.

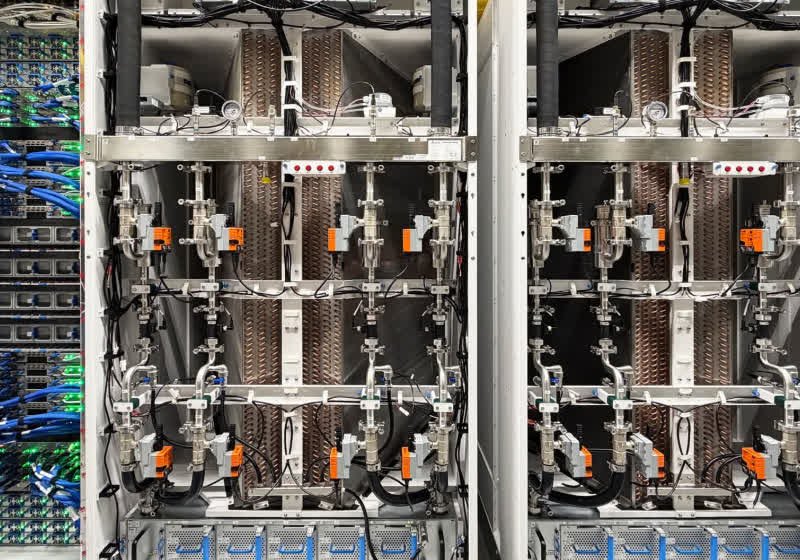

*Image source: Unsplash*

Looking ahead, the Maia project’s ultimate success will hinge on multiple factors beyond memory exclusivity. These include how well the compiler and software stacks exploit Maia’s architectural advantages, the efficiency of the memory subsystem in real-world workloads, and the ecosystem’s ability to deliver compatible accelerators, software libraries, and tooling. The pricing model for Maia, whether hardware-as-a-service, on-premises licensing, or traditional hardware sales, will influence customers’ total cost of ownership and determine how exposed they are to memory cost fluctuations.

Moreover, the broader trend in AI hardware highlights an ongoing debate about vertical integration versus an open, multi-vendor memory strategy. Some customers value strict performance predictability and simpler supply chains achievable through exclusivity, while others prefer diversification to shield against supplier risk. Microsoft’s Maia decision could influence future procurement strategies among cloud providers, research labs, and enterprise customers seeking scalable AI acceleration capabilities.

From a research and development standpoint, an exclusive HBM3e deal with SK Hynix could accelerate Maia’s architectural innovations, particularly in memory compression, data tiling, and on-die memory management techniques. The synergy between memory bandwidth and compute throughput often translates into practical gains for large-scale AI tasks, including low-latency inference for chat-based models, multimodal processing, and real-time decision-making applications. If Microsoft can demonstrate tangible improvements in latency and throughput while maintaining energy efficiency, which is critical for data center economics, the Maia platform could become a compelling option in Microsoft’s cloud AI services.

In terms of market dynamics, competitors are likely to respond with accelerated investments in their own accelerators and partnerships. Nvidia, AMD, Intel, and various startup firms are pursuing customized AI silicon and memory configurations, while memory manufacturers continue to innovate with new HBM generations and packaging technologies. An exclusive arrangement between Microsoft and SK Hynix would be a strategic signal in this high-stakes race, potentially prompting rivals to seek similar exclusivity deals or to differentiate through software ecosystems, tooling, or specialized interconnects.

The regulatory and governance implications of large exclusivity agreements should not be ignored. Regulators may scrutinize whether such arrangements limit competition or impede memory suppliers from serving other customers or entering new markets. While exclusivity can be beneficial for a customer’s performance and supply certainty, it must be balanced against broader market health and the need for competitive access to cutting-edge memory technologies.

In sum, the rumored exclusive memory agreement between Microsoft and SK Hynix for Maia’s HBM3e memory underscores the high-stakes interplay between chip design, memory technology, and supply chain management in today’s AI era. If confirmed, the deal would illustrate how memory availability and performance can be as critical as processing power in shaping the capabilities of next-generation AI accelerators. The Maia platform’s ultimate trajectory will depend on the depth of this collaboration, the software stack’s maturity, and the broader market’s response to Microsoft’s strategic choices in memory and silicon design.

Perspectives and Impact

- Industry significance: An exclusive HBM3e memory supply deal would highlight the importance of memory bandwidth in achieving AI accelerator performance targets. It would also signal an emphasis on co-design with suppliers, where memory and compute are developed in tandem to maximize efficiency.

- Customer implications: For Microsoft, exclusivity could translate into more predictable hardware supply, potential cost savings, and performance gains that can be marketed to enterprise customers seeking reliable AI cloud capabilities. However, customers might worry about reduced supplier diversity in the long term and potential price dynamics tied to exclusivity.

- Supplier dynamics: SK Hynix would gain a strong, long-term customer relationship and revenue certainty, reinforcing its leadership position in the HBM market. Competitors may respond with intensified investments in alternative memory technologies or partnerships to secure their own access to high-bandwidth memory.

- Risk considerations: Concentrating memory supply with a single vendor introduces single-source risk. Contingency plans, contractual safeguards, and capacity commitments would be essential to ensure Maia’s production remains uninterrupted in adverse scenarios.

Future implications include how such an exclusivity framework could influence other hyperscalers’ hardware strategies and whether this model becomes a template for future AI accelerator programs. The balance between performance optimization, supply reliability, and competitive fairness will shape policy discussions and procurement practices in cloud AI infrastructure for years to come.

Key Takeaways

Main Points:

– Microsoft’s Maia AI chip program may pursue an exclusive HBM3e memory deal with SK Hynix.

– Exclusive memory supply can enhance integration, performance, and supply certainty for Maia.

– Such exclusivity carries risks around supply resilience and competition but could offer strategic differentiators in AI cloud offerings.

Areas of Concern:

– Dependence on a single memory supplier could expose Maia to production disruptions.

– Regulatory inquiries could arise related to market competition and supplier access.

– Long-term pricing and terms of exclusivity may affect total cost of ownership for customers.

Summary and Recommendations

If Microsoft indeed secures exclusive access to SK Hynix’s HBM3e memory for Maia, the arrangement would reflect a broader industry trend toward tightly integrated, purpose-built AI accelerators where compute and memory are co-optimized. The benefits would likely include predictable supply, potential performance gains through tighter hardware-software coupling, and a streamlined manufacturing process. However, the arrangement would raise considerations about supply resilience, competitive dynamics, and pricing that must be managed through thoughtful contracting, contingency planning, and ongoing evaluation of alternative memory pathways.

For stakeholders evaluating or following Maia, recommended actions include:

– Seek official confirmation and technical disclosures from Microsoft and SK Hynix to clarify scope, terms, and roadmap.

– Assess supply chain risk and develop mitigation strategies, including potential secondary memory options or phased memory configurations.

– Monitor software ecosystem development and compiler toolchains to gauge whether Maia delivers on performance promises in real-world workloads.

– Consider broader procurement strategies that balance the benefits of exclusivity with the value of diversification for future AI hardware needs.

References

- Original: https://www.techspot.com/news/111123-microsoft-maia-ai-chip-ambitions-might-include-exclusive.html

