TLDR

• Core Points: Prometheus pulls (scrapes) metrics from HTTP endpoints that serve the text exposition format; careful endpoint design improves reliability, observability, and performance.

• Main Content: The article explains how Prometheus scrapes work, common pitfalls, and practical strategies to optimize metrics endpoints for clarity, durability, and scalability.

• Key Insights: Proper labeling, consistent namespaces, clean metric names, and robust scrape configurations reduce confusion and improve alerting.

• Considerations: Network reliability, scraping cadence, target availability, and security implications must be balanced.

• Recommended Actions: Audit metrics endpoints, standardize naming, configure scrape intervals and timeouts, and implement rate limiting and security controls.

Content Overview

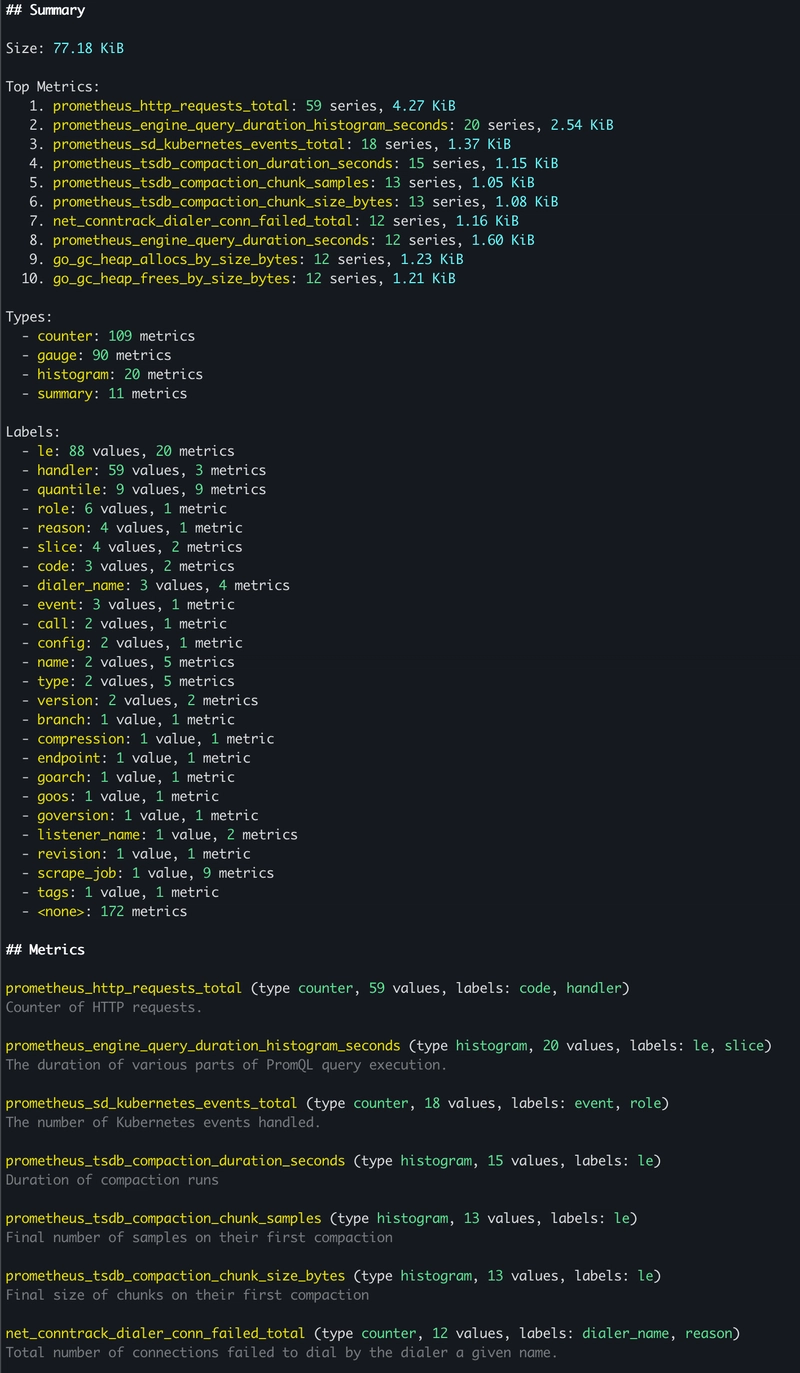

Prometheus is a widely adopted monitoring system that operates on a pull-based model. Unlike systems where services push metrics to a central collector, Prometheus periodically scrapes metrics from a set of targets. Each target exposes an HTTP endpoint, typically /metrics, containing a plain-text document in the Prometheus exposition format. This document enumerates the metrics the service wishes to expose, along with metadata such as help text and data type.

In practice, a scrape is a simple HTTP GET request to a target’s metrics endpoint. A successful response includes lines that define and describe metrics, using a recognizable pattern:

– A HELP line gives a human-readable description, for example: `# HELP http_requests_total Total number of HTTP requests.`

– A TYPE line declares the metric type: counter, gauge, histogram, summary, or untyped.

– One or more sample lines follow, each with a metric name, optional labels, and a value, for example: `http_requests_total{method="GET",code="200"} 1027`
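To make the three line types concrete, here is a minimal Python sketch that renders a single counter family in the text format. The helpers `render_counter`, `escape_help`, `escape_label_value`, and `format_value` are hypothetical names for illustration; in practice an official client library (for Python, `prometheus_client`) generates this text for you. The escaping follows the text-format rules: label values escape backslash, double quote, and newline, while HELP text escapes only backslash and newline.

```python
import math


def escape_label_value(value: str) -> str:
    # Label values must escape backslash, double quote, and newline.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def escape_help(text: str) -> str:
    # HELP text escapes only backslash and newline.
    return text.replace("\\", "\\\\").replace("\n", "\\n")


def format_value(value: float) -> str:
    # Special float values use the spellings the text format expects.
    if math.isnan(value):
        return "NaN"
    if math.isinf(value):
        return "+Inf" if value > 0 else "-Inf"
    # Print whole numbers without a trailing ".0" for readability.
    return str(int(value)) if float(value).is_integer() else repr(float(value))


def render_counter(name: str, help_text: str,
                   samples: dict[tuple[tuple[str, str], ...], float]) -> str:
    """Render one counter family as HELP, TYPE, and sample lines (hypothetical helper)."""
    lines = [f"# HELP {name} {escape_help(help_text)}", f"# TYPE {name} counter"]
    for labels, value in samples.items():
        pairs = ",".join(f'{k}="{escape_label_value(v)}"' for k, v in labels)
        if pairs:
            lines.append(f"{name}{{{pairs}}} {format_value(value)}")
        else:
            lines.append(f"{name} {format_value(value)}")
    return "\n".join(lines) + "\n"


print(render_counter(
    "http_requests_total",
    "Total number of HTTP requests.",
    {(("method", "GET"), ("code", "200")): 1027},
), end="")
# Prints:
# # HELP http_requests_total Total number of HTTP requests.
# # TYPE http_requests_total counter
# http_requests_total{method="GET",code="200"} 1027
```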

This exposition format is deliberately straightforward, designed to be both human-readable and machine-parseable. However, the practical reality of real-world systems means that operators must carefully design and maintain metrics endpoints to ensure data quality, observability, and system performance. Misconfigured or noisy endpoints can lead to misleading dashboards, unstable alerts, and wasted storage and compute resources.
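A metrics endpoint is just an HTTP handler, and a scrape is just a GET bounded by a timeout. The following standard-library-only Python sketch serves a static payload at `/metrics` and scrapes it once. The payload, `MetricsHandler`, `scrape`, and `demo` are illustrative assumptions; a real service would render the text from live instrumentation on every request. The `Content-Type` shown is the one used for version 0.0.4 of the text format.

```python
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Static, hypothetical payload; a real service renders this from live instrumentation.
PAYLOAD = (
    "# HELP http_requests_total Total number of HTTP requests.\n"
    "# TYPE http_requests_total counter\n"
    'http_requests_total{method="GET",code="200"} 1027\n'
)


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = PAYLOAD.encode("utf-8")
        self.send_response(200)
        # Content type for version 0.0.4 of the Prometheus text format.
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format: str, *args) -> None:
        pass  # silence per-request logging for the demo


def scrape(url: str, timeout_s: float = 10.0) -> tuple[str, str]:
    """One scrape: a plain HTTP GET bounded by a timeout. Returns (content type, body)."""
    with urllib.request.urlopen(url, timeout=timeout_s) as resp:
        return resp.headers.get("Content-Type", ""), resp.read().decode("utf-8")


def demo() -> tuple[str, str]:
    # Port 0 asks the OS for any free port.
    server = ThreadingHTTPServer(("127.0.0.1", 0), MetricsHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        host, port = server.server_address[:2]
        return scrape(f"http://{host}:{port}/metrics")
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    content_type, body = demo()
    print(content_type)
    print(body, end="")
```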

Prometheus’ scrape behavior is governed by its configuration: the list of targets (static or from service discovery), the scrape interval, the scrape timeout, and relabeling rules. Global settings define defaults for how often Prometheus fetches data and how long it waits for a response, and each scrape job can override them; relabeling rules transform or filter targets before a scrape and metrics as they are ingested. Beyond basic collection, Prometheus has a broad ecosystem of exporters and client instrumentation libraries that help populate metrics across diverse environments.
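The global defaults are `scrape_interval: 1m` and `scrape_timeout: 10s`, and Prometheus refuses to load a scrape job whose timeout exceeds its interval. The Python sketch below encodes that one rule so it could run in CI against values taken from your own configuration. `parse_duration` and `check_scrape_settings` are hypothetical helpers, and the parser deliberately handles only a subset of Prometheus duration syntax (`ms`, `s`, `m`, `h`).

```python
import re

# Only a subset of Prometheus duration units; real configs also accept d, w, and y.
_UNIT_SECONDS = {"ms": 0.001, "s": 1, "m": 60, "h": 3600}
_DURATION = re.compile(r"(?:\d+(?:ms|s|m|h))+")
_PART = re.compile(r"(\d+)(ms|s|m|h)")


def parse_duration(text: str) -> float:
    """Convert durations such as '15s', '1m30s', or '500ms' to seconds."""
    if not _DURATION.fullmatch(text):
        raise ValueError(f"unsupported duration: {text!r}")
    return sum(int(n) * _UNIT_SECONDS[unit] for n, unit in _PART.findall(text))


def check_scrape_settings(interval: str = "1m", timeout: str = "10s") -> list[str]:
    """Return problems with an interval/timeout pair; defaults mirror Prometheus' global defaults."""
    problems = []
    interval_s, timeout_s = parse_duration(interval), parse_duration(timeout)
    if timeout_s > interval_s:
        problems.append(f"scrape_timeout {timeout} exceeds scrape_interval {interval}")
    return problems


print(check_scrape_settings())              # []
print(check_scrape_settings("15s", "20s"))  # ['scrape_timeout 20s exceeds scrape_interval 15s']
```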

This article delves into the fundamentals of Prometheus scrapes, examines common challenges faced when exposing metrics, and offers best practices for designing reliable endpoints. It aims to provide practical guidance for operators, developers, and site reliability engineers who want to enhance visibility into distributed systems without compromising performance or reliability.

In-Depth Analysis

Prometheus’ pull model offers several advantages: simpler network topologies, less risk of metric backpressure on the services themselves, a built-in health signal (a failed scrape is itself informative), and the ability to orchestrate scrapes across dynamic environments. However, this approach also introduces a set of practical considerations that teams must address to maintain clean, actionable metrics.

1) Endpoint Design and Exposure

– Consistency: Each target should expose metrics in a consistent format and naming scheme. Inconsistent metric names, labels, or units complicate aggregation and alerting. Establish a centralized naming convention and enforce it across services.

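– Namespacing and Labels: Prefix metric names with a namespace for the application or library (e.g., `myapp_http_requests_total`) and express dimensions such as environment or region as labels, so queries can slice the data flexibly. Avoid unbounded label values (user IDs, request IDs, raw URLs), which create high cardinality and memory pressure in Prometheus.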

– Metrics Scope: Expose only useful metrics on the /metrics endpoint. Unnecessary or highly volatile metrics can bloat the data stream, increase scrape load, and complicate analysis.

– HELP and TYPE Metadata: Provide informative HELP text and accurate TYPE declarations for each metric. This improves readability in dashboards and aids new contributors in understanding the data.
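These conventions are easy to check mechanically. The Python sketch below lints one metric definition against the classic character rules (metric names match `[a-zA-Z_:][a-zA-Z0-9_:]*`, label names match `[a-zA-Z_][a-zA-Z0-9_]*`, label names starting with `__` are reserved, and colons are reserved for recording rules), the `_total` suffix that the Prometheus naming guidelines recommend for counters, and the HELP-text rule above. `lint_metric` and the `UNBOUNDED_LABELS` deny-list are hypothetical; the deny-list stands in for whatever policy your team adopts.

```python
import re

METRIC_NAME = re.compile(r"[a-zA-Z_:][a-zA-Z0-9_:]*")
LABEL_NAME = re.compile(r"[a-zA-Z_][a-zA-Z0-9_]*")

# Hypothetical team policy: label names that usually carry unbounded values.
UNBOUNDED_LABELS = frozenset({"user_id", "request_id", "session_id", "email"})


def lint_metric(name: str, metric_type: str, label_names: list[str], help_text: str) -> list[str]:
    """Return a list of naming and labeling problems for one metric definition."""
    issues = []
    if not METRIC_NAME.fullmatch(name):
        issues.append(f"{name}: invalid metric name")
    if ":" in name:
        issues.append(f"{name}: colons are reserved for recording rules")
    if metric_type == "counter" and not name.endswith("_total"):
        issues.append(f"{name}: counter names should end in _total")
    if not help_text.strip():
        issues.append(f"{name}: missing HELP text")
    for label in label_names:
        if not LABEL_NAME.fullmatch(label):
            issues.append(f"{name}: invalid label name {label!r}")
        elif label.startswith("__"):
            issues.append(f"{name}: label {label!r} uses the reserved __ prefix")
        elif label in UNBOUNDED_LABELS:
            issues.append(f"{name}: label {label!r} is likely unbounded (high cardinality)")
    return issues


print(lint_metric("http_requests_total", "counter", ["method", "code"],
                  "Total number of HTTP requests."))  # []
print(lint_metric("http-requests", "counter", ["user_id"], ""))
```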

2) Performance and Reliability

– Scrape Interval and Timeout: Balance the cadence of scrapes with the cost of scraping. Short intervals improve timeliness but increase load on targets and Prometheus. Configure sensible timeouts to avoid long-tail delays during network issues.

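– Retries and Gaps: Prometheus does not retry a failed scrape; it records `up` as 0 for that attempt and simply tries again at the next interval. Keep collection cheap and idempotent (reading /metrics should never change state) so every scrape returns a consistent snapshot. Because counters are cumulative, a missed scrape reduces resolution rather than losing counts, and functions such as `rate()` span the gap.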

– Target Health and Discovery: Use service discovery mechanisms that reflect the current topology (Kubernetes endpoints, cloud instances, or static targets). Misaligned targets lead to stale data or missed scrapes.

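– Resource Utilization: Ingestion consumes memory and CPU roughly in proportion to the number of active series and how often they are scraped. Monitor Prometheus server resources and consider sharding or federation for very large deployments to spread load; for availability, run redundant Prometheus replicas so that a single server is not a single point of failure.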
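Load scales with active series times scrape frequency, and the worst-case series count for one metric is the product of the number of distinct values of each of its labels. The Python arithmetic below uses made-up label counts, target counts, and intervals purely for illustration, and the helper names are hypothetical. Keep in mind that each histogram bucket is its own series, and Prometheus also adds a few series per target itself (such as `up` and `scrape_duration_seconds`).

```python
from math import prod


def series_upper_bound(label_value_counts: dict[str, int]) -> int:
    """Worst case for one metric: every combination of label values appears.

    prod() of an empty iterable is 1, i.e. a metric with no labels is one series.
    """
    return prod(label_value_counts.values())


def samples_per_second(series_per_target: int, targets: int, scrape_interval_s: float) -> float:
    """Each series yields one sample per successful scrape of each target."""
    return series_per_target * targets / scrape_interval_s


# Hypothetical example: one metric with three labels, scraped from 200 targets.
series = series_upper_bound({"method": 5, "code": 10, "handler": 40})  # 5 * 10 * 40 = 2000
rate_15s = samples_per_second(series, targets=200, scrape_interval_s=15)
rate_5s = samples_per_second(series, targets=200, scrape_interval_s=5)  # 3x the load
print(series, round(rate_15s), round(rate_5s))  # 2000 26667 80000
```

Adding one more label with 20 values would multiply the bound by 20, which is why unbounded labels are the usual culprit behind memory pressure.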

3) Data Quality and Cardinality

– Label Strategy: Avoid excessively high cardinality by limiting dynamic labels. Each unique combination of label values contributes to memory usage and query time. Plan labels with long-term scalability in mind.

– Numerical Semantics: Use base units (seconds, bytes) consistently, put the unit in the metric name (e.g., `_seconds`, `_bytes`), and avoid mixing aggregations across unrelated metrics. Normalize units at the source where possible.

– Counter Resets: Counters drop back to zero when a process restarts. Query them with `rate()`, `irate()`, or `increase()`, which detect resets and compensate for them, rather than graphing raw counter values that appear to fall after every restart or rollback.
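The reset handling that `rate()` and `increase()` perform can be sketched in a few lines of Python: whenever a sample is lower than the one before it, the counter is assumed to have reset, and the new value is counted as increase from zero. This is a simplification for illustration with a hypothetical helper name; the real PromQL functions also extrapolate to the edges of the range window, which this sketch omits.

```python
def increase_with_resets(samples: list[float]) -> float:
    """Sum the increase across consecutive counter samples, treating any drop as a reset."""
    total = 0.0
    for prev, cur in zip(samples, samples[1:]):
        if cur >= prev:
            total += cur - prev
        else:
            # Counter restarted from zero (e.g. process restart): all of `cur` is new.
            total += cur
    return total


samples = [100, 150, 200, 20, 60]       # a restart happened between 200 and 20
print(samples[-1] - samples[0])         # naive last-minus-first: -40
print(increase_with_resets(samples))    # 50 + 50 + 20 + 40 = 160.0
```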

4) Security and Observability

– Access Control: Protect metrics endpoints from unauthorized access. Use authentication, IP allowlists, or network policies where appropriate, especially in multi-tenant or public-cloud environments.

– Data Exposure: Be mindful of exposing sensitive information in metrics, such as internal identifiers or credentials embedded in labels. Apply redaction or use separate, restricted endpoints for sensitive data.

– Telemetry Hygiene: Regularly audit exposed metrics to ensure compliance with governance and privacy policies. Remove deprecated metrics and retire unused exporters to minimize attack surface and confusion.
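One way to enforce the data-exposure rule is to strip sensitive labels inside the service before the payload is rendered. The Python sketch below drops labels on a hypothetical `SENSITIVE_LABELS` deny-list and sums the series that collapse onto the same remaining label set; merging is needed because two samples with identical names and labels would be duplicates. Summing is only correct for counters and additive gauges, so treat `redact` as an illustration of the idea; the cleaner fix is never attaching such labels at all. On the Prometheus side, a `labeldrop` action in `metric_relabel_configs` removes labels after the scrape, but by then the values have already crossed the network.

```python
from collections import defaultdict

Labels = tuple[tuple[str, str], ...]

# Hypothetical policy: label names that must never leave the service.
SENSITIVE_LABELS = frozenset({"api_key", "email", "session_token"})


def redact(samples: dict[Labels, float],
           sensitive: frozenset[str] = SENSITIVE_LABELS) -> dict[Labels, float]:
    """Drop sensitive labels and sum the series that collapse onto the same label set.

    Summing is only meaningful for counters and additive gauges.
    """
    merged: dict[Labels, float] = defaultdict(float)
    for labels, value in samples.items():
        kept = tuple((k, v) for k, v in labels if k not in sensitive)
        merged[kept] += value
    return dict(merged)


raw = {
    (("code", "200"), ("email", "a@example.com")): 3.0,
    (("code", "200"), ("email", "b@example.com")): 4.0,
    (("code", "500"), ("email", "a@example.com")): 1.0,
}
print(redact(raw))  # {(('code', '200'),): 7.0, (('code', '500'),): 1.0}
```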

5) Failed Scrapes and Alerting

– Scrape Failures as Signals: Treat scrape failures as observable events that warrant attention. Configure alerting rules that surface consistent failures (e.g., `up == 0` for a target, or `scrape_duration_seconds` approaching the scrape timeout) and distinguish transient issues from persistent problems.

– Latency and Throughput: Slow scrapes deliver data late, and a scrape that exceeds the scrape timeout fails outright. Monitor scrape durations and tune exporters to reduce processing time on the target side.

– Backfill and Gaps: Plan for data gaps during outages. Use synthetic checks or redundancy to minimize blind spots and ensure continuity of critical dashboards.
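Alerting on `up == 0` held for a `for:` duration is the usual way to separate a blip from an outage. The Python function below is a rough, hypothetical model of that logic over `(timestamp, up)` samples taken every 15 seconds; actual rule evaluation runs on its own evaluation interval and moves an alert through a pending state before it fires, which this sketch does not model.

```python
def persistently_down(up_samples: list[tuple[float, int]], for_s: float) -> bool:
    """True if `up` has been 0 for at least `for_s` seconds, ending at the latest sample."""
    if not up_samples or up_samples[-1][1] != 0:
        return False
    latest_ts = up_samples[-1][0]
    outage_start = latest_ts
    # Walk backwards to find where the current run of failed scrapes began.
    for ts, up in reversed(up_samples):
        if up != 0:
            break
        outage_start = ts
    return latest_ts - outage_start >= for_s


blip = [(0, 1), (15, 0), (30, 1)]                                  # one failed scrape, then recovery
outage = [(0, 1), (15, 1)] + [(t, 0) for t in range(30, 331, 15)]  # failing from t=30 to t=330
print(persistently_down(blip, for_s=300))    # False
print(persistently_down(outage, for_s=300))  # True
```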

6) Best Practices for End-to-End Health

– Standardized Instrumentation: Prefer standard libraries and instrumentations for common frameworks and languages to ensure consistent metric formats and semantics.

– Documentation: Maintain up-to-date documentation for each service’s metrics, including a glossary of metric names, labels, and intended use cases.

– Versioning and Change Management: Track changes to metrics exposure. Version endpoints or maintain backward-compatible changes to avoid breaking dashboards and alerts.

– Testing and Validation: Implement tests for metrics correctness, including unit tests for instrumentation and integration tests that verify the metrics endpoint behaves as expected under typical and failure scenarios.
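A metrics test can be as simple as parsing the endpoint’s output and asserting that every metric family carries both HELP and TYPE lines. The Python sketch below does that with the standard library only; the sample payload, `family_of`, and `families_missing_metadata` are hypothetical, and the parser handles only the simple cases shown (including the `_bucket`, `_sum`, and `_count` series of histograms and summaries). It is not a full exposition-format parser. Run it with `python -m unittest <file>`.

```python
import re
import unittest

# Hypothetical payload captured from a service's /metrics endpoint.
EXPOSITION = """\
# HELP http_requests_total Total number of HTTP requests.
# TYPE http_requests_total counter
http_requests_total{method="GET",code="200"} 1027
# HELP request_duration_seconds Request latency.
# TYPE request_duration_seconds histogram
request_duration_seconds_bucket{le="0.1"} 90
request_duration_seconds_bucket{le="+Inf"} 100
request_duration_seconds_sum 7.5
request_duration_seconds_count 100
"""

SAMPLE = re.compile(r"([a-zA-Z_:][a-zA-Z0-9_:]*)(\{.*\})?\s+\S+")


def family_of(name: str, typed: set[str]) -> str:
    """Map a sample name to its family, e.g. foo_bucket -> foo for a histogram foo."""
    if name in typed:
        return name
    for suffix in ("_bucket", "_sum", "_count"):
        if name.endswith(suffix) and name[: -len(suffix)] in typed:
            return name[: -len(suffix)]
    return name


def families_missing_metadata(text: str) -> set[str]:
    """Return metric families that have samples but lack a HELP or TYPE line."""
    lines = text.splitlines()
    helped = {ln.split()[2] for ln in lines if ln.startswith("# HELP ") and len(ln.split()) > 2}
    typed = {ln.split()[2] for ln in lines if ln.startswith("# TYPE ") and len(ln.split()) > 2}
    missing = set()
    for line in lines:
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        match = SAMPLE.match(line)
        if match is None:
            missing.add(f"<unparseable: {line}>")
            continue
        family = family_of(match.group(1), typed)
        if family not in helped or family not in typed:
            missing.add(family)
    return missing


class MetricsEndpointTest(unittest.TestCase):
    def test_every_family_has_help_and_type(self) -> None:
        self.assertEqual(families_missing_metadata(EXPOSITION), set())

    def test_detects_missing_metadata(self) -> None:
        self.assertEqual(families_missing_metadata("orphan_metric 1\n"), {"orphan_metric"})
```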

7) Operationalizing at Scale

– Federation and Sharding: For large-scale deployments, consider federating Prometheus instances or sharding data to avoid performance bottlenecks and to improve query performance across regions or teams.

– Exporters and Custom Metrics: When integrating with legacy systems, use exporters to translate metrics into Prometheus-compatible formats. Ensure exporters themselves are reliable and well-maintained.

– Observability of Observability: Treat the monitoring stack as a system worth observing. Monitor Prometheus performance, alert routing, and dashboard health to prevent blind spots in your own monitoring.
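Horizontal sharding usually means each Prometheus server scrapes only the targets whose hashed address falls into its shard, which Prometheus configurations typically express with a `hashmod` relabel action followed by a `keep` action on the result. The Python sketch below shows the idea with hypothetical helpers `shard_for` and `split_targets` and hypothetical target addresses; the hash-to-integer step is illustrative rather than a byte-for-byte copy of Prometheus’ implementation.

```python
import hashlib


def shard_for(target: str, shards: int) -> int:
    """Map a target address to a shard index in [0, shards) using a stable hash."""
    digest = hashlib.md5(target.encode("utf-8")).digest()
    # Interpret the last 8 digest bytes as an unsigned integer, then take the modulus.
    return int.from_bytes(digest[-8:], "big") % shards


def split_targets(targets: list[str], shards: int) -> dict[int, list[str]]:
    """Group targets by shard; server i would keep only assignment[i]."""
    assignment: dict[int, list[str]] = {i: [] for i in range(shards)}
    for target in targets:
        assignment[shard_for(target, shards)].append(target)
    return assignment


targets = [f"10.0.0.{i}:9100" for i in range(12)]  # hypothetical target addresses
for shard, owned in split_targets(targets, shards=3).items():
    print(shard, owned)
```

Because the hash depends only on the target address, assignments stay stable across restarts; changing the shard count reshuffles most targets, so it pays to choose shard counts with headroom.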

*Image source: Unsplash*

The practical upshot is that the quality of your metrics endpoints directly impacts visibility, incident response, and operational efficiency. By aligning endpoint design with scalable data practices, teams can derive clearer insights with fewer false alarms and smoother maintenance cycles.

Perspectives and Impact

The Prometheus scraping model has become foundational in modern cloud-native environments. Its pull-based approach aligns well with dynamic architectures such as microservices and containerized workloads, where services scale up or down at any time. Exposing metrics on scrapeable endpoints is convenient and scalable when done with discipline.

Looking ahead, several trends shape how teams should approach metrics endpoints:

– Increasing emphasis on standardized instrumentation across teams to reduce fragmentation and duplication of metrics.

– Growing importance of controlling metric cardinality as systems expand, which requires careful planning of labels and aggregation strategies.

– Greater adoption of federation and multi-cluster monitoring to manage data gravity and performance in large organizations.

– Enhanced security practices for exposing metrics, including encryption, authentication, and network segmentation to protect sensitive telemetry.

The implications for practitioners are clear: invest in consistent naming, robust endpoint hygiene, and scalable architectures that support reliable scraping and meaningful analysis. As systems evolve, so too should the instrumentation strategy, ensuring that Prometheus continues to provide timely, accurate, and actionable insights.

Key Takeaways

Main Points:

– Prometheus uses a pull-based scraping model where targets expose metrics at /metrics in the Prometheus exposition format.

– Endpoint quality, naming consistency, and label management are critical to scalable observability.

– Scrape reliability, performance, and security must be balanced to maintain data quality.

Areas of Concern:

– High cardinality from labels can strain memory and queries.

– Misconfigured scrapes can lead to hidden outages or misleading dashboards.

– Exposure of sensitive data through metrics labels requires careful governance.

Summary and Recommendations

To maximize the value of Prometheus scrapes, organizations should implement a disciplined approach to metrics endpoints. Start by establishing a centralized naming convention, namespace strategy, and label-usage policy to prevent unmanageable cardinality. Ensure HELP and TYPE metadata accompanies every metric, and keep the /metrics surface lean by exposing only what is essential for monitoring and alerting.

Configure scrape intervals and timeouts in alignment with system performance and network reliability, and enable robust target discovery to reflect real-time topology changes. Security considerations should guide access controls and data exposure, with encryption and authentication where appropriate.

Invest in exporter and instrumentation practices that are standardized across teams to simplify cross-service analysis. Regularly audit and retire outdated metrics, and implement testing (both unit and integration) to validate metrics behavior under normal and failure scenarios. Finally, consider scalable architectures such as federation or sharding for large deployments to maintain query performance and resilience as data volume grows.

By adhering to these practices, operators can achieve clearer visibility into distributed systems, faster incident response, and more reliable, scalable monitoring with Prometheus.

References

- Original: https://dev.to/frosnerd/taming-prometheus-scrapes-understanding-and-analyzing-your-metrics-endpoints-ik5

- Additional references for further reading:

- Prometheus: The Definitive Guide to Monitoring with Prometheus

- Kubernetes Monitoring with Prometheus: Best Practices for Metrics, Scrapes, and Alerts

- OpenTelemetry: Standardized Observability for Instrumentation and Metrics
