TLDR

• Core Points: Vish AI offers immediate mental health support without waitlists, high costs, or judgment, addressing gaps in traditional care and crisis resources.

• Main Content: It combines AI-assisted coaching and empathetic conversation to provide accessible, ongoing support, complementing existing services.

• Key Insights: Accessibility, cost reduction, and scalability are central, but safety, accuracy, and ethical considerations must be managed.

• Considerations: Ensure privacy, maintain clear boundaries with crisis needs, and integrate with professional care pathways.

• Recommended Actions: Explore Vish AI as a supplementary tool, verify data handling practices, and monitor user outcomes alongside traditional care options.

Content Overview

Vish AI presents itself as a mental health companion that is available at any time and removes common barriers to traditional care such as waitlists, high costs, and stigma. The initiative responds to alarming statistics about mental health prevalence and the limitations of current systems. Approximately 332 million people worldwide live with depression, and suicide remains a leading cause of death among young people aged 15-29. Traditional therapy often involves lengthy wait times and substantial expense, with typical sessions costing between $100 and $250. Crisis hotlines are built for emergencies rather than ongoing support, and many mental health apps see high abandonment rates, which undermines their long-term effectiveness.

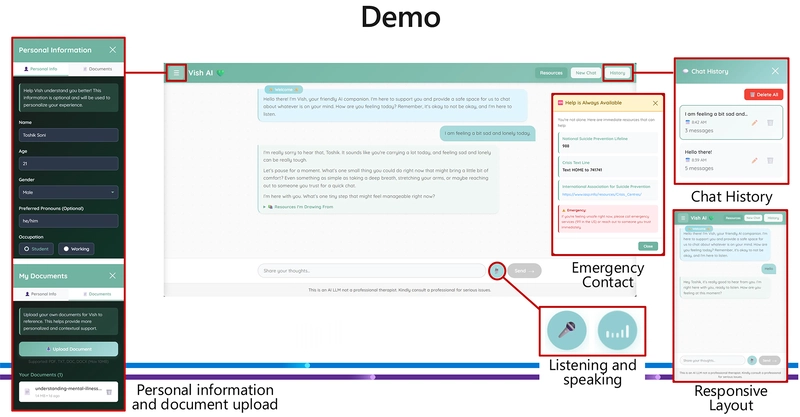

The project is positioned as a scalable, affordable, and judgment-free option that users can access continuously. By leveraging GitHub Copilot CLI, Vish AI aims to streamline development and deployment, enabling rapid iteration and broader availability. The core objective is to fill gaps left by conventional care models, offering a steady, supportive presence that can assist with mood tracking, coping strategies, psychoeducation, and reflective exercises.

In-Depth Analysis

Vish AI is conceived as a pragmatic response to several systemic barriers in mental health care. First, accessibility: many individuals face long waitlists or lack of local providers, especially in underserved regions. Vish AI proposes a constant, non-judgmental presence, reducing the friction associated with reaching out for help. Second, affordability: therapy often imposes significant financial burdens, which can deter people from seeking or maintaining treatment. An AI-based companion could lower ongoing costs and democratize access to supportive resources. Third, user engagement: mental health apps frequently suffer from high attrition rates, as users lose motivation or encounter ineffective content. Vish AI emphasizes ongoing availability and potentially personalized interactions to sustain engagement.

The technical approach likely emphasizes natural language understanding, empathetic dialogue, and evidence-based coping strategies. The integration with GitHub Copilot CLI suggests a focus on rapid development cycles, modular components, and the ability to deploy lightweight, iterative features. Important considerations for such a system include safety, privacy, data security, and the risk of misinformation. Ensuring that interactions are supportive without overstepping professional boundaries is critical. The platform should also be designed to recognize crisis indicators and provide appropriate escalation pathways, such as directing users to professional resources or crisis lines when necessary.
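The escalation pathway described above can be illustrated with a minimal sketch. It assumes a simple phrase screen that runs before any conversational reply is generated. The phrase list, the `Routing` type, and the `route_message` function are hypothetical names introduced here for illustration and are not taken from Vish AI. Keyword matching is a crude stand-in; a real deployment would need clinically validated risk detection and locally appropriate crisis resources.

```python
from dataclasses import dataclass

# Hypothetical phrase list, for illustration only. Keyword matching misses
# many real crisis signals and should not be relied on in production.
CRISIS_PHRASES = ("kill myself", "end my life", "suicide", "hurt myself")

# Placeholder wording; a real system would point to region-specific resources.
ESCALATION_MESSAGE = (
    "It sounds like you may be in crisis. Please contact your local emergency "
    "services or a crisis line right now."
)


@dataclass
class Routing:
    escalate: bool  # True when the message should bypass normal conversation
    reply: str      # Escalation text, or empty when no escalation is needed


def route_message(text: str) -> Routing:
    """Return an escalation reply if the message contains a crisis phrase."""
    lowered = text.lower()
    if any(phrase in lowered for phrase in CRISIS_PHRASES):
        return Routing(escalate=True, reply=ESCALATION_MESSAGE)
    return Routing(escalate=False, reply="")
```

Running the screen ahead of the conversational model keeps the escalation decision independent of whatever the model would otherwise say, which is one way to keep the boundary between companionship and crisis care explicit.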

From a clinical perspective, Vish AI can function best as a complement to traditional care, not a replacement. It can help users build routine mental health practices, track mood and triggers, and offer coping strategies grounded in psychological principles. The value proposition rests on consistency, accessibility, and a user-centric design that respects individual differences in symptoms, cultural context, and preferences for support.

The article frames the potential impact of such a tool in public health terms. If scaled responsibly, Vish AI could reduce the burden on overtaxed healthcare systems, provide first-line support in non-crisis moments, and improve adherence to self-management plans. To realize these benefits, however, developers must navigate regulatory environments, establish clear disclaimers, and implement robust safety nets. They should also consider data ownership, consent, and transparent communication about the limitations of AI-driven mental health guidance.

Ethical considerations are central to deployment. Users must retain autonomy and control over their data, with explicit consent for how information is stored and used. The financial model should be transparent, ensuring that cost advantages do not come at the expense of user trust or safety. Developers should engage with mental health professionals to validate content, incorporate evidence-based strategies (such as cognitive-behavioral techniques, mindfulness, and crisis planning), and establish age-appropriate safeguards. Additionally, accessibility features—such as language options, readability adjustments, and assistive technology compatibility—are essential to reach diverse populations.

Future implications include the potential integration of Vish AI with broader health ecosystems, enabling more seamless referrals to clinicians, teletherapy platforms, or community support networks. As AI-assisted mental health tools evolve, ongoing research will be needed to understand their impact on outcomes, adherence, and the user experience. Responsible innovation requires continuous monitoring, user feedback loops, and rigorous evaluation against established benchmarks in mental health care.

Perspectives and Impact

Vish AI is situated at the intersection of technology and mental health care, aiming to democratize access to supportive conversations. Its promise lies in providing non-judgmental companionship, day or night, which could help users feel less isolated and more empowered to engage in self-care practices. If scaled effectively, such a tool might reduce the time to initial support for people who would otherwise delay seeking help due to stigma or logistical hurdles.

*Image source: Unsplash*

A key potential benefit is improved early intervention. By offering immediate coping strategies and psychoeducation, Vish AI could help users manage mild to moderate distress before issues escalate. This proactive approach aligns with preventive mental health strategies, potentially alleviating the burden on crisis services and clinical settings. The tool could also support ongoing self-monitoring, enabling users to notice patterns in mood, sleep, activity, or other indicators and make informed decisions about seeking additional care.
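As a rough illustration of the self-monitoring idea, the sketch below smooths daily mood scores with a rolling average and flags a sustained low stretch. The 1 to 10 scale, the threshold of 4.0, the three-day window, and the function names are all assumptions made for this example; the source does not describe how Vish AI tracks mood.

```python
from statistics import mean


def rolling_mean(scores, window=3):
    """Average each run of `window` consecutive scores, oldest first."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        mean(scores[i - window + 1 : i + 1])
        for i in range(window - 1, len(scores))
    ]


def sustained_low(scores, threshold=4.0, window=3):
    """True if any rolling average is at or below the (assumed) threshold."""
    return any(avg <= threshold for avg in rolling_mean(scores, window))


# Hypothetical week of self-reported mood on an assumed 1-10 scale.
week = [6, 5, 4, 3, 2]
if sustained_low(week):
    print("Mood has trended low; consider reaching out for additional support.")
```

Averaging over a window rather than reacting to a single score avoids prompting a user after one bad day, while still surfacing a downward pattern that may warrant additional care.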

However, the deployment of AI-based mental health support raises important questions. Ensuring privacy and building trust are paramount, as is maintaining the safety and accuracy of the advice provided. The quality of guidance must align with current best practices, and content must be curated to minimize harm, particularly for vulnerable populations. Clinicians and researchers should be involved in validating the platform, with transparent reporting on outcomes, limitations, and the boundaries of AI assistance.

From a societal perspective, the availability of a 24/7 mental health companion could contribute to destigmatization by normalizing help-seeking behaviors and framing mental health as an ongoing aspect of wellness rather than a crisis-only concern. It could also encourage individuals to develop self-management skills and resilience, which are valuable across various contexts, including education, work, and personal relationships.

Looking ahead, the integration of Vish AI with other digital health tools could enhance its utility. For instance, linking mood data with sleep trackers, physical activity monitors, or wearable devices could provide a richer picture of an individual’s mental health landscape. Collaboration with healthcare providers could enable more seamless care coordination, where AI-assisted insights inform clinical decisions and treatment plans. Nevertheless, ensuring responsible use, data governance, and ethical alignment will be essential as these tools become more embedded in health ecosystems.

Key Takeaways

Main Points:

– Vish AI targets accessibility and affordability in mental health support by offering a non-judgmental, always-available companion.

– It is designed to complement traditional care, not replace professional treatment, and to reduce barriers such as waitlists and cost.

– Safety, accuracy, privacy, and ethical use are critical considerations in development and deployment.

Areas of Concern:

– Ensuring the AI provides accurate, helpful, and safe guidance, especially in crisis scenarios.

– Protecting user data and maintaining transparent consent and governance.

– Clarifying the appropriate scope of AI assistance versus the need for human professional care.

Summary and Recommendations

Vish AI represents an ambitious approach to expanding access to mental health support through AI-assisted conversation, leveraging the GitHub Copilot CLI to enable rapid development and deployment. Its core value proposition lies in providing immediate, affordable, and stigma-free companionship that can support individuals between traditional therapy sessions or crisis contacts. By offering continuous availability, it addresses critical gaps in current mental health infrastructures, which commonly suffer from resource constraints and uneven access.

To maximize positive impact, it is essential to frame Vish AI as a complementary tool integrated within a broader care strategy. Clear guidelines should define its role, including when to seek professional help and how to escalate in crisis situations. Robust privacy protections, transparent data practices, and ongoing collaboration with mental health professionals are vital for maintaining trust and safety. Regular evaluations against established mental health outcomes will help demonstrate effectiveness and guide iterative improvements.

If responsibly implemented, Vish AI could contribute to earlier intervention, better self-management, and lower barriers to support, helping individuals manage distress and maintain well-being. Its long-term value and safety will depend on ongoing attention to ethical considerations, safety protocols, and alignment with clinical best practices.

References

- Original: dev.to article detailing Vish AI, a 24/7 mental health companion built with GitHub Copilot CLI

